Stop Delegating to AI. Start Collaborating With It

Collaborative artificial intelligence in marketing means treating AI as a working partner rather than a task executor. The distinction matters more than most frameworks acknowledge: when AI is collaborative, human judgment shapes the output at every stage, not just at the end when something has already gone wrong.

Most marketing teams are not doing this. They are either over-delegating (handing AI a brief and accepting whatever comes back) or under-using it (running AI in isolation from real strategic work). Neither approach produces compounding value. A structured collaboration model does.

Key Takeaways

- Collaborative AI means human judgment is embedded throughout the process, not applied as a final check after the fact.

- Most marketing teams fall into one of two failure modes: over-delegating to AI or keeping it too isolated to generate real value.

- A four-stage collaboration framework, covering framing, generation, evaluation, and integration, prevents both failure modes.

- AI performs best when it is given strategic context, not just task instructions. The quality of your input determines the quality of the output.

- The teams getting the most from AI are not the ones with the most tools. They are the ones with the clearest process for how humans and AI divide responsibility.

In This Article

- What Does Collaborative AI Actually Mean in a Marketing Context?

- Why Most AI Workflows Break Down Before They Start

- A Four-Stage Framework for Collaborative AI in Marketing

- How to Apply This Framework Across Different Marketing Functions

- The Human Roles That Cannot Be Automated

- What Stops Teams From Building a Collaborative Model

- How to Start Building This Into Your Team’s Process

I have been thinking about this since early in my career, long before AI was part of the conversation. When I was starting out around 2000, I wanted to build a website for the business I was working at. The MD said there was no budget. So I taught myself to code and built it anyway. That experience has shaped how I think about tools ever since: the tool is only as useful as the person operating it, and the person operating it needs to understand what they are trying to achieve before they touch anything. That principle applies to AI more than almost anything else I have encountered in 20 years of marketing.

What Does Collaborative AI Actually Mean in a Marketing Context?

The word “collaborative” is doing a lot of work in this conversation, and it is worth being precise. Collaborative AI is not a product category. It is a working model. It describes how humans and AI systems divide cognitive labour across a task, with each contributing what they are genuinely better at.

AI is better at speed, pattern recognition across large data sets, generating multiple options quickly, and maintaining consistency at scale. Humans are better at commercial judgment, reading context that is not in the data, understanding what a client or audience actually cares about, and knowing when a technically correct answer is strategically wrong.

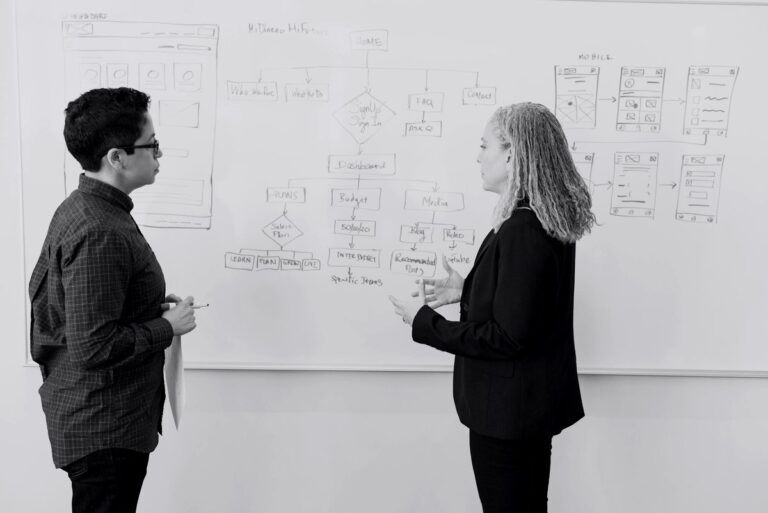

Collaborative AI means designing your workflow so that both sets of capabilities are active at the right moments. It is not about AI doing the thinking and humans approving. It is about humans framing the problem, AI generating options, humans evaluating against criteria that require judgment, and the combination producing something better than either could alone.

If you want a broader picture of where AI sits in marketing right now, the AI Marketing hub on The Marketing Juice covers the landscape across strategy, tools, and commercial application.

Why Most AI Workflows Break Down Before They Start

The most common failure I see is teams treating AI like a vending machine. You put in a prompt, you get out a deliverable. The problem is that a vending machine does not know what you actually need. It just responds to the input you gave it.

I ran agencies for a long time, and one of the things I learned early is that a brief is not just an instruction. A brief is a transfer of understanding. When a strategist briefs a creative team, the goal is not just to communicate a task. It is to transfer enough context, commercial awareness, and audience insight that the creative team can make good decisions independently when something unexpected comes up. AI needs exactly the same thing, and most people are not giving it.

The other common failure is the opposite: treating AI as a novelty that sits outside the real workflow. I have seen marketing teams spend months experimenting with AI tools in isolation, producing demos that impress in a meeting and then disappear into a folder. That is not collaboration. That is theatre. And marketing has enough theatre already.

Semrush has a useful overview of how AI is being applied across marketing functions if you want to map where your team currently sits against the broader adoption picture.

A Four-Stage Framework for Collaborative AI in Marketing

What follows is not a technology recommendation. It is a process model. It works across content, paid media, SEO, and campaign strategy, and it scales from a team of three to a team of three hundred. The four stages are: Frame, Generate, Evaluate, Integrate.

Stage 1: Frame

This is the stage most teams skip, and it is the stage that determines everything. Framing means defining the problem before you open any AI tool. What are you trying to achieve commercially? Who is the audience, specifically? What does success look like, and how will you measure it? What constraints exist, whether that is brand tone, regulatory requirements, or competitive positioning?

When I was managing hundreds of millions in ad spend across 30 industries, what separated the campaigns that worked from the ones that did not was almost always the quality of the brief, not the quality of the execution. Execution is a multiplier. If the brief is wrong, better execution just gets you to the wrong place faster.

For AI, the frame is your prompt architecture. It includes the context, the constraints, the audience, the goal, and the format. A good frame takes ten minutes to write. A bad one takes ten seconds and produces output you cannot use.
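For teams that assemble prompts programmatically, the frame can be sketched as a simple template. This is a minimal illustration under stated assumptions, not a prescribed tool: the `Frame` class, its field names and the `missing()` check are hypothetical, chosen to mirror the elements listed above (context, constraints, audience, goal, format) plus the "what does success look like" question from the framing stage.

```python
from dataclasses import dataclass, field


@dataclass
class Frame:
    """Hypothetical brief structure; field names are illustrative, not a standard."""
    goal: str                     # the commercial objective
    audience: str                 # who, specifically
    success_metric: str           # how success will be measured
    context: str = ""             # background the AI would not otherwise have
    constraints: list[str] = field(default_factory=list)  # tone, regulatory, positioning
    output_format: str = ""       # the shape the output should take
    variants: int = 3             # ask for several options, not one answer (assumed default)

    def missing(self) -> list[str]:
        """Return the names of frame elements that were left blank."""
        checks = {
            "goal": self.goal,
            "audience": self.audience,
            "success_metric": self.success_metric,
            "context": self.context,
            "constraints": self.constraints,
            "output_format": self.output_format,
        }
        return [name for name, value in checks.items() if not value]

    def to_prompt(self) -> str:
        """Render the frame as a single prompt string."""
        lines = [
            f"Goal: {self.goal}",
            f"Audience: {self.audience}",
            f"Success looks like: {self.success_metric}",
            f"Context: {self.context}",
            "Constraints:",
            *[f"- {c}" for c in self.constraints],
            f"Format: {self.output_format}",
            f"Give {self.variants} distinct options, each taking a different angle.",
        ]
        return "\n".join(lines)


if __name__ == "__main__":
    # Hypothetical "ten-second" brief: most frame elements are left blank.
    quick = Frame(goal="Write an ad", audience="", success_metric="")
    print(quick.missing())
```

The `missing()` check is the point of the design: a ten-second brief shows up as a list of empty elements before anything reaches an AI tool. The `variants` default reflects the Stage 2 advice to ask for several options rather than one answer; the number three is an arbitrary assumption.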

Stage 2: Generate

This is where AI contributes most visibly. Given a well-constructed frame, AI can generate options at a speed and volume that no human team can match. The important word is options. The goal of the generation stage is not to produce a finished output. It is to produce a set of candidates that a human can evaluate against strategic criteria.

In practice, this means asking AI for multiple variants rather than one answer. It means using AI to explore angles you would not have considered, not just to execute the angle you already had in mind. It means treating the generation stage as a divergent process, not a convergent one. You are expanding the option space before you start narrowing it.

This is where tools like the ones covered in HubSpot’s breakdown of AI writing tools become relevant. The specific tool matters less than how you are using it. Generation is a creative stage, and the frame you built in Stage 1 is what keeps it commercially grounded.

Stage 3: Evaluate

This is the stage where human judgment is non-negotiable. Evaluation means assessing the generated options against the criteria you defined in the framing stage, plus the tacit knowledge that did not make it into the brief.

Tacit knowledge is the gap that most AI frameworks do not account for. It is the understanding that a particular client will react badly to a certain tone, even if that tone is technically on-brand. It is knowing that a competitor just shifted positioning in a way that makes one of your generated options look derivative. It is recognising that an audience segment is more price-sensitive right now because of something happening in the economy that is not yet in any data set.

I judged the Effie Awards, and one of the things that experience reinforced is how much of what makes marketing effective is not visible in the brief or the output. It is in the judgment calls made along the way by people who understood the commercial context deeply. AI cannot replicate that yet. The evaluation stage is where humans protect the work from being technically correct but commercially wrong.

Stage 4: Integrate

Integration is where collaborative AI produces compounding value, and it is the stage most teams do not reach because they stop at evaluation. Integration means feeding the outcomes of one cycle back into the framing of the next. It means using what you learned from this campaign to improve the brief for the next one. It means building institutional knowledge into your AI process, not just using AI to execute tasks in isolation.

In agency terms, this is the difference between a team that gets better over time and a team that does the same work at the same standard indefinitely. When I grew the agency from 20 to 100 people, the teams that scaled well were the ones that had systems for capturing and applying what they learned. AI makes this faster and more structured, but only if you build the integration stage into your process deliberately.

Moz has a practical look at building AI tools into SEO workflows that illustrates what integration looks like in a specific channel context.

How to Apply This Framework Across Different Marketing Functions

The four-stage model is channel-agnostic, but the emphasis shifts depending on what you are doing.

In content marketing, the frame stage is where you define the audience problem, the search intent, and the angle that differentiates your content from what already exists. The generation stage produces drafts and structural options. The evaluation stage is where editorial judgment determines what is accurate, what is on-brand, and what will actually serve the reader. Moz covers the content side of this well in their piece on using AI tools in content writing.

In paid media, the frame stage is where you define the audience segment, the commercial objective, and the offer. The generation stage produces ad copy variants and creative concepts. The evaluation stage is where you apply your knowledge of what has worked historically and what the data is not capturing. Early in my career, I ran a paid search campaign for a music festival at lastminute.com and generated six figures of revenue in roughly a day from a relatively simple setup. What made it work was not the execution. It was the clarity of the commercial frame: the right audience, the right offer, the right moment. AI can accelerate that process significantly, but it cannot replace the commercial thinking that makes it land.

In SEO and organic visibility, the collaboration model is increasingly important as AI-driven search changes what visibility means. The frame stage needs to account for how AI systems are interpreting and surfacing content, not just how traditional search algorithms rank it. Ahrefs has a useful webinar on improving visibility in large language model environments that is worth reviewing if you are thinking about how this shifts the framing stage.

The Human Roles That Cannot Be Automated

There is a version of the AI conversation that treats human involvement as a temporary inconvenience on the way to full automation. I do not think that is where marketing is heading, and I do not think it would be desirable if it were.

Marketing is fundamentally about understanding what people want and communicating in a way that connects. Both of those things require human judgment, not because AI cannot generate plausible versions of them, but because the standard for “good enough” in marketing is not plausibility. It is commercial effectiveness. And commercial effectiveness requires understanding context that AI systems do not have access to: the client relationship, the competitive moment, the audience’s current state of mind, the business pressure that is shaping the brief.

The human roles that matter most in a collaborative AI model are: strategic framing, commercial evaluation, tacit knowledge application, and accountability. None of those are going away. What is changing is that the execution work between those judgment points can be done faster and at greater scale. That is a genuine productivity gain, but it is not a replacement for the judgment itself.

Predictive analytics and data mining have been part of marketing for a long time, and the principles around human oversight of data-driven processes are not new. MarketingProfs has an older but still relevant piece on data mining techniques in predictive analytics that illustrates how long the tension between automated analysis and human interpretation has existed in this industry.

What Stops Teams From Building a Collaborative Model

Three things, in my experience.

First, speed pressure. Teams are under pressure to produce more content, more campaigns, more output, faster. AI looks like a shortcut, and the temptation is to use it as one. Shortcuts skip the framing stage, and skipping the framing stage produces volume without value. I have seen this pattern repeat across agencies and in-house teams alike. More output does not mean better results. It often means more noise.

Second, unclear ownership. In a collaborative model, someone needs to own each stage. Who is responsible for the frame? Who does the evaluation? Who manages the integration back into the next cycle? When those roles are not defined, the process collapses into whoever has time, which usually means the generation stage gets done and everything else gets skipped.

Third, tool proliferation without process design. Marketing teams are accumulating AI tools faster than they are building the processes to use them well. The tool is not the framework. The framework is how you use the tool, when, and with what human input at each stage. Semrush has a solid overview of what AI marketing actually covers that helps teams map their tool landscape against real use cases.

How to Start Building This Into Your Team’s Process

Start with one workflow, not the whole operation. Pick a content type or a campaign format that your team runs regularly, and map the four stages explicitly for that workflow. Write down what the frame should include, who generates, who evaluates against what criteria, and how the learning gets captured for next time.

Run it for four to six cycles before you assess whether it is working. The first cycle will be slow because you are building the process as you go. By the fourth cycle, the frame template should be faster to complete, the generation stage should produce better options because the frame is clearer, and the evaluation stage should be more consistent because the criteria are written down.

Then scale it to a second workflow. And then a third. Build the collaborative model incrementally rather than trying to redesign everything at once. The teams I have seen get the most from AI are not the ones who launched the biggest transformation programme. They are the ones who built one solid process, proved it worked, and then extended it methodically.

For more on how AI is reshaping marketing strategy and execution, the AI Marketing section of The Marketing Juice covers the commercial and strategic dimensions in depth, from automation investment trends to how agencies are restructuring around these tools.

About the Author

Keith Lacy is a marketing strategist and former agency CEO with 20+ years of experience across agency leadership, performance marketing, and commercial strategy. He writes The Marketing Juice to cut through the noise and share what works.