Predictive Behavioral Analytics: What It Can and Cannot Tell You

Predictive behavioral analytics uses historical data, statistical modeling, and machine learning to forecast how users are likely to behave in the future, whether that is converting, churning, clicking, or abandoning. When it works well, it gives marketing teams a meaningful head start on decisions that would otherwise be reactive. When it is oversold, it becomes expensive infrastructure that produces confident-looking numbers with questionable commercial value.

The distinction matters more than most vendors will admit.

Key Takeaways

- Predictive behavioral analytics forecasts future user actions from historical patterns, but the quality of those forecasts depends entirely on the quality and relevance of the underlying data.

- Most teams underinvest in data hygiene and overinvest in model sophistication, which is the wrong order of priorities.

- Predictive models reflect past behavior in past conditions. When market conditions shift, models trained on old data can actively mislead you.

- The commercial value of predictive analytics comes from acting on its outputs, not from building or buying the models themselves.

- Propensity scoring, churn prediction, and next-best-action models are the three applications with the clearest commercial track record in marketing.

In This Article

- What Predictive Behavioral Analytics Actually Does

- The Three Applications With the Best Commercial Track Record

- Where the Data Actually Comes From

- The Model Drift Problem Nobody Talks About Enough

- How to Integrate Predictive Outputs Into Campaign Decisions

- What Predictive Analytics Cannot Tell You

- Building the Business Case for Predictive Analytics Investment

What Predictive Behavioral Analytics Actually Does

Strip away the vendor language and predictive behavioral analytics does one thing: it looks at patterns in what people have done and uses those patterns to estimate the probability of what they will do next. That is it. There is no magic in the methodology, only math applied to behavioral signals.

The behavioral signals vary by context. In e-commerce, they might include browsing depth, category affinity, time between sessions, and cart activity. In B2B SaaS, they might be login frequency, feature adoption rates, and support ticket volume. In financial services, they might be transaction patterns, product holdings, and digital engagement scores. The signals are different. The underlying logic is the same: past behavior predicts future behavior, within limits.

Those limits are where things get interesting, and where a lot of marketing teams get into trouble.

I spent several years running agency teams that worked with financial services clients, and the sector has been building predictive models longer than most. BCG published useful work on how financial institutions use data analytics to drive commercial outcomes, and the pattern they identified holds across industries: the organizations that get the most value from predictive analytics are the ones that treat it as a decision-support tool, not a decision-replacement tool. The distinction sounds semantic. It is not.

The Three Applications With the Best Commercial Track Record

Not all use cases for predictive behavioral analytics are equally mature or equally useful. Three stand out as having clear commercial applications with a reasonable track record across industries.

Propensity Scoring

Propensity scoring assigns a probability score to each user or prospect based on how likely they are to take a specific action, typically a purchase, a sign-up, or a form completion. It is one of the oldest applications of predictive modeling in marketing and, done well, one of the most commercially useful.

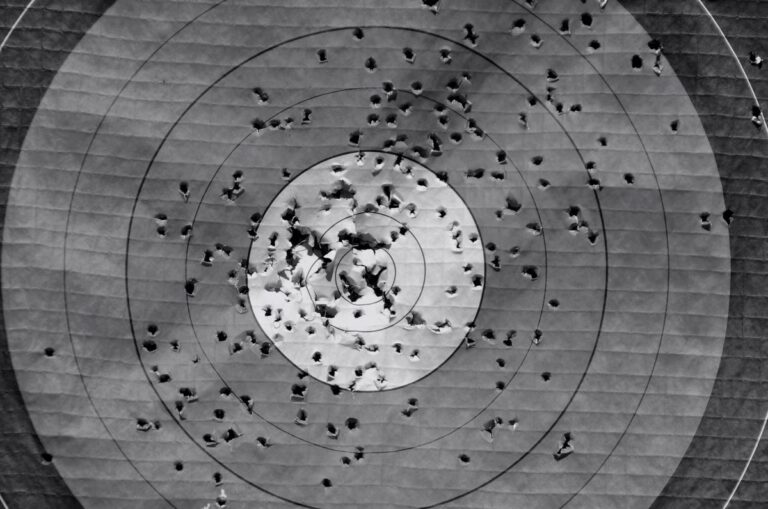

The practical application is straightforward: you use propensity scores to prioritize media spend, sales outreach, or personalization effort toward the users most likely to convert. Instead of treating all prospects equally, you concentrate resources on the ones the model ranks highest. At scale, even a modest improvement in targeting accuracy compounds into meaningful cost efficiency.
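A minimal sketch of score-based prioritization, assuming a simple logistic model. The feature names (`sessions_7d`, `pricing_page_views`, `cart_adds`), the weights, and the bias are hypothetical placeholders. A real model would learn them from historical conversion data. What matters here is the shape of the decision: score everyone, sort, and spend limited capacity on the top of the list.

```python
import math

# Hypothetical weights and bias. A real propensity model would learn these
# from historical conversion data rather than having them set by hand.
WEIGHTS = {"sessions_7d": 0.30, "pricing_page_views": 0.80, "cart_adds": 1.10}
BIAS = -4.0


def propensity(features: dict[str, float]) -> float:
    """Logistic score in (0, 1): higher means more likely to convert."""
    z = BIAS + sum(weight * features.get(name, 0.0) for name, weight in WEIGHTS.items())
    return 1.0 / (1.0 + math.exp(-z))


def prioritize(prospects: dict[str, dict[str, float]], capacity: int) -> list[tuple[str, float]]:
    """Return the `capacity` highest-scoring prospects, best first."""
    scored = [(pid, propensity(feats)) for pid, feats in prospects.items()]
    scored.sort(key=lambda item: item[1], reverse=True)
    return scored[:capacity]


prospects = {
    "a": {"sessions_7d": 1, "pricing_page_views": 0, "cart_adds": 0},
    "b": {"sessions_7d": 5, "pricing_page_views": 3, "cart_adds": 1},
    "c": {"sessions_7d": 3, "pricing_page_views": 1, "cart_adds": 0},
}

# Spend limited outreach capacity (two slots here) on the top of the ranked list.
for pid, score in prioritize(prospects, capacity=2):
    print(pid, round(score, 3))
```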

The trap is over-relying on the score without understanding what is driving it. A high propensity score might reflect genuine purchase intent. It might also reflect the fact that the user recently visited your pricing page, which itself might reflect competitive research rather than buying intent. The score is a signal, not a verdict.
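For a linear or logistic model, one way to see what is driving a score is to break its log-odds into per-feature contributions (weight times value). This sketch uses hypothetical weights and feature names. The decomposition is only this simple for linear models. More complex models need a dedicated attribution method.

```python
# Hypothetical weights for a logistic propensity model (log-odds scale).
WEIGHTS = {"sessions_7d": 0.30, "pricing_page_views": 0.80, "cart_adds": 1.10}


def contributions(features: dict[str, float]) -> dict[str, float]:
    """Each feature's additive contribution to the log-odds: weight * value."""
    return {name: weight * features.get(name, 0.0) for name, weight in WEIGHTS.items()}


def score_drivers(features: dict[str, float]) -> list[tuple[str, float]]:
    """Features ordered from largest to smallest contribution."""
    return sorted(contributions(features).items(), key=lambda item: item[1], reverse=True)


user = {"sessions_7d": 5, "pricing_page_views": 3, "cart_adds": 1}
for name, value in score_drivers(user):
    print(f"{name}: {value:+.2f}")
```

Suppose most of a high score comes from pricing-page views. That is exactly the case described above, where the score might reflect competitive research rather than buying intent, so it deserves a closer look before anyone acts on it.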

Churn Prediction

Churn prediction identifies customers who are showing behavioral patterns associated with disengagement or cancellation before they actually leave. For subscription businesses, this is commercially critical. Retaining an existing customer is almost always cheaper than acquiring a new one, and if you can identify at-risk customers early enough to intervene, you have a real commercial lever.

The behavioral signals that predict churn tend to be consistent across categories: declining login frequency, reduced feature usage, shorter session durations, unsubscribes from product communications, and increased support contact. When several of these appear together in a short window, the model flags the account.
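The "several signals in a short window" logic can be sketched as a simple rule. This is a rule-based stand-in, not a trained model. The signal names, the 14-day window, and the three-signal minimum are all hypothetical. A real churn model would learn which combinations matter and how much weight each carries.

```python
from dataclasses import dataclass

# Hypothetical signal labels mirroring the churn indicators listed above.
CHURN_SIGNALS = {
    "login_decline",
    "feature_usage_drop",
    "shorter_sessions",
    "product_unsubscribe",
    "support_contact_increase",
}


@dataclass
class SignalEvent:
    account_id: str
    signal: str
    day: int  # days since an arbitrary reference date


def flag_at_risk(events: list[SignalEvent], window_days: int = 14, min_signals: int = 3) -> set[str]:
    """Flag accounts with at least `min_signals` distinct churn signals that all
    fall within `window_days` of a starting signal."""
    by_account: dict[str, list[SignalEvent]] = {}
    for event in events:
        if event.signal in CHURN_SIGNALS:
            by_account.setdefault(event.account_id, []).append(event)

    flagged = set()
    for account_id, account_events in by_account.items():
        account_events.sort(key=lambda e: e.day)
        # Try a window starting at each event in turn.
        for i, start in enumerate(account_events):
            distinct = {e.signal for e in account_events[i:] if e.day - start.day < window_days}
            if len(distinct) >= min_signals:
                flagged.add(account_id)
                break
    return flagged


events = [
    SignalEvent("acct1", "login_decline", 1),
    SignalEvent("acct1", "feature_usage_drop", 5),
    SignalEvent("acct1", "support_contact_increase", 10),
    SignalEvent("acct2", "login_decline", 1),
    SignalEvent("acct2", "feature_usage_drop", 20),
    SignalEvent("acct2", "support_contact_increase", 40),
]
print(flag_at_risk(events))
```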

What happens next is where most organizations fall down. The model works. The intervention does not. A generic retention email sent to a churning customer at the moment they have already decided to leave is not a retention strategy. It is theater. The value of churn prediction comes from having a credible, differentiated response ready before the customer reaches the point of no return.

Next-Best-Action Modeling

Next-best-action modeling goes a step further than propensity scoring. Instead of predicting whether someone will convert, it predicts which specific action, offer, or message is most likely to move that individual forward in a commercially useful direction. It is the application most associated with personalization at scale.
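At its simplest, choosing a next best action means estimating how likely this user is to respond to each candidate action and what that response is worth, then picking the action with the highest expected net value. This sketch works under that assumption. The actions, probabilities, values, and costs are hypothetical. In practice, the response probabilities would come from models trained for each action.

```python
from typing import NamedTuple, Optional


class Action(NamedTuple):
    name: str
    response_prob: float  # hypothetical model estimate for this user and action
    value: float          # commercial value if the user responds
    cost: float           # cost of taking the action (discount, media, staff time)


def expected_value(action: Action) -> float:
    """Probability-weighted value minus the cost of acting."""
    return action.response_prob * action.value - action.cost


def next_best_action(actions: list[Action], floor: float = 0.0) -> Optional[str]:
    """Pick the action with the highest expected net value.
    Returns None when nothing clears `floor`, meaning doing nothing is best."""
    if not actions:
        return None
    best = max(actions, key=expected_value)
    return best.name if expected_value(best) > floor else None


candidates = [
    Action("discount_offer", response_prob=0.20, value=40.0, cost=5.0),
    Action("how_to_content", response_prob=0.05, value=100.0, cost=0.5),
    Action("sales_call", response_prob=0.30, value=100.0, cost=30.0),
]
print(next_best_action(candidates))
```

The floor matters. If no action is worth its cost for a given user, the right answer is often to do nothing.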

In practice, it requires more data infrastructure and more organizational alignment than most teams have. You need clean behavioral data, a way to test and validate model outputs, and the operational capability to actually deliver differentiated experiences across channels. Most teams have one or two of those things. Very few have all three.

If you are building your analytics practice from the ground up, there is useful context in the broader marketing analytics hub on how these tools fit into a wider measurement framework. Predictive modeling is one layer of a larger system, not a standalone solution.

Where the Data Actually Comes From

Predictive behavioral analytics is only as good as the behavioral data feeding it. This sounds obvious. It is consistently underestimated.

The most common data sources are on-site behavioral data from analytics platforms, CRM data capturing purchase and engagement history, email and marketing automation engagement data, and, where available, first-party data from logged-in user journeys. Each source has gaps. Combining them creates new gaps at the joins.
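It is worth measuring the gaps at the joins directly before any modeling starts. This is a minimal audit sketch, assuming every source shares an email-style identifier. That is a simplifying assumption: real identity resolution across anonymous sessions, devices, and CRM records is considerably harder. The source names and sample keys are hypothetical.

```python
from typing import Iterable, Optional


def normalize_key(key: Optional[str]) -> Optional[str]:
    """Lowercase and trim an email-style key; treat blanks as missing."""
    if key is None:
        return None
    cleaned = key.strip().lower()
    return cleaned or None


def audit_join(sources: dict[str, Iterable[Optional[str]]]) -> dict[str, dict[str, int]]:
    """For each source, count records with no usable key, distinct usable keys,
    and distinct keys that are absent from at least one other source."""
    usable: dict[str, set[str]] = {}
    missing: dict[str, int] = {}
    for name, keys in sources.items():
        normalized = [normalize_key(k) for k in keys]
        usable[name] = {k for k in normalized if k is not None}
        missing[name] = sum(1 for k in normalized if k is None)

    in_all = set.intersection(*usable.values()) if usable else set()
    return {
        name: {
            "missing_key": missing[name],
            "usable_keys": len(keys),
            "not_in_all_sources": len(keys - in_all),
        }
        for name, keys in usable.items()
    }


report = audit_join({
    "crm": ["Ann@x.com", "bob@x.com", None, "cat@x.com"],
    "analytics": ["ann@x.com", "cat@x.com "],
    "email": ["ann@x.com", "bob@x.com", "cat@x.com", "dan@x.com", "  "],
})
for source, counts in report.items():
    print(source, counts)
```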

When I was growing an agency team from around 20 people to closer to 100, one of the recurring problems we ran into with clients was that their data infrastructure looked more complete than it was. They had Google Analytics. They had a CRM. They had an email platform. On paper, they had everything needed to build a predictive model. In practice, the three systems were not talking to each other in any meaningful way, the CRM data was inconsistently populated, and the analytics implementation had enough tracking gaps to make behavioral analysis unreliable. The model would have been sophisticated. The outputs would have been nonsense.

Tools like Hotjar integrated with Google Analytics can help close some of the gaps between quantitative behavioral data and qualitative intent signals, but they do not solve the underlying data quality problem. That requires someone to actually audit what is being collected, where it is going, and whether it is accurate. Most organizations skip that step because it is unglamorous work.

A useful framing from MarketingProfs on preparing properly for web analytics makes the point that analytics failure is almost always a planning and preparation failure, not a technology failure. That observation is more relevant now than it was when it was written.

The Model Drift Problem Nobody Talks About Enough

Predictive models are trained on historical data. They learn what patterns preceded a given outcome in the past and use those patterns to score future behavior. This works well when the conditions that produced the training data are still the conditions you are operating in. It works poorly when they are not.

Model drift is what happens when the relationship between behavioral signals and outcomes changes over time. A model trained on pre-pandemic e-commerce behavior would have learned patterns that no longer held in 2020. A model trained on behavior during a period of low interest rates might not predict financial product decisions accurately in a high-rate environment. A model trained on behavior before a major competitor entered your market might score prospects based on patterns that no longer reflect how people make decisions in your category.

The problem is that a drifting model does not announce itself. It keeps producing scores. The scores look authoritative. The decisions made on the basis of those scores look data-driven. But the underlying assumptions are stale, and the outputs are gradually becoming less reliable without anyone noticing.

I judged the Effie Awards for a period, and one of the things that struck me reviewing submissions was how often teams presented data-driven campaigns with impressive-looking methodology and then buried the performance results in relative rather than absolute terms. The model was sophisticated. The commercial outcome was modest. The relationship between the two was never examined critically. Predictive analytics can produce the same dynamic at the campaign level: confident inputs, unexamined outputs.

The mitigation is not complicated, but it requires discipline: validate model outputs against actual outcomes on a regular cadence, set a threshold for when a model needs retraining, and treat any significant change in market conditions as a trigger for review. Most teams do not do this because it requires someone to own it, and ownership of model validation tends to fall between data science and marketing, with neither side claiming it.
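This is a minimal sketch of that validation step. It assumes you can join each scored user to whether the predicted outcome actually happened. The sketch checks calibration: within each score band, does the average predicted probability still match the observed rate? The five bands and the 0.10 gap threshold are hypothetical. Choose a threshold that reflects the level of error that would change your decisions.

```python
def calibration_gaps(scores: list[float], outcomes: list[int], n_bands: int = 5) -> list[float]:
    """Absolute gap between mean predicted probability and observed outcome
    rate, for each non-empty score band. Scores are assumed to lie in [0, 1]."""
    bands: list[list[tuple[float, int]]] = [[] for _ in range(n_bands)]
    for score, outcome in zip(scores, outcomes):
        index = min(int(score * n_bands), n_bands - 1)  # a score of 1.0 goes in the top band
        bands[index].append((score, outcome))

    gaps = []
    for members in bands:
        if members:
            predicted = sum(s for s, _ in members) / len(members)
            observed = sum(y for _, y in members) / len(members)
            gaps.append(abs(predicted - observed))
    return gaps


def needs_retraining(scores: list[float], outcomes: list[int],
                     max_gap: float = 0.10, n_bands: int = 5) -> bool:
    """Flag the model for review when any band drifts further than `max_gap`."""
    gaps = calibration_gaps(scores, outcomes, n_bands)
    return bool(gaps) and max(gaps) > max_gap


# A band scored at 0.8 where only 2 in 10 users actually converted.
print(needs_retraining([0.8] * 10, [1] * 2 + [0] * 8))
```

You would run this on each new batch of outcomes, for example monthly. Bands with only a handful of users will be noisy, so in practice you would require a minimum count per band before trusting its gap.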

How to Integrate Predictive Outputs Into Campaign Decisions

The gap between having a predictive model and getting commercial value from it is almost always an activation gap, not a modeling gap. The model tells you something. The question is what you do with it.

The most practical integration points are audience segmentation for paid media, trigger-based automation in CRM, and personalization logic for on-site or in-app experiences. Each of these requires the predictive output to be translated into something an activation system can act on, which typically means a score or a segment flag that can be passed between platforms.

For paid media, propensity scores can be used to build custom audiences or to adjust bidding by segment. High-propensity users get more aggressive bidding or more direct creative. Low-propensity users get either exclusion or upper-funnel messaging. The logic is simple. The execution requires clean data pipelines between your analytics stack and your media platforms, which is where most teams hit friction.
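Translating a score into something a media platform can use is often just a lookup. This sketch assumes scores in [0, 1]. The tier boundaries, segment names, and bid multipliers are hypothetical. The actual upload of audience lists or bid adjustments would go through each platform's own tools, which are not shown here.

```python
from typing import Optional

# Hypothetical tiers, checked from the top: (minimum score, segment, bid multiplier).
# A multiplier of None means the segment is excluded from the campaign.
TIERS: list[tuple[float, str, Optional[float]]] = [
    (0.60, "high_intent", 1.30),
    (0.30, "medium_intent", 1.00),
    (0.10, "upper_funnel", 0.70),
    (0.00, "excluded", None),
]


def segment_for(score: float) -> tuple[str, Optional[float]]:
    """Map a propensity score to a segment label and bid multiplier."""
    for floor, segment, multiplier in TIERS:
        if score >= floor:
            return segment, multiplier
    return "excluded", None  # negative scores should not occur; exclude to be safe


def build_audiences(scores: dict[str, float]) -> dict[str, list[str]]:
    """Group user IDs by segment, ready to export as audience lists."""
    audiences: dict[str, list[str]] = {}
    for user_id, score in scores.items():
        segment, _ = segment_for(score)
        audiences.setdefault(segment, []).append(user_id)
    return audiences


print(build_audiences({"u1": 0.75, "u2": 0.35, "u3": 0.15, "u4": 0.02}))
```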

For CRM automation, churn scores or engagement scores can trigger workflows based on threshold values. A customer whose engagement score drops below a defined level enters a re-engagement sequence. A prospect whose propensity score crosses a threshold gets routed to sales. The model does not make the decision. It sets the conditions under which a pre-defined decision gets made.
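This sketches the threshold logic, assuming scores are recomputed on a schedule and the previous run's values are kept. The thresholds and workflow names are hypothetical. The logic fires on the crossing rather than on every run where a score sits past the threshold. That way, a customer enters a sequence once instead of on every scoring run. Whether that is the right behavior depends on how your automation platform handles re-entry.

```python
# Hypothetical thresholds; set these from how scores relate to real outcomes.
REENGAGE_BELOW = 0.40      # engagement score floor
SALES_AT_OR_ABOVE = 0.70   # propensity score for sales routing


def crossed_below(previous: float, current: float, threshold: float) -> bool:
    """True only on the run where the score first falls below the threshold."""
    return previous >= threshold > current


def crossed_above(previous: float, current: float, threshold: float) -> bool:
    """True only on the run where the score first reaches the threshold."""
    return previous < threshold <= current


def workflows_to_trigger(previous: dict[str, float], current: dict[str, float]) -> list[str]:
    """Compare the previous and current scoring runs; return workflows to start."""
    triggered = []
    if crossed_below(previous["engagement"], current["engagement"], REENGAGE_BELOW):
        triggered.append("re_engagement_sequence")
    if crossed_above(previous["propensity"], current["propensity"], SALES_AT_OR_ABOVE):
        triggered.append("route_to_sales")
    return triggered


print(workflows_to_trigger({"engagement": 0.5, "propensity": 0.6},
                           {"engagement": 0.3, "propensity": 0.75}))
```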

For on-site personalization, next-best-action outputs can inform which content, offers, or calls to action a user sees. This is the most technically demanding integration and the one where A/B testing integrated with analytics becomes essential. You need a way to validate that the personalized experience is actually performing better than the default, not just that the model predicted it would.
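One common way to run that check is a two-proportion z-test on conversion counts from a randomized split between the personalized and default experiences. This sketch uses only the Python standard library. The counts in the example are hypothetical.

```python
import math
from statistics import NormalDist


def two_proportion_z_test(conv_a: int, n_a: int, conv_b: int, n_b: int) -> tuple[float, float, float]:
    """Compare conversion rates of A (default) and B (personalized).

    Returns (absolute lift of B over A, z statistic, two-sided p-value),
    using the pooled-variance form of the test."""
    rate_a = conv_a / n_a
    rate_b = conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        raise ValueError("No variation in outcomes; the test is undefined.")
    z = (rate_b - rate_a) / se
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return rate_b - rate_a, z, p_value


lift, z, p = two_proportion_z_test(conv_a=500, n_a=10_000, conv_b=600, n_b=10_000)
print(f"lift={lift:.3%} z={z:.2f} p={p:.4f}")
```

This assumes a sample size fixed before the test started. Checking repeatedly and stopping as soon as the p-value dips below 0.05 inflates the false-positive rate.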

Unbounce has written usefully about making marketing analytics actionable rather than just informative, and the principle applies directly here. A predictive model that produces outputs nobody acts on is an analytics cost, not an analytics asset.

What Predictive Analytics Cannot Tell You

Predictive behavioral analytics often gets oversold as a way to understand customers. It is not one. It is a way to identify statistical patterns in how customers have behaved. Those are related but not the same thing.

Predictive models cannot tell you why someone behaves the way they do. They can tell you that users who visit the pricing page three times in a week are more likely to convert. They cannot tell you whether those users are close to buying, doing competitive research, or trying to figure out how to cancel. The model sees the signal. It cannot interpret the intent behind it.

They also cannot tell you how customers will respond to something they have never encountered before. If you launch a new product category, a new pricing model, or a new channel, your model has no training data for that scenario. It will extrapolate from the nearest available patterns, which may or may not be relevant.

Early in my career, I learned to code because I needed a website built and there was no budget to hire someone. That experience taught me something that has stayed with me across two decades of working with data and technology: understanding how a tool works at a mechanical level changes how you use it. When you understand that a predictive model is doing pattern matching on historical data, you stop treating its outputs as insight and start treating them as hypotheses. That shift in framing changes every downstream decision.

The same principle applies to integrating behavioral data with qualitative tools. Combining session recording and heatmap data with analytics does not make your predictive model more accurate, but it can help you understand whether the patterns the model is identifying reflect genuine behavioral intent or artifacts of your UX. That context is valuable precisely because the model cannot provide it.

Building the Business Case for Predictive Analytics Investment

If you are trying to make the case for predictive analytics investment internally, the framing matters. Pitching it as a capability play rarely works with commercially minded stakeholders. Pitching it as a cost efficiency or revenue protection play usually does.

The most credible business cases I have seen are built around one of three things: reducing wasted media spend by improving audience targeting, reducing churn by enabling earlier intervention, or increasing conversion rate by improving the relevance of what customers see. Each of these has a defensible financial model attached to it. Each requires you to be honest about the assumptions in that model.

The weakest business cases are the ones built on vendor benchmarks. Every analytics vendor has a case study showing a 30% improvement in conversion rate or a 25% reduction in churn. Those numbers come from the best implementations, in the best conditions, often with significant vendor support during the pilot phase. They are not a reliable guide to what you will achieve.

A more honest approach is to identify one specific decision that predictive analytics would improve, model the commercial value of improving that decision by a modest amount, and use that as the baseline for the investment case. If the math works on a conservative assumption, the investment is worth exploring. If it only works on an optimistic assumption, you should be cautious.
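The arithmetic can be this simple. This sketch uses churn reduction as the decision being improved. Every input is hypothetical: the customer count, churn rate, revenue per customer, investment cost, and both reduction scenarios. The sketch also deliberately ignores margin and intervention costs, which a real case should include.

```python
def annual_retained_revenue(customers: int, annual_churn_rate: float,
                            revenue_per_customer: float, relative_reduction: float) -> float:
    """Revenue kept per year if churn falls by `relative_reduction`
    (0.05 means 5% fewer customers churn than today)."""
    churners = customers * annual_churn_rate
    return churners * relative_reduction * revenue_per_customer


# Hypothetical inputs for illustration only.
BASE = dict(customers=20_000, annual_churn_rate=0.20, revenue_per_customer=300.0)
INVESTMENT = 150_000.0  # hypothetical annual cost of the predictive analytics investment

for label, reduction in [("conservative", 0.05), ("optimistic", 0.25)]:
    value = annual_retained_revenue(relative_reduction=reduction, **BASE)
    verdict = "worth exploring" if value >= INVESTMENT else "does not cover the cost"
    print(f"{label}: {value:,.0f} retained vs {INVESTMENT:,.0f} cost -> {verdict}")
```

In this illustration the case only works on the optimistic assumption. That is the situation the paragraph above suggests treating with caution.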

When I was at lastminute.com, I ran a paid search campaign for a music festival that generated six figures of revenue within roughly a day from a relatively simple setup. The lesson I took from that experience was not that complexity is bad. It was that commercial clarity about what you are trying to achieve matters more than methodological sophistication. The same principle applies to predictive analytics. Know what decision you are trying to improve. Build toward that. Evaluate against that.

For more on building a measurement framework that connects analytics to commercial outcomes, the marketing analytics section of The Marketing Juice covers the broader landscape, including how predictive tools fit alongside attribution, testing, and reporting infrastructure.

Predictive behavioral analytics is a genuinely useful capability when it is applied to the right problems with realistic expectations and honest validation. It is not a shortcut to understanding customers, and it is not a substitute for commercial judgment. Treat it as one input among several, validate its outputs rigorously, and build activation workflows that actually use what it produces. That is where the value is.

About the Author

Keith Lacy is a marketing strategist and former agency CEO with 20+ years of experience across agency leadership, performance marketing, and commercial strategy. He writes The Marketing Juice to cut through the noise and share what works.