Ad Engagement Tracking: The Features That Connect to Revenue

The features worth caring about in ad engagement tracking are the ones that connect clicks and views to closed revenue, not the ones that make dashboards look impressive. Multi-touch attribution, revenue-linked conversion events, and cohort-level reporting are the tools that separate teams who understand their advertising from teams who are guessing with confidence.

Most platforms will show you engagement. Far fewer will show you what that engagement was worth.

Key Takeaways

- Engagement metrics only matter when they are mapped to revenue outcomes: clicks and impressions are inputs, not results.

- Multi-touch attribution models give you a more honest picture of how ad spend contributes to revenue across a buying experience, but no model is perfect and all require interpretation.

- Revenue-linked conversion events, set up with actual deal or transaction data, are more valuable than proxy metrics like form fills or page visits.

- Cohort analysis reveals how ad engagement drives revenue over time, which is critical for businesses with longer sales cycles or repeat purchase behaviour.

- The biggest measurement mistake is optimising toward the metric that is easiest to track rather than the one closest to commercial value.

In This Article

- Why Most Ad Tracking Stops Short of Revenue

- Multi-Touch Attribution: The Feature That Changes How You Read Performance

- Revenue-Linked Conversion Events: The Setup Most Teams Skip

- Cohort Analysis: Seeing Revenue Impact Over Time

- Incrementality Testing: Measuring What Advertising Actually Caused

- CRM Integration and Offline Conversion Tracking

- View-Through Attribution: Useful Signal, Dangerous Default

- Revenue-Per-Visitor and Engagement Quality Scoring

- The Reporting Stack That Connects It All

Why Most Ad Tracking Stops Short of Revenue

I spent years watching marketing teams celebrate metrics that had no relationship to business performance. Click-through rates that looked healthy while revenue flatlined. Engagement scores that climbed while the sales team complained about lead quality. Cost-per-click improvements that masked the fact that the traffic being attracted was never going to buy anything.

The problem is structural. Most ad platforms are built to show you what happened inside the platform. They are very good at that. Google will tell you someone clicked your ad. Meta will tell you someone watched 75% of your video. LinkedIn will tell you someone engaged with your sponsored content. What none of them will tell you, by default, is whether that person became a customer.

Closing that gap requires deliberate setup, the right features, and a willingness to live with imperfect data rather than precise-looking data that measures the wrong thing.

If you are thinking about how ad tracking fits into a broader commercial strategy, the go-to-market and growth strategy work on this site covers the wider picture of how measurement connects to growth decisions.

Multi-Touch Attribution: The Feature That Changes How You Read Performance

Last-click attribution is still the default in many businesses, and it is still wrong in most of them. It tells you which ad someone clicked immediately before converting. It tells you nothing about what influenced them before that click.

When I was running paid search at scale across multiple verticals, the last-click model consistently over-credited branded search terms and direct traffic, and under-credited the upper-funnel display and video activity that had built the brand awareness in the first place. The result was predictable: budget kept shifting toward branded terms because they looked efficient, while the campaigns doing the harder work of creating demand got cut.

Multi-touch attribution models, whether data-driven, linear, time-decay, or position-based, distribute credit across touchpoints in a buying experience. No model is perfect. Data-driven attribution requires volume to be statistically meaningful. Linear models treat a brand awareness impression as equivalent to a bottom-funnel click, which is a stretch. Position-based models make assumptions about which touchpoints matter most that may not reflect your actual customer behaviour.
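To make the difference between these models concrete, here is a minimal sketch of how rule-based models split credit for a single conversion. The journey, the deal value, the 7-day half-life and the 40/20/40 position split are all illustrative assumptions, not settings from any particular platform, and data-driven attribution is left out because it needs real conversion volume rather than a fixed rule.

```python
from collections import defaultdict

# Hypothetical journey, oldest touch first: (channel, days before conversion).
JOURNEY = [
    ("display", 14),
    ("video", 7),
    ("generic_search", 2),
    ("branded_search", 0),
]
REVENUE = 1000.0  # illustrative deal value


def last_click_weights(journey):
    """All credit to the final touch before conversion."""
    return [0.0] * (len(journey) - 1) + [1.0]


def linear_weights(journey):
    """Equal credit to every touch."""
    return [1.0 / len(journey)] * len(journey)


def time_decay_weights(journey, half_life_days=7.0):
    """Credit halves for every half_life_days between touch and conversion."""
    raw = [0.5 ** (days / half_life_days) for _, days in journey]
    total = sum(raw)
    return [w / total for w in raw]


def position_based_weights(journey, first=0.4, last=0.4):
    """Fixed shares to first and last touch; the rest split across the middle."""
    n = len(journey)
    if n == 1:
        return [1.0]
    if n == 2:
        return [0.5, 0.5]
    middle = (1.0 - first - last) / (n - 2)
    return [first] + [middle] * (n - 2) + [last]


def credit_by_channel(journey, weights, revenue):
    """Turn per-touch weights into revenue credit per channel."""
    credit = defaultdict(float)
    for (channel, _), weight in zip(journey, weights):
        credit[channel] += weight * revenue
    return dict(credit)


MODELS = {
    "last_click": last_click_weights,
    "linear": linear_weights,
    "time_decay": time_decay_weights,
    "position_based": position_based_weights,
}

# Side-by-side comparison of the same journey under each model.
for name, model in MODELS.items():
    credit = credit_by_channel(JOURNEY, model(JOURNEY), REVENUE)
    print(name, {channel: round(value, 2) for channel, value in credit.items()})
```

Running the same journey through each model side by side is the point: the display and video touches receive nothing under last-click and a meaningful share under every other model.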

The value is not in finding the objectively correct model. The value is in moving from a single, distorted view of performance to a more honest approximation of how your advertising is contributing to revenue across the full experience. That shift in perspective changes budget allocation decisions in ways that last-click attribution simply cannot support.

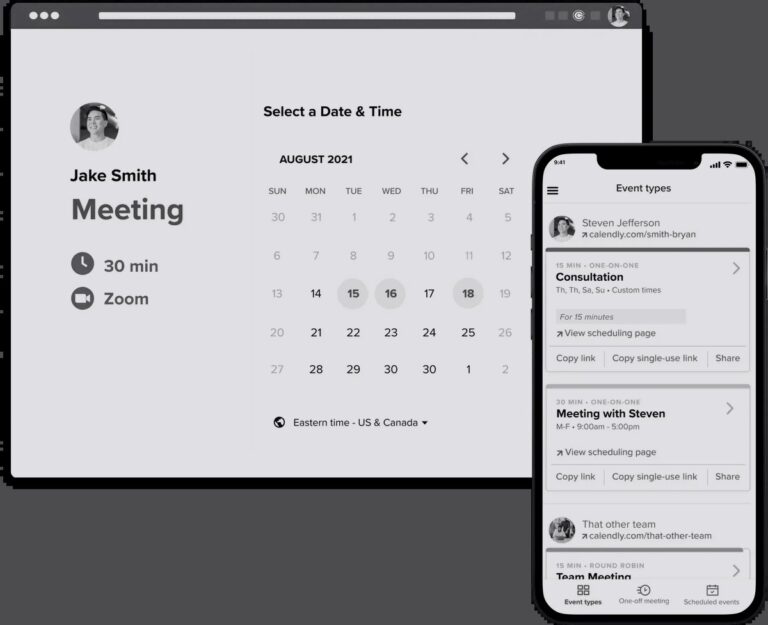

Features to look for: the ability to compare attribution models side by side, custom lookback windows that match your actual sales cycle, and the ability to import offline conversion data so that deals closed by a sales team can be credited back to the ad touchpoints that influenced them.

Revenue-Linked Conversion Events: The Setup Most Teams Skip

A conversion event is only as useful as what it represents. A form fill is not revenue. A demo request is not revenue. A free trial signup is not revenue. These are signals, and useful ones, but optimising your campaigns toward them without connecting them to actual commercial outcomes is how you end up with a full pipeline of people who never buy.

The feature that makes the difference here is the ability to pass revenue values back to your ad platforms, either at the point of conversion or after the fact when a deal closes. In e-commerce this is relatively straightforward: transaction values are passed at checkout. In B2B or high-consideration categories it is more complex, but it is not optional if you want accurate measurement.

At lastminute.com, one of the things that made paid search genuinely exciting was the directness of the feedback loop. A campaign went live, bookings came in, and revenue was visible within hours. That kind of immediate signal is rare, and it spoiled me slightly. Most businesses have a longer gap between ad engagement and closed revenue, which makes the setup of revenue-linked events more important, not less.

The practical setup involves integrating your CRM or e-commerce platform with your ad accounts, defining which events carry revenue signal (not just any conversion), and establishing a consistent methodology for how revenue is attributed when multiple campaigns touched a single buyer. This is not glamorous work, but it is the foundation that makes everything else meaningful.

Vidyard’s research into pipeline and revenue potential for go-to-market teams highlights how much commercial value remains invisible when teams lack the infrastructure to connect engagement signals to downstream revenue outcomes. The gap between engagement and recognised revenue is a structural problem, not a data problem.

Cohort Analysis: Seeing Revenue Impact Over Time

Cohort analysis is underused in paid advertising, and the reason is usually that it requires patience. You have to wait for revenue to materialise before you can measure it, which cuts against the instinct to optimise in real time.

But for businesses with longer sales cycles, subscription models, or repeat purchase behaviour, cohort analysis is one of the most revealing features available. It groups customers by the period in which they were acquired through advertising and tracks their revenue contribution over time. This tells you not just whether a campaign drove conversions, but whether those conversions were worth having.

I have seen businesses where the campaigns that looked cheapest on a cost-per-acquisition basis were acquiring customers who churned quickly or spent less. The campaigns with higher acquisition costs were bringing in customers with significantly better lifetime value. Without cohort analysis, you would cut the wrong campaigns every time.

The feature to look for is the ability to segment cohorts by acquisition source, campaign, or ad set, and then track revenue metrics for those cohorts over a defined period. Some platforms offer this natively. Others require you to export data and build the analysis in a BI tool. Either way, it is worth the effort.
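If you end up building this yourself, the core calculation is small. The sketch below assumes you can export two tables: customers with their acquiring campaign, acquisition date and acquisition cost, and orders with a customer ID, date and amount. All field names and figures are hypothetical.

```python
from collections import defaultdict
from datetime import date

# Hypothetical exports: acquired customers and their subsequent orders.
CUSTOMERS = [
    {"id": "c1", "campaign": "cheap_cpa", "acquired": date(2024, 1, 10), "cost": 20.0},
    {"id": "c2", "campaign": "cheap_cpa", "acquired": date(2024, 1, 22), "cost": 20.0},
    {"id": "c3", "campaign": "premium", "acquired": date(2024, 1, 5), "cost": 60.0},
    {"id": "c4", "campaign": "premium", "acquired": date(2024, 1, 18), "cost": 60.0},
]
ORDERS = [
    ("c1", date(2024, 1, 10), 30.0),
    ("c2", date(2024, 1, 22), 30.0),
    ("c3", date(2024, 1, 5), 50.0),
    ("c3", date(2024, 2, 9), 50.0),
    ("c3", date(2024, 3, 12), 50.0),
    ("c4", date(2024, 1, 18), 50.0),
    ("c4", date(2024, 3, 2), 50.0),
]


def months_between(start, end):
    """Whole calendar months from acquisition to order."""
    return (end.year - start.year) * 12 + (end.month - start.month)


def cohort_key(customer):
    """Cohort = acquiring campaign plus acquisition month."""
    return (customer["campaign"], customer["acquired"].strftime("%Y-%m"))


def cohort_revenue(customers, orders):
    """Revenue per cohort, bucketed by months since acquisition."""
    by_id = {c["id"]: c for c in customers}
    table = defaultdict(lambda: defaultdict(float))
    for customer_id, order_date, amount in orders:
        customer = by_id[customer_id]
        offset = months_between(customer["acquired"], order_date)
        table[cohort_key(customer)][offset] += amount
    return table


def cumulative_return(customers, orders):
    """Cumulative revenue divided by acquisition spend, per cohort and month.

    Months with no orders are simply absent from the result.
    """
    spend = defaultdict(float)
    for customer in customers:
        spend[cohort_key(customer)] += customer["cost"]
    result = {}
    for cohort, months in cohort_revenue(customers, orders).items():
        running, result[cohort] = 0.0, {}
        for offset in sorted(months):
            running += months[offset]
            result[cohort][offset] = running / spend[cohort]
    return result


for cohort, ratios in sorted(cumulative_return(CUSTOMERS, ORDERS).items()):
    print(cohort, {month: round(ratio, 2) for month, ratio in ratios.items()})
```

In this made-up data the cheaper campaign looks better in month zero and the more expensive one overtakes it by month two, which is exactly the pattern a cost-per-acquisition report hides.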

Incrementality Testing: Measuring What Advertising Actually Caused

Attribution tells you which ads were present in a buyer’s experience. Incrementality testing tells you whether those ads caused the purchase, or whether the buyer would have converted anyway.

This is a meaningful distinction. Retargeting campaigns, for example, often show excellent attributed performance because they reach people who have already shown intent. But a significant portion of those people would have converted without seeing the retargeting ad. The ad is capturing credit for a decision that was already made. Incrementality testing, through holdout groups or geo-based experiments, isolates the causal effect of advertising from the correlation that attribution models can confuse with causation.
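The arithmetic behind a holdout test is simple enough to sketch. The example below assumes a randomly assigned control group that was withheld from the retargeting ads; the group sizes and conversion counts are invented, and the two-proportion z-score is one common way to sanity-check the result, not the method any specific platform uses.

```python
import math


def incrementality(exposed_users, exposed_conversions,
                   control_users, control_conversions):
    """Estimate incremental conversions from an exposed group and a holdout."""
    rate_exposed = exposed_conversions / exposed_users
    rate_control = control_conversions / control_users

    # Conversions the exposed group would likely have produced with no ads.
    baseline = rate_control * exposed_users
    incremental = exposed_conversions - baseline

    # Relative lift over the control conversion rate.
    lift = (rate_exposed - rate_control) / rate_control

    # Two-proportion z-score as a rough check that the gap is not noise.
    pooled = (exposed_conversions + control_conversions) / (exposed_users + control_users)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / exposed_users + 1 / control_users))
    z_score = (rate_exposed - rate_control) / std_err

    return {
        "attributed_conversions": exposed_conversions,
        "incremental_conversions": incremental,
        "incremental_share": incremental / exposed_conversions,
        "lift": lift,
        "z_score": z_score,
    }


# Hypothetical retargeting test: 100,000 exposed users, 10,000 held out.
result = incrementality(
    exposed_users=100_000, exposed_conversions=2_200,
    control_users=10_000, control_conversions=200,
)
for key, value in result.items():
    print(f"{key}: {value:.3f}")
```

With these invented numbers the platform would report 2,200 attributed conversions, while the holdout suggests roughly 200 of them were caused by the ads. The z-score is there as a reminder that a small holdout can leave even that estimate uncertain.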

When I was judging the Effie Awards, one of the things that separated the strongest entries from the rest was precisely this kind of rigour. The teams that could demonstrate incrementality (that their campaign had caused commercial outcomes rather than simply been present when outcomes occurred) were consistently more credible and more persuasive. The industry talks about effectiveness, but the evidence standard for it is higher than most campaigns meet.

Features to look for: native holdout testing within your ad platform, the ability to define control and exposed groups at a meaningful scale, and reporting that surfaces the incremental revenue lift rather than just the attributed conversion volume. Meta’s Conversion Lift and Google’s Conversion Lift experiments are the most accessible versions of this for most advertisers.

BCG’s work on commercial transformation and go-to-market strategy makes a related point: the businesses that build durable commercial advantage are the ones that invest in understanding what is actually driving growth, not just what appears to be driving it.

CRM Integration and Offline Conversion Tracking

For any business where revenue does not close inside a web browser, offline conversion tracking is not optional. It is the mechanism that connects what happens in the real world (a signed contract, a phone sale, an in-store purchase) back to the ad engagement that contributed to it.

The setup requires passing hashed customer identifiers or click IDs from your ad platforms into your CRM, and then uploading conversion data back to the platform when a deal closes. This is technically straightforward in most modern stacks. The challenge is usually organisational: getting the sales team to record the right data, ensuring the CRM fields are mapped correctly, and maintaining the integration as systems change.
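As a rough sketch of the data preparation step, the example below turns closed-won CRM deals into rows ready for upload. Normalising an email address (trimming and lowercasing) before hashing it with SHA-256 is the usual convention for hashed identifiers, but the CRM field names, the stage label and the output column names here are hypothetical, so check your ad platform's documentation for the exact schema it expects.

```python
import hashlib

# Hypothetical CRM export. click_id is whatever ad click identifier was
# captured on the original form submission, if any.
CRM_DEALS = [
    {"email": " Jane@Example.com ", "click_id": "abc123", "stage": "closed_won",
     "value": 12000.0, "closed_at": "2024-03-14T10:00:00+00:00"},
    {"email": "bob@example.com", "click_id": None, "stage": "closed_won",
     "value": 4500.0, "closed_at": "2024-03-20T15:30:00+00:00"},
    {"email": "sam@example.com", "click_id": "def456", "stage": "open",
     "value": 8000.0, "closed_at": None},
]


def normalize_and_hash(email):
    """Trim, lowercase, then SHA-256 so the raw address never leaves the CRM."""
    normalized = email.strip().lower()
    return hashlib.sha256(normalized.encode("utf-8")).hexdigest()


def build_upload_rows(deals):
    """Keep only closed revenue and shape it for an offline conversion upload."""
    rows = []
    for deal in deals:
        if deal["stage"] != "closed_won":
            continue  # leads and open deals are not revenue
        rows.append({
            "click_id": deal["click_id"],  # may be None; hashed email is the fallback
            "hashed_email": normalize_and_hash(deal["email"]),
            "conversion_value": deal["value"],
            "conversion_time": deal["closed_at"],  # when the deal closed, not the click
        })
    return rows


for row in build_upload_rows(CRM_DEALS):
    print(row)
```

The filter on deal stage is the important design choice: only closed revenue goes back to the platform, so the optimisation signal is the outcome rather than the lead.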

The payoff is substantial. When your ad platform can see which campaigns, audiences, and creatives are generating actual closed revenue rather than just leads, its optimisation algorithms become significantly more useful. You are training the machine on the outcome that matters, not a proxy for it.

Forrester’s analysis of how agile scaling affects go-to-market execution touches on a related challenge: as organisations grow, the distance between marketing activity and commercial outcomes tends to increase, and the measurement infrastructure rarely keeps pace. Offline conversion tracking is one of the more practical ways to close that gap.

Features to look for: native CRM integrations within your ad platform, support for enhanced conversions or advanced matching to improve data quality, and the ability to import conversion data with a delay that reflects your actual sales cycle rather than assuming all conversions happen at the moment of click.

View-Through Attribution: Useful Signal, Dangerous Default

View-through attribution credits a conversion to an ad that was shown but not clicked. The logic is that someone saw your ad, did not click, but later converted through another channel. The ad influenced the decision.

This is sometimes true. It is also one of the most abused features in digital advertising. A generous view-through window, such as 30 days, on a display or video campaign will claim credit for an enormous number of conversions that had nothing to do with the ad. The person saw the ad, ignored it, and converted because of something else entirely. The platform still takes credit.
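A quick way to see how much the window length drives the number is to recount the same conversions under different windows. The sketch below uses invented impression and conversion logs keyed by a hypothetical user ID; real platforms apply their own matching and deduplication rules on top of this.

```python
from datetime import datetime, timedelta

START = datetime(2024, 3, 1)

# Hypothetical logs: when each user was served the ad, and when users converted.
IMPRESSIONS = {
    "u1": [START],
    "u2": [START],
    "u3": [START],
}
CONVERSIONS = [
    ("u1", START + timedelta(hours=12)),
    ("u2", START + timedelta(days=5)),
    ("u3", START + timedelta(days=20)),
    ("u4", START + timedelta(days=1)),  # converted without ever seeing the ad
]


def view_through_conversions(impressions, conversions, window_days):
    """Count conversions preceded by an impression within the window."""
    window = timedelta(days=window_days)
    credited = 0
    for user, converted_at in conversions:
        seen_times = impressions.get(user, [])
        if any(timedelta(0) <= converted_at - seen <= window for seen in seen_times):
            credited += 1
    return credited


for days in (1, 7, 30):
    count = view_through_conversions(IMPRESSIONS, CONVERSIONS, days)
    print(f"{days}-day window: {count} view-through conversions")
```

Nothing about the advertising changes between the three runs. Only the window does, which is why the window should be a deliberate choice rather than whatever the campaign was created with.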

The feature itself is not the problem. The problem is using it without understanding what you are measuring. View-through attribution is most defensible for upper-funnel brand campaigns where the goal is awareness and the conversion event is genuinely downstream. It is least defensible for retargeting campaigns where the audience has already demonstrated intent and the view-through window is set generously.

The right approach is to treat view-through data as one signal among several, compare it against your incrementality testing, and be honest about the fact that correlation between ad exposure and conversion is not the same as causation. Vidyard’s analysis of why go-to-market execution feels harder than it used to points to measurement complexity as one of the reasons. View-through attribution, misconfigured, adds complexity without adding clarity.

Revenue-Per-Visitor and Engagement Quality Scoring

Not all engagement is equal, and most ad platforms do not make that distinction by default. Someone who clicks an ad, reads three pages, watches a product video, and returns two days later is not the same as someone who clicks, bounces in four seconds, and never comes back. But both show up as clicks in your campaign report.

Revenue-per-visitor is a metric worth calculating at the campaign level. It takes your total revenue from a campaign’s traffic and divides it by the number of visitors that campaign sent. This gives you a commercial value for each visitor, which is a more useful optimisation signal than cost-per-click or even cost-per-conversion when conversion quality varies.
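As a worked example of the calculation, the sketch below compares two invented campaigns. The campaign names and figures are illustrative only; the margin per visitor is simply revenue per visitor minus cost per visitor, added here to make the comparison with cost-per-click explicit.

```python
# Hypothetical campaign totals for the same reporting period.
CAMPAIGNS = {
    "broad_prospecting": {"visitors": 10_000, "cost": 2_000.0, "revenue": 5_000.0},
    "niche_intent": {"visitors": 4_000, "cost": 2_400.0, "revenue": 6_000.0},
}


def visitor_economics(stats):
    """Revenue, cost and margin expressed per visitor sent by the campaign."""
    revenue_per_visitor = stats["revenue"] / stats["visitors"]
    cost_per_visitor = stats["cost"] / stats["visitors"]
    return {
        "cost_per_visitor": cost_per_visitor,
        "revenue_per_visitor": revenue_per_visitor,
        "margin_per_visitor": revenue_per_visitor - cost_per_visitor,
    }


for name, stats in CAMPAIGNS.items():
    metrics = visitor_economics(stats)
    print(name, {key: round(value, 2) for key, value in metrics.items()})
```

On cost per visitor alone the broad campaign looks three times more efficient. On revenue per visitor the niche campaign is worth three times as much, which is the comparison that should drive the budget decision.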

Engagement quality scoring goes a step further. By combining behavioural signals (time on site, pages per session, scroll depth, video completion, return visits) into a composite score, you can segment your ad-driven traffic by quality and understand which campaigns are attracting buyers versus browsers. Some analytics platforms offer this natively. Others require custom event tracking and a bit of calculation.
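The "bit of calculation" can be as simple as a capped, weighted sum. In the sketch below the weights, the caps and the session field names are all assumptions chosen for illustration; in practice you would tune them against which sessions actually went on to generate revenue.

```python
# Illustrative weights (summing to 1.0) and caps at which a signal counts as "full marks".
WEIGHTS = {
    "time_on_site_sec": 0.25,
    "pages": 0.20,
    "scroll_depth": 0.15,      # fraction of page scrolled, 0 to 1
    "video_completion": 0.20,  # fraction of video watched, 0 to 1
    "return_visit": 0.20,      # 1 if the visitor came back, else 0
}
CAPS = {
    "time_on_site_sec": 180.0,
    "pages": 5.0,
    "scroll_depth": 1.0,
    "video_completion": 1.0,
    "return_visit": 1.0,
}


def quality_score(session):
    """Composite 0-100 score from capped, weighted behavioural signals."""
    score = 0.0
    for signal, weight in WEIGHTS.items():
        normalized = min(session.get(signal, 0.0) / CAPS[signal], 1.0)
        score += weight * normalized
    return round(100 * score, 1)


# The two visitors described earlier: one engaged, one bounce.
engaged = {"time_on_site_sec": 240, "pages": 3, "scroll_depth": 0.9,
           "video_completion": 1.0, "return_visit": 1}
bounce = {"time_on_site_sec": 4, "pages": 1, "scroll_depth": 0.1,
          "video_completion": 0.0, "return_visit": 0}

print("engaged:", quality_score(engaged))
print("bounce:", quality_score(bounce))
```

Averaging this score by campaign or ad set gives you a quality dimension to read alongside click volume, so two campaigns with identical click counts no longer look identical.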

The practical value is in the optimisation decisions it enables. When I grew iProspect from a team of 20 to over 100 people, one of the consistent themes in the accounts that performed best was a willingness to look beyond the headline conversion metric and ask whether the conversions being generated were actually worth having. Revenue-per-visitor and engagement quality scoring are the features that make that question answerable.

The Reporting Stack That Connects It All

Individual features matter less than how they connect. A multi-touch attribution model in your ad platform, revenue-linked conversion events in your CRM, cohort analysis in your BI tool, and incrementality testing running in the background are all more valuable together than any one of them in isolation.

The reporting stack that connects ad engagement to revenue impact typically involves three layers. The ad platform layer captures engagement and attributed conversions. The CRM layer holds the ground truth on revenue, deal stage, and customer lifetime value. The BI layer, whether that is Looker, Power BI, or a custom build, joins these data sources and surfaces the commercial picture that neither platform can show alone.
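In miniature, the join the BI layer performs looks something like the sketch below. It assumes each closed deal in the CRM already carries the campaign it was attributed to, which is itself the output of the attribution and offline conversion work described earlier. All names and figures are hypothetical.

```python
from collections import defaultdict

# Ad platform layer: spend and platform-attributed conversions per campaign.
AD_PLATFORM = {
    "brand_search": {"spend": 1_000.0, "attributed_conversions": 80},
    "prospecting_video": {"spend": 3_000.0, "attributed_conversions": 40},
}

# CRM layer: closed-won deals as (campaign, deal value).
CRM_DEALS = [
    ("brand_search", 2_000.0),
    ("prospecting_video", 9_000.0),
    ("prospecting_video", 6_000.0),
]


def commercial_view(ad_platform, crm_deals):
    """Join platform metrics to CRM revenue, as the BI layer would."""
    revenue = defaultdict(float)
    for campaign, value in crm_deals:
        revenue[campaign] += value

    report = {}
    for campaign, stats in ad_platform.items():
        conversions = stats["attributed_conversions"]
        closed = revenue.get(campaign, 0.0)
        report[campaign] = {
            # What the ad platform can show on its own.
            "cost_per_attributed_conversion": (
                stats["spend"] / conversions if conversions else None
            ),
            # What only the joined view can show.
            "closed_revenue": closed,
            "revenue_per_unit_spend": closed / stats["spend"],
        }
    return report


for campaign, row in commercial_view(AD_PLATFORM, CRM_DEALS).items():
    print(campaign, row)
```

In this invented example the campaign with the cheapest attributed conversions returns the least closed revenue per unit of spend, and neither the ad platform nor the CRM would show that on its own.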

BCG’s work on go-to-market strategy in financial services makes a point that applies more broadly: the organisations that understand their customers’ commercial behaviour at a granular level consistently outperform those that rely on aggregated metrics. The reporting stack is how you build that granular understanding in a paid advertising context.

The goal is not a perfect measurement system. It does not exist. The goal is honest approximation: a view of performance that is directionally accurate, commercially grounded, and honest about its own limitations. That is more useful than a precise-looking dashboard built on the wrong inputs.

If you are building out your measurement approach as part of a broader growth strategy, the go-to-market and growth strategy hub covers the wider commercial context that measurement needs to serve. Tracking revenue impact from ad engagement is not a technical problem in isolation. It is a strategic question about what your business needs to know to grow.

About the Author

Keith Lacy is a marketing strategist and former agency CEO with 20+ years of experience across agency leadership, performance marketing, and commercial strategy. He writes The Marketing Juice to cut through the noise and share what works.