Ad Testing Before Launch: What the Data Won’t Tell You

Ad testing market research is the process of exposing creative concepts, messaging, or finished ads to a defined audience before media spend commits, with the goal of predicting in-market performance. Done well, it reduces the risk of costly misfires and surfaces the kind of audience signal that briefing documents rarely capture. Done poorly, it produces a false sense of confidence and occasionally kills the best work in the room.

The gap between those two outcomes is almost never about methodology. It is about how you interpret what you get back.

Key Takeaways

- Ad testing research predicts directional risk, not guaranteed performance. Treat it as a probability filter, not a verdict.

- The most common failure in ad testing is optimising for recall and likeability when the actual business objective is conversion or consideration shift.

- Qualitative and quantitative methods answer different questions. Using only one gives you half the picture at best.

- Pre-testing is most valuable when it is embedded into the creative process early, not bolted on at the end as a sign-off exercise.

- The ads that test best in isolation are not always the ads that perform best in-market. Context, frequency, and placement all change the equation.

In This Article

- What Does Ad Testing Market Research Actually Cover?

- Why the Score Is Not the Point

- Qualitative vs Quantitative: Choosing the Right Tool

- Defining the Right Metrics Before You Test

- The Role of Competitive Context in Ad Testing

- When to Test and When to Just Run

- Connecting Ad Testing to Broader Research Strategy

- Practical Steps for Running Useful Ad Tests

I spent a period early in my career at lastminute.com, where the pace of campaign deployment was genuinely unlike anything I had experienced before. We launched a paid search campaign for a music festival and saw six figures of revenue within roughly 24 hours from what was, structurally, a fairly simple campaign. The speed was intoxicating. The lesson I took from it, though, was not about speed. It was about how quickly wrong assumptions get amplified at scale. When volume is low, a bad message costs you a few hundred pounds. When you are running at that kind of velocity, a bad message costs you many times that before anyone notices. Testing is not bureaucracy. It is risk management.

What Does Ad Testing Market Research Actually Cover?

Ad testing sits under a broader umbrella of market research disciplines. If you want to understand the full landscape, the Market Research and Competitive Intelligence hub covers the range of methodologies and where each one earns its place in a planning cycle.

Within that landscape, ad testing specifically covers pre-launch evaluation of creative work. That can mean anything from rough concept boards tested in a focus group setting through to finished video ads run through a quantitative panel. The scope varies by budget, timeline, and what question you are actually trying to answer.

The main categories are these:

- Concept testing: Evaluating early-stage ideas before production spend is committed. Usually qualitative, often exploratory.

- Copy testing: Assessing finished or near-finished ads against defined metrics such as recall, message comprehension, brand attribution, and purchase intent.

- A/B and multivariate testing: Running variants in-market or in controlled panels to measure relative performance across specific variables.

- Emotional response testing: Using facial coding, biometric response, or implicit association tests to measure reactions that respondents cannot or will not articulate verbally.

Each method is suited to a different stage of the creative process and a different type of question. The mistake most marketing teams make is picking one method and applying it everywhere, usually the quantitative panel because it produces a score that feels objective and defensible in a meeting.

Why the Score Is Not the Point

I have judged the Effie Awards, which are specifically designed to recognise marketing effectiveness rather than creative craft. The work that wins is almost never the work that would have scored highest in a pre-test panel. Effie-winning campaigns are often built on a sharp, sometimes uncomfortable insight that takes time to land. They work because they are right, not because they are immediately liked.

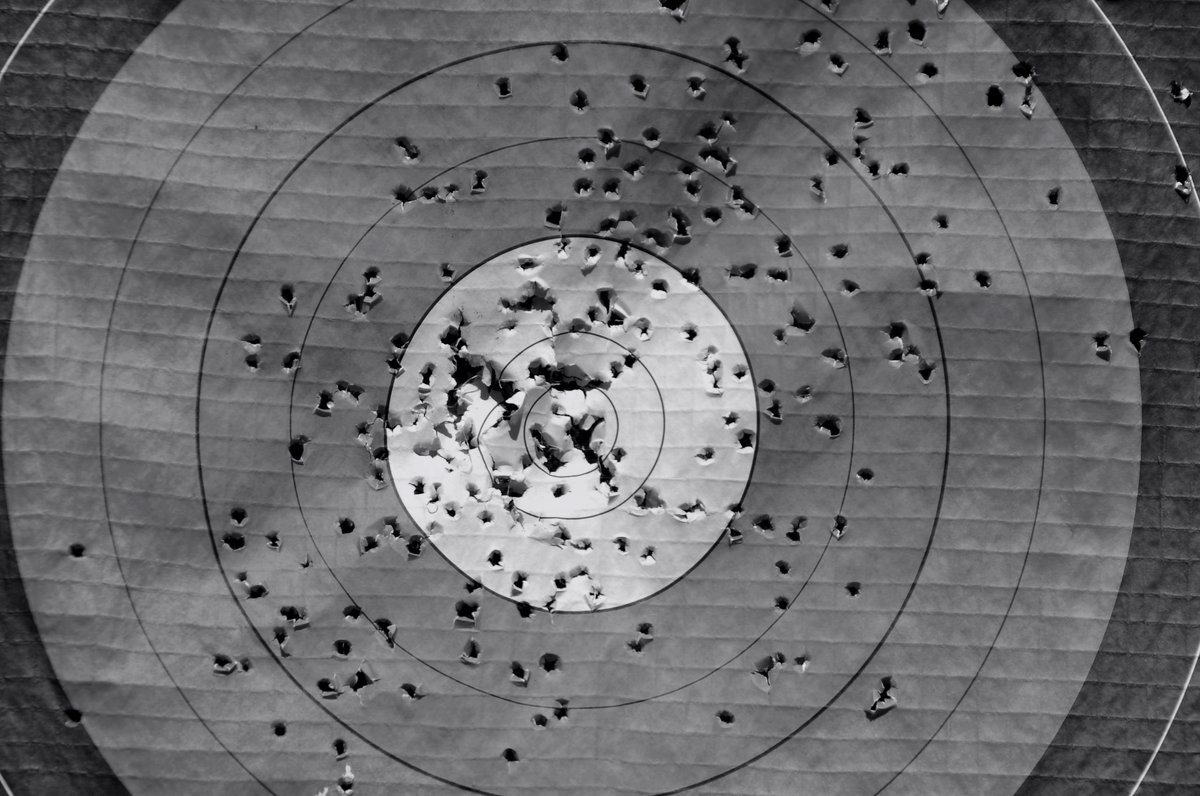

Pre-test scores measure immediate response in an artificial context. A respondent watching your ad on a laptop in a research panel environment, with no emotional priming from the surrounding content, is not the same person who encounters your ad mid-scroll on a Tuesday evening after a long day. The environment shapes the response. The score does not capture that.

This does not mean pre-testing is useless. It means you need to know what it is actually measuring and weight your decisions accordingly. A low recall score on a concept board is a signal worth investigating. It is not a reason to kill the idea.

Platforms like Optimizely publish research on how testing environments and methodology choices affect outcomes. The consistent finding is that testing in isolation underestimates the effect of context on response. That is worth keeping in mind when you are presenting results to a client or a board.

Qualitative vs Quantitative: Choosing the Right Tool

This is where a lot of teams go wrong. Qualitative methods, including focus groups and in-depth interviews, are good at surfacing the why. They tell you what is confusing people, what language resonates, where the emotional connection is landing or breaking down. They are not good at telling you how many people feel that way.

Quantitative methods, including online panels and in-market split tests, are good at telling you what proportion of a defined audience responds in a particular way. They are not good at explaining why, or at capturing nuance that a respondent cannot put into words.

If you are testing a concept at an early stage, qualitative is almost always the right starting point. The focus group methodology has real limitations when it is misapplied, but for early-stage concept work it remains one of the most efficient ways to understand how an idea is landing before you spend money finishing it.

If you are validating a finished execution and need to make a go/no-go call with budget attached, quantitative gives you a more defensible basis for that decision. The two methods are complementary, not competing. Running both in sequence, qualitative to refine and quantitative to validate, is the approach that consistently produces better outcomes than either alone.

Defining the Right Metrics Before You Test

The single most common failure mode in ad testing is measuring the wrong thing. Teams default to recall and likeability because those metrics are easy to collect and easy to present. But if your campaign objective is consideration shift in a category where your brand is not top of mind, recall tells you almost nothing useful. If your objective is direct response conversion, likeability is largely irrelevant.

Before you design a test, you need to be clear on three things:

- What is the specific business objective this campaign is serving? Not the marketing objective. The business objective.

- What audience behaviour does the campaign need to change to achieve that objective?

- What does success look like in the test environment, as a proxy for that behaviour change?

That third question is the hard one. The honest answer is that most pre-test metrics are imperfect proxies. Purchase intent scores overstate actual intent. Recall scores conflate memory with relevance. Brand attribution scores are sensitive to the order in which stimuli are presented. None of this means you should not measure. It means you should be honest about what the measurement is and is not telling you.

This connects directly to how you define your target audience for the test. If you are working in B2B SaaS and your campaign is aimed at a specific buyer profile, testing with a broad consumer panel will produce noise. Understanding your ideal customer profile (ICP) scoring criteria before you recruit your test sample is not optional. It is the difference between signal and irrelevant data.

The Role of Competitive Context in Ad Testing

Most ad testing is done in isolation. Your ad, your audience, your metrics. The problem is that ads do not run in isolation. They run in a competitive environment where your audience has already been exposed to messaging from your category, has formed associations with your competitors, and is making relative judgements whether you ask them to or not.

Building competitive context into your testing design is one of the most underused practices in the discipline. This can be as simple as including competitor ads in the stimulus set and measuring relative performance rather than absolute scores. It can also involve more sophisticated approaches that draw on search engine marketing intelligence to understand what messaging your competitors are already running at volume, so you can assess whether your creative is genuinely differentiated or whether it is saying something the category has already said to death.

I have reviewed campaigns that tested well in isolation and flatlined in market because the message was indistinguishable from three other brands running the same week. The test did not catch it because the test did not include those brands. Competitive context is not a nice-to-have. It is a validity condition.
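One way to make relative rather than absolute scoring concrete is to index your ad against the competitor ads in the same stimulus set. The sketch below is illustrative only: the function name, the sample scores, and the convention that 100 means parity with the competitor average are assumptions, not an established industry formula.

```python
from statistics import mean


def relative_index(own_score, competitor_scores):
    """Index an ad's score against the average of competitor ads.

    100 means parity with the competitor average (an assumed convention);
    above 100 means the ad outperformed the category stimulus.
    """
    if not competitor_scores:
        raise ValueError("at least one competitor score is required")
    benchmark = mean(competitor_scores)
    if benchmark == 0:
        raise ValueError("competitor benchmark is zero; index is undefined")
    return 100 * own_score / benchmark


# Hypothetical message-distinctiveness scores from the same panel.
own = 62
competitors = [58, 64, 61]
print(round(relative_index(own, competitors), 1))
```

An absolute score of 62 might look healthy on its own. Indexed against three competitors scoring about the same, it shows the ad is barely distinguishable from the category, which is the failure described above.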

There is also a category of intelligence that sits in less obvious places. Grey market research covers the kind of signal that does not come from formal research panels or commissioned studies, including social listening, community forums, and informal audience behaviour. For ad testing, this kind of source can surface the language your audience actually uses to describe their problem, which is often very different from the language your brief assumed they would use.

When to Test and When to Just Run

Not everything needs a pre-test. The decision about whether to test should be proportional to the stakes involved: the size of the media budget, the reversibility of the creative decision, and the cost of being wrong.

A tactical social post does not need a research programme behind it. A brand campaign that is going to anchor your positioning for the next 18 months probably does. A performance creative variant that you can swap out in 48 hours if the data looks wrong is a different risk profile from a TV execution that takes six weeks and six figures to produce.

The framework I use is simple. If the cost of being wrong is higher than the cost of the research, test. If the cost of being wrong is recoverable quickly from in-market data, the research budget is better spent elsewhere. This is not a sophisticated model. It is a proportionality check that most teams skip because they either over-test everything or test nothing.
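The proportionality check can be written down as a few lines of logic. This is a sketch under stated assumptions: the field names, the cost figures, and the 48-hour threshold for "recoverable quickly" are hypothetical inputs you would estimate yourself, and checking reversibility first is one reasonable reading of the rule rather than a fixed procedure.

```python
from dataclasses import dataclass


@dataclass
class CreativeDecision:
    name: str
    cost_if_wrong: float   # assumed estimate: wasted media, production, rework
    research_cost: float   # assumed estimate: cost of running the pre-test
    hours_to_swap: float   # time needed to replace the creative once live


def should_pretest(decision, max_recoverable_hours=48.0):
    """Return True when a pre-test looks proportionate to the stakes."""
    # Quickly reversible creative can be corrected from in-market data.
    if decision.hours_to_swap <= max_recoverable_hours:
        return False
    # Otherwise test only when being wrong costs more than the research.
    return decision.cost_if_wrong > decision.research_cost


# Hypothetical examples mirroring the two risk profiles described above.
tv_spot = CreativeDecision("TV execution", 250_000, 20_000, hours_to_swap=6 * 7 * 24)
search_variant = CreativeDecision("Paid search variant", 5_000, 20_000, hours_to_swap=48)

for d in (tv_spot, search_variant):
    print(d.name, "->", "test" if should_pretest(d) else "just run")
```

The value of writing it down is not the arithmetic. It forces someone to put a number on the cost of being wrong before the research budget is approved or declined.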

For digital ad copy specifically, the in-market test is often the most efficient research method available. Search Engine Journal’s analysis of Google ad copy makes the case that copy performance is highly sensitive to query context in ways that panel testing cannot replicate. For paid search, running controlled variants with statistically meaningful impression volumes is frequently more informative than a pre-launch panel study.
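What "statistically meaningful" looks like in practice can be sketched with a two-proportion z-test on click-through rate. This is a minimal illustration, not a method the article prescribes: the function name, the example counts, and the choice of test are assumptions, and it uses only the Python standard library.

```python
import math


def two_proportion_z_test(clicks_a, impressions_a, clicks_b, impressions_b):
    """Compare the click-through rates of two ad variants.

    Returns (z, p_value) for a two-sided test using a pooled standard error.
    """
    rate_a = clicks_a / impressions_a
    rate_b = clicks_b / impressions_b
    pooled = (clicks_a + clicks_b) / (impressions_a + impressions_b)
    std_err = math.sqrt(
        pooled * (1 - pooled) * (1 / impressions_a + 1 / impressions_b)
    )
    if std_err == 0:
        raise ValueError("no variation in the data; the test is undefined")
    z = (rate_b - rate_a) / std_err
    # Two-sided p-value under the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


# Hypothetical counts for two headline variants.
z, p = two_proportion_z_test(200, 10_000, 300, 10_000)
print(f"z = {z:.2f}, p = {p:.6f}")
```

A small p-value suggests the gap between variants is unlikely to be noise at that impression volume. It says nothing about why one variant won, which is where the qualitative work earns its place.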

Connecting Ad Testing to Broader Research Strategy

Ad testing does not exist in isolation from the rest of your research programme. The most effective testing setups I have seen are those where the pre-test is connected upstream to audience research and downstream to in-market measurement, so that you are building a continuous loop rather than a one-off event.

That upstream connection matters particularly when you are operating in a market where audience pain points are not well understood. Pain point research done before the creative brief is written changes the quality of the brief fundamentally. If you know what your audience is actually frustrated by, as opposed to what your account team assumes they are frustrated by, your creative starts from a more honest place. And work that starts from an honest place tends to test better and perform better, because it is saying something true rather than something assumed.

The downstream connection is measurement. Pre-test scores are hypotheses. In-market data is evidence. The discipline is in tracking whether your hypotheses were right and updating your testing models when they were not. Most organisations do not close that loop. They pre-test, they run, they look at campaign performance, and they move on. The learning that would improve the next test never gets captured.

This is also where the honest use of data matters most. I have seen organisations selectively report pre-test results, surfacing the metrics that supported the creative they wanted to run and burying the ones that did not. That is not research. It is confirmation bias with a research budget attached. The value of testing is that it tells you things you did not know or did not want to hear. If you are only listening to the parts you like, you have wasted the money.

Practical Steps for Running Useful Ad Tests

None of this requires a large research budget or a specialist team. The fundamentals are accessible to any marketing function that is willing to be disciplined about how it sets up and interprets the work.

Start with the question, not the method. Write down the specific decision this research needs to inform. If you cannot write that down in one sentence, you are not ready to design the test.

Recruit to your actual audience. This sounds obvious. It is routinely ignored. A general consumer panel is not your target audience unless your target audience is genuinely the general consumer population. If you are selling to a specific segment, recruit that segment, even if it costs more and takes longer.

Include competitive stimulus. Do not test your ad in a vacuum. Include category context. Your audience will provide more realistic responses when they are making relative judgements rather than absolute ones.

Measure what connects to your objective. If your objective is consideration, measure consideration shift. If your objective is conversion, measure purchase intent as a proxy, but be honest about the gap between stated intent and actual behaviour.

Close the loop. Track your pre-test predictions against in-market outcomes. Build a record of where your testing model was right and where it was wrong. Over time, that record is more valuable than any single test result.
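Closing the loop can start as something very small: a log of which variant the pre-test favoured and which one actually won in market. The sketch below assumes a hypothetical record structure and made-up campaign names, and it uses a simple hit rate as the summary. A real programme would likely track more than the winner.

```python
from dataclasses import dataclass


@dataclass
class PretestRecord:
    campaign: str
    predicted_winner: str  # variant the pre-test favoured
    actual_winner: str     # variant that performed best in market


def hit_rate(records):
    """Share of campaigns where the pre-test picked the in-market winner."""
    if not records:
        raise ValueError("no records to evaluate")
    hits = sum(r.predicted_winner == r.actual_winner for r in records)
    return hits / len(records)


def misses(records):
    """Campaigns where the pre-test and the market disagreed."""
    return [r.campaign for r in records if r.predicted_winner != r.actual_winner]


# Hypothetical history of three campaigns.
history = [
    PretestRecord("Spring launch", "A", "A"),
    PretestRecord("Summer promo", "B", "A"),
    PretestRecord("Autumn brand", "C", "C"),
]
print(f"Hit rate: {hit_rate(history):.0%}")
print("Review these:", misses(history))
```

The misses list is the useful part. Each entry is a prompt to ask what the test environment failed to capture, whether that was context, audience, or the metric itself.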

The broader point is that ad testing, like all research, is only as useful as the quality of thinking you bring to it. A well-designed test run by a team that understands its limitations will outperform an expensive proprietary methodology run by a team that treats the score as gospel. The tool is not the skill. The skill is in knowing what you are looking for and being honest about what you find.

For a broader view of how ad testing fits into a full market research and intelligence strategy, the Market Research and Competitive Intelligence hub covers the methodologies, frameworks, and decision criteria that sit around it.

The point I keep coming back to, after 20 years of watching campaigns succeed and fail, is that the organisations that get the most from ad testing are not the ones with the biggest research budgets. They are the ones that treat research as a conversation with their audience rather than a sign-off mechanism for decisions they have already made. That shift in posture changes everything about how useful the work becomes.

About the Author

Keith Lacy is a marketing strategist and former agency CEO with 20+ years of experience across agency leadership, performance marketing, and commercial strategy. He writes The Marketing Juice to cut through the noise and share what works.