Analytics Leadership: What Separates Data-Driven Teams From Data-Buried Ones

Analytics leadership is the organisational capability to turn measurement into decisions, not just reports. Most marketing teams have more data than they can act on, and the gap between collecting it and using it well is where commercial performance is won or lost.

The teams that get this right share a common trait: someone is responsible for the quality of the thinking, not just the quality of the dashboards. That distinction matters more than the tools you use or the volume of data you collect.

Key Takeaways

- Analytics leadership is an organisational capability, not a job title. It requires someone accountable for the quality of decisions, not just the accuracy of reports.

- Most marketing teams are data-rich and insight-poor. The bottleneck is rarely access to data; it is the discipline to ask better questions of it.

- A dashboard that nobody acts on is a liability, not an asset. Every metric on a live report should have a named owner and a clear decision attached to it.

- The best analytics cultures treat tools as a perspective on reality, not reality itself. GA4, attribution models, and marketing mix modelling (MMM) outputs are approximations, not ground truth.

- Analytics leadership scales when it is embedded into commercial conversations, not siloed in a reporting function that presents after the decisions have already been made.

In This Article

- Why Most Marketing Teams Are Data-Rich and Insight-Poor

- What Analytics Leadership Actually Means in Practice

- The Dashboard Problem Nobody Talks About

- How to Structure Accountability Around Analytics

- The Question of Tool Dependency

- Building a Culture Where Data Gets Challenged

- Where Analytics Leadership Connects to Commercial Performance

- The Organisational Signals That Analytics Leadership Is Missing

- What Good Analytics Leadership Looks Like at Different Scales

- The Practical Steps That Actually Move the Needle

Why Most Marketing Teams Are Data-Rich and Insight-Poor

When I was running agency teams at scale, one of the most common problems I saw with incoming clients was not a lack of data. It was the opposite. They had GA4 configured, dashboards built, monthly reports landing in inboxes, and attribution models running. What they did not have was a clear line between any of that and a commercial decision.

The reports were thorough. The analysis was often technically sound. But nobody could tell me what had changed in the business as a result of reading them. The data was being produced, not used.

This is the analytics leadership problem in its clearest form. It is not a technology failure. It is a governance failure. Someone needs to own the question of whether the measurement is actually driving better choices, and in most organisations, that accountability does not exist clearly enough to matter.

If you want a broader grounding in how analytics fits into modern marketing strategy, the Marketing Analytics and GA4 hub covers the full landscape, from measurement frameworks to platform-specific guidance.

What Analytics Leadership Actually Means in Practice

The phrase gets used loosely. In job descriptions, it usually means someone who can manage a team of analysts or present data to senior stakeholders. That is not wrong, but it is incomplete.

Real analytics leadership means setting the standard for how data is interpreted, challenged, and used across the marketing function. It means being the person in the room who asks whether the metric actually reflects the outcome the business cares about, or whether it is a proxy that has become disconnected from reality through habit.

I have judged at the Effie Awards, which is one of the few places in marketing where effectiveness evidence is actually scrutinised rather than celebrated at face value. What separates the entries that hold up from the ones that fall apart under questioning is almost always the quality of the thinking behind the measurement, not the sophistication of the tools used to produce it. Teams that win are the ones where someone has genuinely interrogated whether the data supports the claim being made.

That interrogation is analytics leadership. It is a discipline, not a software licence.

The Dashboard Problem Nobody Talks About

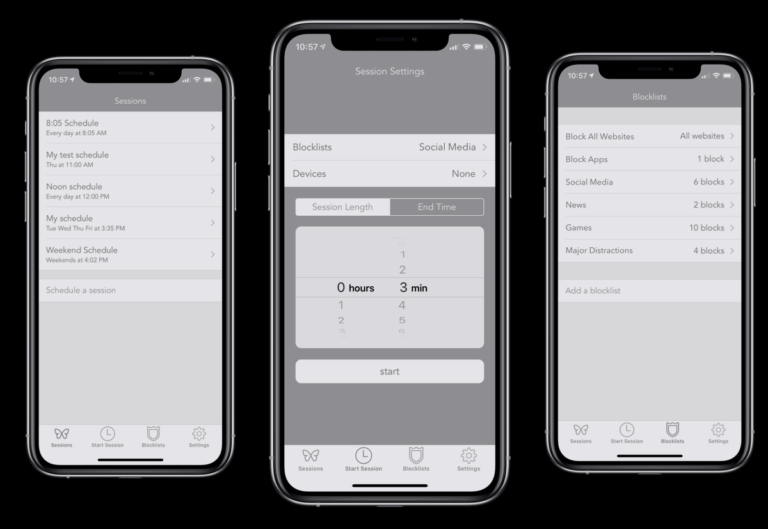

Dashboards have become the default output of analytics work, and in many organisations they have also become the end point. The dashboard is built, shared, and then checked periodically to confirm that numbers are moving in the right direction. If they are, nothing changes. If they are not, the conversation usually starts with the data rather than the strategy.

There is a practical way to audit this. Look at your current live dashboards and ask two questions for every metric displayed: who owns this number, and what decision does it inform? If you cannot answer both questions confidently for a given metric, it should not be on the dashboard. It is occupying attention without earning it.
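The two-question audit can be sketched in a few lines of Python. This is a minimal illustration, not a prescribed method: the `Metric` class, the `audit_dashboard` function, and the sample metrics are all hypothetical names invented for the example.

```python
from dataclasses import dataclass
from typing import List, Optional, Tuple


@dataclass
class Metric:
    """One metric on a live dashboard (hypothetical structure)."""
    name: str
    owner: Optional[str] = None      # who owns this number?
    decision: Optional[str] = None   # what decision does it inform?


def audit_dashboard(metrics: List[Metric]) -> Tuple[List[str], List[str]]:
    """Split metrics into those that answer both questions and those that do not."""
    keep, remove = [], []
    for m in metrics:
        has_owner = bool(m.owner and m.owner.strip())
        has_decision = bool(m.decision and m.decision.strip())
        if has_owner and has_decision:
            keep.append(m.name)
        else:
            remove.append(m.name)
    return keep, remove


# Illustrative data only; these metrics and owners are made up.
dashboard = [
    Metric("paid_search_revenue",
           owner="Head of Performance",
           decision="Weekly budget reallocation across campaigns"),
    Metric("sessions", owner="Analytics lead"),  # owner, but no decision
    Metric("bounce_rate"),                       # neither
]

keep, remove = audit_dashboard(dashboard)
print("Keep:", keep)
print("Remove or justify:", remove)
```

Even a spreadsheet version of this works. The point is that the owner and the decision are recorded next to the metric, so a gap is visible rather than assumed away.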

Building a dashboard that actually drives decisions requires a different starting point. Crazy Egg’s guide to building GA dashboards is a useful reference for the structural thinking involved, particularly around choosing metrics that connect to outcomes rather than activity. The principle applies whether you are working in GA4, a BI tool, or a custom reporting setup.

The teams I have seen do this well share one habit: they define the decision before they define the metric. They start with what they need to know to make a better choice, and then they work backwards to the data that would inform it. Most teams do the reverse. They look at the data they have and try to find decisions that fit.

How to Structure Accountability Around Analytics

One of the structural changes that made the biggest difference when I was scaling agency teams was separating reporting accountability from analytical accountability. These are different jobs and they require different skills. Conflating them is one of the main reasons analytics functions underdeliver.

Reporting accountability means the data is accurate, timely, and consistently formatted. It is important, but it is table stakes. Analytical accountability means someone is responsible for the quality of the interpretation, for challenging whether the numbers are telling the right story, and for connecting the findings to a commercial recommendation.

When these are the same role, the reporting tends to crowd out the analysis. There is always another dashboard to update, another weekly deck to prepare, another data pull to run. The thinking gets squeezed out by the production.

Separating them, even informally in smaller teams, creates space for the harder work. It also creates a clearer escalation path when the data is ambiguous or when different metrics are pointing in different directions, which is more often than most reporting frameworks acknowledge.

The Question of Tool Dependency

There is a version of analytics leadership that is essentially tool expertise dressed up as strategic thinking. The analyst knows GA4 deeply, can configure custom events, build Looker Studio reports, and troubleshoot tracking issues. These are genuinely valuable skills. They are not, on their own, analytics leadership.

The risk of over-indexing on tool expertise is that it creates a kind of measurement parochialism. The tool becomes the frame through which all questions are filtered. If GA4 cannot measure it, it does not get measured. If the attribution model says channel X is underperforming, the conversation stops there rather than asking whether the attribution model is the right instrument for the question being asked.

It is worth knowing what alternatives exist and what they are better suited to. Moz has a useful overview of GA alternatives that is worth reading not because you should necessarily switch, but because understanding the landscape helps you use whatever tools you have with more critical awareness of their limitations.

I have always found it useful to think of any analytics platform as a perspective on reality rather than reality itself. GA4 shows you a version of user behaviour filtered through its own data model, sampling logic, and attribution rules. That version is useful. It is not complete. Analytics leaders hold that distinction clearly, even when it is inconvenient.

Building a Culture Where Data Gets Challenged

Early in my career, I worked in an environment where the data was treated as authoritative by default. If the numbers said something was working, it was working. If they said something was not, the conversation moved on. The idea that the measurement itself might be flawed was not a comfortable one to raise, particularly if the numbers were telling a story that suited someone’s agenda.

That culture produces a particular kind of analytical failure: the kind where everyone is looking at the same dashboard and nobody is asking whether it is measuring the right things. It is not dishonesty. It is the absence of permission to challenge.

Analytics leadership creates that permission. It models the behaviour of questioning data, not to be difficult, but because honest approximation is more useful than false precision. A number that is directionally right and clearly understood is worth more than a number that is precisely wrong but confidently presented.

Practically, this means building review processes where the question “is this the right way to measure this?” is a standing item, not an occasional disruption. It means rewarding the analyst who flags a tracking problem that invalidates three months of data, rather than treating them as the bearer of bad news. And it means being willing to present ambiguous findings to senior stakeholders rather than forcing clarity that the data does not actually support.

Where Analytics Leadership Connects to Commercial Performance

The clearest test of whether analytics leadership is working is whether it changes behaviour at a commercial level. Not whether the reports are better, or whether the dashboards are cleaner, or whether the team has adopted a new tool. Whether decisions are different, and whether those different decisions are producing better outcomes.

One of the sharpest moments I had on this came from a paid search campaign I ran at lastminute.com for a music festival. The campaign itself was not particularly sophisticated. But the measurement was tight enough that we could see revenue impact in near real time, which meant we could make decisions about budget allocation within hours rather than weeks. The analytical infrastructure was what made the commercial agility possible. Without it, the campaign would have run to plan regardless of what the data was showing.

That is the commercial case for analytics leadership. It is not about producing better reports. It is about compressing the time between signal and response. Teams that can read what is happening and act on it faster than their competitors have a structural advantage that compounds over time.

Integrating behavioural data alongside platform analytics is part of how leading teams build that speed. Hotjar’s integration with Google Analytics is one example of how session-level behaviour can add context to aggregate metrics, giving analysts a richer picture to work from when making time-sensitive decisions.

The Organisational Signals That Analytics Leadership Is Missing

There are some reliable indicators that an organisation lacks genuine analytics leadership, regardless of how sophisticated its tooling appears.

The first is when analytics is a retrospective function. The team produces reports about what happened last month, but is not involved in shaping what happens next month. The data arrives after the decisions have been made, which means it can confirm or confound but cannot actually inform.

The second is when different teams are running different attribution models and nobody has reconciled them. Finance is looking at last-click. The media team is running a data-driven model. The brand team is not using attribution at all. Each team is optimising for a different version of performance, and nobody has the authority or the mandate to align them.

The third is when the response to a bad month is to question the strategy rather than the measurement. Sometimes the strategy is wrong. But sometimes the measurement is wrong, and the strategy is fine. Analytics leadership means being able to distinguish between the two before committing to a change.

The fourth signal is when the analytics team and the marketing team are in separate conversations. Analytics presents findings. Marketing presents plans. The two rarely intersect in a way that changes either. That separation is a structural problem, and it does not resolve itself through better tooling.

Understanding how to extract meaningful signals from within individual platforms is part of the foundation. SEMrush’s breakdown of keyword data in Google Analytics is a useful example of how platform-specific analysis can surface insights that inform broader strategic decisions, when it is connected to the right questions.
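The second signal, unreconciled attribution models, is easy to underestimate until you see the same conversions credited two ways. The sketch below is a toy comparison, assuming made-up conversion paths and revenue figures. It uses a simple linear (equal-credit) model as a stand-in for a multi-touch view, not GA4's data-driven model, and every function name is hypothetical.

```python
from collections import defaultdict
from typing import Callable, Dict, List, Tuple

Path = List[str]  # ordered channel touchpoints before a conversion


def last_click(path: Path) -> Dict[str, float]:
    """All credit goes to the final touchpoint."""
    return {path[-1]: 1.0}


def linear(path: Path) -> Dict[str, float]:
    """Credit is split equally across every touchpoint."""
    credit: Dict[str, float] = defaultdict(float)
    share = 1.0 / len(path)
    for channel in path:
        credit[channel] += share
    return dict(credit)


def attribute(paths: List[Tuple[Path, float]],
              model: Callable[[Path], Dict[str, float]]) -> Dict[str, float]:
    """Total revenue credited to each channel under a given model."""
    totals: Dict[str, float] = defaultdict(float)
    for path, revenue in paths:
        for channel, weight in model(path).items():
            totals[channel] += weight * revenue
    return dict(totals)


# Illustrative conversion paths and revenue; not real data.
paths = [
    (["paid_social", "email", "paid_search"], 120.0),
    (["paid_search"], 80.0),
    (["organic", "paid_search"], 100.0),
]

last_click_totals = attribute(paths, last_click)
linear_totals = attribute(paths, linear)

for channel in sorted(set(last_click_totals) | set(linear_totals)):
    print(f"{channel:12s} last-click={last_click_totals.get(channel, 0.0):7.2f} "
          f"linear={linear_totals.get(channel, 0.0):7.2f}")
```

Both models account for the same total revenue. They simply distribute it differently, which is why two teams can each be right by their own numbers while optimising against each other.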

What Good Analytics Leadership Looks Like at Different Scales

The shape of analytics leadership changes depending on the size and complexity of the organisation, but the core requirements stay consistent.

In a small team, analytics leadership often sits with the most commercially curious person in the room, regardless of their job title. It is the performance marketer who asks whether the conversion rate improvement actually changed revenue, or whether it just shifted the mix. It is the founder who refuses to celebrate a traffic increase until they understand where it came from and whether it converted.

In a mid-size organisation, it typically requires a designated function with a clear mandate. Not just an analytics team that produces reports, but a team that has a seat at the table when strategy is being set and is expected to challenge assumptions rather than validate them. Unbounce’s thinking on simplifying marketing analytics is worth reading here, particularly for teams that are trying to build analytical rigour without drowning in complexity.

In a large enterprise, analytics leadership becomes a governance question as much as a skills question. Who has the authority to set measurement standards across business units? Who arbitrates when different teams are using different methodologies? Who is responsible for the integrity of the data that feeds into board-level reporting? These are not technical questions. They are organisational ones, and they require someone with enough seniority and credibility to hold the line.

The social and mobile dimensions of analytics add another layer of complexity at every scale. Crazy Egg’s overview of GA for social and mobile covers some of the practical measurement challenges that analytics leaders need to account for when building a coherent cross-channel picture.

The Practical Steps That Actually Move the Needle

If I were advising a marketing director who wanted to build stronger analytics leadership in their organisation, I would start with three things that do not require new tools or additional headcount.

First, audit every live dashboard against the decision it informs. Remove anything that does not have a clear owner and a clear decision attached to it. This will be uncomfortable. It will also immediately clarify where the analytical energy should be focused.

Second, introduce a standing question in every marketing review: what would change our interpretation of this data? This forces the team to think about the assumptions embedded in their measurement and to identify the conditions under which the story they are telling would be wrong. It is a small habit with a significant effect on analytical culture over time.

Third, make sure analytics is present at the beginning of campaign planning, not just at the end. The measurement framework should be agreed before the campaign launches, including what success looks like, what the counterfactual is, and how you will distinguish between the campaign working and other factors moving in your favour. This is basic experimental discipline, and it is rarer than it should be.
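One way to make the counterfactual concrete is to agree on a holdout group before launch and compare it with the exposed group afterwards. The sketch below assumes that kind of simple holdout design, which is only one of several valid approaches. The function name and the numbers are illustrative, and it does not test for statistical significance.

```python
from typing import Dict, Optional


def incremental_lift(test_conversions: int, test_users: int,
                     control_conversions: int, control_users: int
                     ) -> Dict[str, Optional[float]]:
    """Compare an exposed group with a holdout (the counterfactual)."""
    if test_users <= 0 or control_users <= 0:
        raise ValueError("Both groups need at least one user")

    test_rate = test_conversions / test_users
    control_rate = control_conversions / control_users
    absolute_lift = test_rate - control_rate

    # Relative lift is undefined when the holdout had no conversions.
    relative_lift = absolute_lift / control_rate if control_rate > 0 else None

    return {
        "test_rate": test_rate,
        "control_rate": control_rate,
        "absolute_lift": absolute_lift,
        "relative_lift": relative_lift,
        # Conversions the campaign added beyond what the holdout rate predicts.
        "incremental_conversions": absolute_lift * test_users,
    }


# Illustrative numbers only.
result = incremental_lift(test_conversions=300, test_users=10_000,
                          control_conversions=250, control_users=10_000)
for key, value in result.items():
    print(f"{key}: {value:.4f}")
```

The calculation matters less than the timing. The definition of success and the comparison group are fixed before the campaign runs, so the result cannot be reinterpreted after the fact.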

For teams building out their UTM discipline as part of this process, SEMrush’s guide to UTM tracking codes is a solid practical reference. Clean campaign tagging is one of the lowest-cost, highest-return investments in data integrity that any team can make.
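As a rough illustration of what clean tagging can look like in practice, the sketch below builds and checks campaign URLs using Python's standard `urllib.parse` module. The lowercase-and-underscore naming convention and the choice of three required parameters are assumptions made for the example, not a standard, and the helper names are hypothetical.

```python
from typing import List
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# Assumed house rule: these three parameters must always be present.
REQUIRED_UTM = ("utm_source", "utm_medium", "utm_campaign")


def normalise(value: str) -> str:
    """Assumed convention: lowercase, with underscores instead of spaces."""
    return value.strip().lower().replace(" ", "_")


def tag_url(url: str, source: str, medium: str, campaign: str) -> str:
    """Add normalised UTM parameters, keeping any existing query parameters."""
    parts = urlsplit(url)
    params = dict(parse_qsl(parts.query))
    params.update({
        "utm_source": normalise(source),
        "utm_medium": normalise(medium),
        "utm_campaign": normalise(campaign),
    })
    return urlunsplit(parts._replace(query=urlencode(params)))


def missing_utm(url: str) -> List[str]:
    """List the required UTM parameters that are absent or empty."""
    params = dict(parse_qsl(urlsplit(url).query))
    return [key for key in REQUIRED_UTM if not params.get(key)]


tagged = tag_url("https://example.com/offer?ref=nav",
                 source="Newsletter", medium="Email", campaign="Spring Sale")
print(tagged)
print(missing_utm("https://example.com/offer?utm_source=newsletter"))
```

Running a check like `missing_utm` over every outbound link before launch is cheap, and it catches the inconsistencies that otherwise fragment campaign reporting into several near-duplicate rows.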

If you want to go deeper on the frameworks and tools that support this kind of analytical rigour, the Marketing Analytics and GA4 hub is the right place to continue. It covers the full range of measurement approaches, from attribution to MMM to platform-specific configuration, all through the same commercially grounded lens.

About the Author

Keith Lacy is a marketing strategist and former agency CEO with 20+ years of experience across agency leadership, performance marketing, and commercial strategy. He writes The Marketing Juice to cut through the noise and share what works.