Analytics Operating Model: Why Most Teams Have the Tools But Not the System

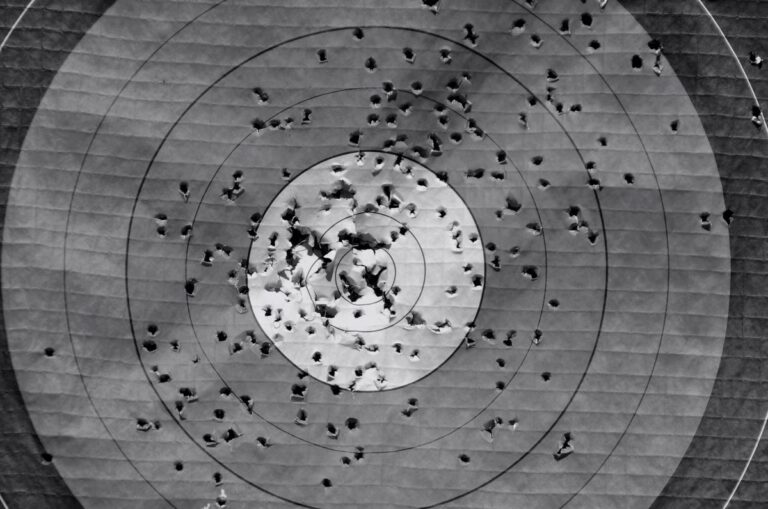

An analytics operating model is the framework that defines how your organisation collects, interprets, and acts on marketing data. It covers who owns what, how data flows between tools and teams, what gets measured and why, and how insights connect to commercial decisions. Without one, even the most sophisticated analytics stack produces noise rather than signal.

Most marketing teams have invested heavily in tools. Fewer have invested in the system that makes those tools useful. That gap is where analytics capability goes to die.

Key Takeaways

- An analytics operating model defines ownership, data flow, measurement priorities, and how insight connects to decisions, not just which tools you use.

- Tool proliferation without governance creates conflicting numbers, eroded trust, and analysis paralysis across teams.

- The most common failure point is not data quality; it is the absence of a clear decision-making process built around the data.

- Effective models assign named accountability for data integrity, not just data access.

- A working analytics operating model is not built once; it requires regular calibration as business priorities and data sources evolve.

In This Article

- What Does an Analytics Operating Model Actually Include?

- Why Tool Investment Alone Does Not Solve the Problem

- The Four Layers of a Functional Analytics Operating Model

- Common Failure Modes and What Causes Them

- Who Should Own the Analytics Operating Model?

- How to Audit Your Current Analytics Operating Model

- Building the Model Without Starting From Scratch

I have spent most of my career watching this problem from the inside. At iProspect, when I was building the team from around 20 people to over 100, one of the hardest things to scale was not the headcount or the client base; it was the coherence of how we measured performance. Every new client brought new data sources, new attribution preferences, and new definitions of what “conversion” meant. Without a shared operating model, every account became its own measurement island. Decisions slowed down. Reporting became a negotiation rather than a foundation.

What Does an Analytics Operating Model Actually Include?

The phrase sounds more abstract than it is. Strip it back and an analytics operating model is really an answer to five practical questions: What are we measuring? Who owns the data? How does it flow between systems? Who interprets it? And how does interpretation become action?

Most teams can answer the first question reasonably well. They have KPIs. They have dashboards. They have GA4 installed and a reporting cadence. The breakdowns tend to happen on questions two through five, where the work is less glamorous and the accountability is less clear.

Ownership is the first pressure point. When a discrepancy appears between what the CRM says and what GA4 says, who resolves it? If the answer is “whoever noticed it” or “we raise it in the next weekly meeting,” you do not have an operating model; you have a loosely connected set of tools and a hope that someone will care enough to investigate. If you are building out your analytics infrastructure, the broader marketing analytics hub covers the full landscape of measurement, attribution, and tooling decisions worth thinking through alongside the operating model question.
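One way to make “who resolves it?” concrete is a scheduled reconciliation that routes every material disagreement to a named owner instead of to whoever happens to notice. This is a minimal sketch under assumptions: it supposes you can export daily revenue from the CRM and from GA4 into simple day-to-value mappings, and the 5% tolerance and owner address are hypothetical placeholders, not recommendations.

```python
from dataclasses import dataclass


@dataclass
class Discrepancy:
    day: str
    crm_revenue: float
    analytics_revenue: float
    gap_pct: float
    owner: str  # the named person responsible for resolving it


def reconcile(crm: dict[str, float], analytics: dict[str, float],
              owner: str, tolerance: float = 0.05) -> list[Discrepancy]:
    """Flag days where the two sources disagree by more than `tolerance`
    (as a fraction of the CRM figure) and assign each one to `owner`."""
    issues = []
    for day in sorted(set(crm) | set(analytics)):
        c = crm.get(day, 0.0)
        a = analytics.get(day, 0.0)
        base = max(abs(c), 1e-9)  # avoid division by zero on empty CRM days
        gap = abs(c - a) / base
        if gap > tolerance:
            issues.append(Discrepancy(day, c, a, round(gap, 3), owner))
    return issues


# Hypothetical example data: only the second day breaches the tolerance.
crm = {"2024-05-01": 10000.0, "2024-05-02": 12000.0}
ga4 = {"2024-05-01": 9800.0, "2024-05-02": 9000.0}
for issue in reconcile(crm, ga4, owner="data.steward@example.com"):
    print(issue)
```

The point of the design is less the arithmetic than the `owner` field: every flagged gap arrives with a person attached.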

Data flow is the second pressure point. Tools do not naturally talk to each other in ways that are commercially meaningful. UTM parameters need to be consistent across campaigns for source attribution to make sense. Video engagement data from platforms like Wistia needs to be connected to GA4 deliberately, not assumed. Behavioural data from heatmap and session tools needs to be interpreted alongside quantitative traffic data rather than in isolation. None of this happens automatically. It requires design decisions made by someone with both technical understanding and commercial context.
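Consistency in UTM parameters is easier to enforce when the convention is checkable rather than tribal knowledge. The sketch below validates a landing-page URL against a hypothetical house convention: the three required parameters, lowercase values with no spaces, and an agreed list of `utm_medium` values. The allowed mediums are illustrative assumptions; substitute whatever your team has actually agreed.

```python
from urllib.parse import urlparse, parse_qs

REQUIRED = ("utm_source", "utm_medium", "utm_campaign")
# Hypothetical agreed list of mediums; replace with your own convention.
ALLOWED_MEDIUMS = {"cpc", "email", "social", "display", "referral"}


def utm_problems(url: str) -> list[str]:
    """Return a list of convention breaches for one tagged URL."""
    params = parse_qs(urlparse(url).query)  # blank values are dropped, so they count as missing
    problems = []
    for key in REQUIRED:
        if key not in params:
            problems.append(f"missing {key}")
    for key, values in params.items():
        if key.startswith("utm_"):
            for v in values:
                if v != v.lower() or " " in v:
                    problems.append(f"{key}={v!r} is not lowercase/no-spaces")
    if "utm_medium" in params:
        medium = params["utm_medium"][0].lower()
        if medium not in ALLOWED_MEDIUMS:
            problems.append(f"utm_medium={medium!r} not in agreed list")
    return problems


print(utm_problems("https://example.com/?utm_source=google&utm_medium=cpc&utm_campaign=spring_sale"))
print(utm_problems("https://example.com/?utm_source=Google&utm_medium=PPC"))
```

Running a check like this before a campaign launches is cheaper than untangling “Google”, “google” and “PPC” vs “cpc” in the reports afterwards.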

Why Tool Investment Alone Does Not Solve the Problem

There is a pattern I have seen repeat across clients and agency relationships for two decades. A business decides its analytics are not good enough. It buys more tools. The reporting becomes more complex. The number of dashboards multiplies. The number of people who trust any of them drops. Six months later, the conversation starts again.

The tools are not the problem. The problem is that tools answer questions; they do not ask them. Without a framework that defines which questions matter commercially, a team with access to GA4, a CRM, a heatmap tool, a paid media platform, and a BI layer will produce a lot of data and very little clarity.

BCG’s research into data and analytics maturity in financial institutions identified a consistent finding that applies well beyond that sector: organisations that get sustained value from analytics invest in the operating model around the data, not just the data infrastructure itself. The capability gap is almost never technical. It is structural and organisational.

I remember a client conversation from my agency days where the marketing director walked me through their analytics setup with obvious pride. They had everything. Multiple platforms, a custom dashboard, weekly automated reports going to twelve people. When I asked what decision had been changed in the last quarter as a result of the data, there was a long pause. The honest answer was: none. The data was being collected and distributed. It was not being used.

The Four Layers of a Functional Analytics Operating Model

A model that actually works tends to operate across four distinct layers. Each one is necessary. Missing any of them creates a specific, predictable failure mode.

Layer 1: Data Collection and Integrity

This is the foundation. It covers how data enters your ecosystem, how clean it is, and how consistently it is structured. UTM tracking discipline sits here. Consistent UTM parameter conventions are not a technical nicety; they are the difference between knowing which campaigns drove revenue and guessing. GA4 filter configuration sits here too. Filtering out internal traffic and bot traffic is basic hygiene, but it is routinely skipped or misconfigured in ways that corrupt the data everyone downstream relies on.
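In GA4 itself, internal-traffic exclusion is configured through admin settings rather than code, but the underlying rule logic is simple enough to sketch. The example below assumes you hold raw event records that carry an IP address and user agent (for example, server-side logs; GA4's standard exports do not expose IP addresses), and the network ranges and bot markers are hypothetical placeholders. The user-agent check is a crude heuristic, not a complete bot filter.

```python
import ipaddress

# Hypothetical office and VPN ranges; replace with your own.
INTERNAL_NETWORKS = [ipaddress.ip_network(n) for n in ("203.0.113.0/24", "10.0.0.0/8")]
# Crude substring heuristic for self-declared bots.
BOT_MARKERS = ("bot", "crawler", "spider", "headless")


def is_internal(ip: str) -> bool:
    addr = ipaddress.ip_address(ip)
    return any(addr in net for net in INTERNAL_NETWORKS)


def is_probable_bot(user_agent: str) -> bool:
    ua = user_agent.lower()
    return any(marker in ua for marker in BOT_MARKERS)


def clean(events: list[dict]) -> list[dict]:
    """Keep only events that are neither internal nor obviously automated."""
    return [
        e for e in events
        if not is_internal(e["ip"]) and not is_probable_bot(e["user_agent"])
    ]


events = [
    {"ip": "203.0.113.7", "user_agent": "Mozilla/5.0"},     # office traffic
    {"ip": "198.51.100.4", "user_agent": "Googlebot/2.1"},  # crawler
    {"ip": "198.51.100.5", "user_agent": "Mozilla/5.0"},    # real visitor
]
print(clean(events))
```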

Ownership at this layer means someone specific is accountable for data integrity. Not “the analytics team” generically. A named person who audits the setup, catches drift when tracking breaks, and maintains documentation of what is being measured and why.
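Documentation of “what is being measured and why” can be kept as a small tracking plan with an owner per event, which also makes drift detectable: events firing that nobody documented, and documented events that have stopped firing. A minimal sketch follows; `purchase` and `generate_lead` are GA4 recommended event names, while `video_complete`, the purposes and the owner handles are hypothetical examples.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TrackedEvent:
    name: str
    purpose: str  # why we measure it
    owner: str    # named person accountable for it


# Hypothetical tracking plan.
TRACKING_PLAN = {
    e.name: e for e in (
        TrackedEvent("purchase", "Primary revenue metric", "a.steward"),
        TrackedEvent("generate_lead", "Lead volume for sales pipeline", "a.steward"),
        TrackedEvent("video_complete", "Content engagement", "b.analyst"),
    )
}


def tracking_drift(observed: set[str]) -> dict[str, set[str]]:
    """Compare event names seen in the data against the documented plan."""
    planned = set(TRACKING_PLAN)
    return {
        "undocumented": observed - planned,  # firing, but nobody recorded why
        "missing": planned - observed,       # documented, but not firing (broken tracking?)
    }


print(tracking_drift({"purchase", "generate_lead", "form_sbmit"}))
```

A typo such as `form_sbmit` shows up as undocumented, and a silently broken `video_complete` tag shows up as missing, each of which can be routed to the owner in the plan.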

Layer 2: Integration and Flow

Data collected in isolation is only partially useful. The second layer defines how data moves between systems and what connections are maintained. This includes how your website analytics connect to your CRM, how your paid media platforms feed into your reporting layer, and how behavioural tools like session recording and heatmaps sit alongside quantitative analytics.

Tools like Hotjar integrated with Google Analytics can surface the qualitative context behind quantitative patterns. A page with high traffic and low conversion looks different when you can see where users are dropping off behaviourally. But that integration needs to be set up and interpreted deliberately. The data does not interpret itself.
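Deliberate interpretation can start with a simple rule for deciding which pages deserve qualitative review in a session-recording or heatmap tool. The sketch below assumes you have exported per-page sessions and conversions from your analytics platform; the thresholds of 1,000 sessions and a 1% conversion rate are illustrative assumptions, not benchmarks.

```python
def pages_for_qualitative_review(pages, min_sessions=1000, max_conv_rate=0.01):
    """Return (path, sessions, conversion_rate) for pages with enough traffic
    to matter and a conversion rate at or below `max_conv_rate`, worst first."""
    flagged = []
    for path, sessions, conversions in pages:
        if sessions >= min_sessions:
            rate = conversions / sessions
            if rate <= max_conv_rate:
                flagged.append((path, sessions, round(rate, 4)))
    return sorted(flagged, key=lambda row: row[2])


# Hypothetical export: (path, sessions, conversions)
pages = [
    ("/pricing", 5000, 20),
    ("/blog/guide", 8000, 4),
    ("/contact", 300, 1),      # too little traffic to judge
    ("/product", 4000, 120),   # converting reasonably
]
print(pages_for_qualitative_review(pages))
```

The output is a short watch list for the behavioural tool, which keeps the qualitative review tied to a quantitative question rather than browsing recordings at random.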

The failure mode at this layer is siloed interpretation. Paid media teams looking only at platform data. SEO teams looking only at organic traffic. No one looking at the full picture of how a customer actually moved from awareness to conversion. An operating model defines who is responsible for cross-channel coherence, not just channel-level performance.

Layer 3: Interpretation and Insight

This is where most operating models have the biggest gap. Collecting and connecting data is hard. Interpreting it well is harder. It requires commercial context, not just analytical skill.

When I was managing paid search campaigns at lastminute.com, we could see performance data almost in real time. A campaign I ran for a music festival generated six figures of revenue within roughly a day of going live. That speed of feedback was exhilarating, but it also created a trap: the temptation to optimise constantly based on short-term signals rather than stepping back to ask what the data was actually telling us about customer behaviour at a structural level. Real interpretation requires distance as well as proximity to the numbers.

The operating model at this layer defines who has the mandate to interpret data and draw conclusions, not just report numbers. It distinguishes between reporting (what happened) and analysis (why it happened and what it means). Those are different skills and often different roles. Conflating them is one of the most common reasons analytics functions underdeliver commercially.

Layer 4: Decision and Action

The final layer is where analytics either earns its place or becomes an expensive reporting exercise. This layer defines how insight connects to commercial decisions: which decisions require data sign-off, which can be made on directional evidence, and how quickly the organisation can move from insight to action.

Slow decision cycles erode the value of good analytics. If a team identifies a clear opportunity in the data but it takes three weeks to get budget approval and another two to implement the change, the window may have closed. Speed of decision is a design choice, not an accident. The operating model should define it explicitly.
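Writing decision rules down makes speed of decision explicit: each rule names the metric, the movement that triggers it, the decision, who has authority to make it, and how long they have. The sketch below is hypothetical throughout; the metric names, thresholds, roles and day limits are placeholders to show the shape of such a rule set.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DecisionRule:
    metric: str
    threshold: float         # relative change that triggers the rule
    decision: str
    owner: str               # who has authority to act
    max_days_to_decide: int  # speed of decision, set explicitly


# Hypothetical rule set.
RULES = [
    DecisionRule("paid_search_cpa", 0.20, "Review bids and budget split", "head.of.performance", 2),
    DecisionRule("lead_volume", -0.15, "Escalate pipeline risk to sales", "marketing.director", 5),
]


def triggered(changes: dict[str, float]) -> list[DecisionRule]:
    """Return the rules whose metric moved past its threshold.
    Positive thresholds fire on rises, negative thresholds on falls."""
    hits = []
    for rule in RULES:
        change = changes.get(rule.metric)
        if change is None:
            continue
        if (rule.threshold >= 0 and change >= rule.threshold) or \
           (rule.threshold < 0 and change <= rule.threshold):
            hits.append(rule)
    return hits


for rule in triggered({"paid_search_cpa": 0.25, "lead_volume": -0.05}):
    print(f"{rule.owner}: {rule.decision} (within {rule.max_days_to_decide} days)")
```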

Common Failure Modes and What Causes Them

Having seen analytics functions at dozens of organisations, I find the failure modes cluster into a recognisable set. Naming them is useful because they tend to be misdiagnosed. Teams assume the problem is the tool when it is almost always the model around the tool.

Conflicting numbers. Two teams look at the same period and report different revenue figures. This is almost always a data integrity or integration problem at Layer 1 or 2. The symptom looks like a technical issue. The cause is usually the absence of a single source of truth and a named owner responsible for maintaining it.

Dashboard proliferation. Every team builds its own view. Nobody trusts anyone else’s. Senior stakeholders stop asking for data because they cannot tell which dashboard to believe. This is a governance failure. An operating model defines which dashboards are authoritative and who maintains them.

Analysis paralysis. The team has access to everything and decides nothing. More data creates more uncertainty rather than less. This is a Layer 3 failure. Without a clear framework for what questions the data is being asked to answer, more data just creates more noise. Simplifying what you measure and why is not a step backward; it is often what makes analytics commercially useful for the first time.

Reporting without action. The weekly report goes out. Everyone nods. Nothing changes. This is a Layer 4 failure. Reporting and decision-making have been disconnected. The operating model needs to define explicitly which metrics trigger which decisions, and who has the authority to make them.

Who Should Own the Analytics Operating Model?

This question generates more internal politics than it should. In practice, the answer depends on the size and structure of the organisation, but the principle is consistent: the operating model should be owned by someone with both commercial accountability and sufficient technical credibility to be taken seriously by the people who maintain the data infrastructure.

In smaller organisations, that is often the head of marketing or a senior performance marketer who has grown into the role. In larger organisations, it may sit with a dedicated analytics or data function, but with a clear mandate from marketing leadership rather than existing as a purely technical service.

What does not work is shared ownership without clear accountability. “Everyone owns the data” is the same as no one owning it. When the numbers break, someone needs to be responsible for fixing them and for understanding why they broke. That requires a named owner, not a committee.

One of the better decisions I made when scaling an analytics function was separating the role of data steward from the role of analyst. The steward was responsible for the integrity and consistency of the data. The analyst was responsible for interpreting it and connecting it to commercial questions. Both roles existed in the same team, but the distinction meant that when something looked wrong, we knew exactly who to go to and what their job was.

How to Audit Your Current Analytics Operating Model

If you want to understand where your current model is working and where it is not, the fastest diagnostic is not a technical audit. It is a set of conversations.

Ask your team: if a key metric moved significantly this week, who would know first? Who would be responsible for understanding why? Who would make the decision about what to do? If those three questions produce three different answers that do not connect to each other, you have a gap in your operating model.
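The “who would know first?” question can be partly answered by an automated check that compares each week with its recent history and alerts a named owner when the movement is unusual. This sketch uses a simple z-score against trailing weeks; the two-standard-deviation limit, the example history and the owner handle are assumptions for illustration, and a real setup would also need to account for seasonality.

```python
import math
from statistics import mean, stdev


def check_metric(history: list[float], current: float, owner: str,
                 z_limit: float = 2.0):
    """Return an alert for `owner` if `current` sits more than `z_limit`
    sample standard deviations from the mean of `history`, else None."""
    if len(history) < 2:
        return None  # not enough history to judge
    mu, sigma = mean(history), stdev(history)
    if sigma:
        z = (current - mu) / sigma
    else:
        # Flat history: any change at all is unusual.
        z = 0.0 if current == mu else math.copysign(math.inf, current - mu)
    if abs(z) > z_limit:
        return {"owner": owner, "current": current, "expected": round(mu, 2), "z": round(z, 2)}
    return None


weekly_leads = [100, 104, 98, 102, 101, 99]  # hypothetical trailing weeks
print(check_metric(weekly_leads, 80, owner="a.steward"))
print(check_metric(weekly_leads, 101, owner="a.steward"))
```

Automating the first question does not answer the other two; it only guarantees that the person responsible for understanding the movement hears about it quickly.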

Then ask: what was the last commercial decision that was materially changed by something you saw in the data? If people struggle to answer, the issue is not data quality. The issue is that the data is not connected to decision-making in a meaningful way.

A more structured audit would look at each of the four layers: data collection integrity (are UTMs consistent, is internal traffic filtered, are key events tracked correctly), integration and flow (are systems connected, is there a single source of truth for revenue), interpretation (is there a clear process for moving from report to analysis), and decision (is there a defined path from insight to action with named owners and timelines).
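The structured audit can be kept as a simple checklist scored per layer, so the weakest layer is obvious and can be tackled first. The checks below are taken from the four layers described above; the scoring (share of checks answered “yes”, with unanswered checks counted as “no”) is a deliberately simple, hypothetical scheme.

```python
AUDIT = {
    "1. Collection and integrity": [
        "UTM conventions documented and followed",
        "Internal and bot traffic filtered",
        "Key events tracked and verified",
    ],
    "2. Integration and flow": [
        "Analytics connected to CRM",
        "Single source of truth for revenue",
    ],
    "3. Interpretation": [
        "Clear process from report to analysis",
    ],
    "4. Decision": [
        "Defined path from insight to action",
        "Named owners and timelines for decisions",
    ],
}


def weakest_layers(answers: dict[str, bool]) -> list[tuple[str, float]]:
    """Score each layer as the share of its checks answered True, lowest first.
    Checks missing from `answers` count as False."""
    scores = []
    for layer, checks in AUDIT.items():
        passed = sum(answers.get(check, False) for check in checks)
        scores.append((layer, passed / len(checks)))
    return sorted(scores, key=lambda item: item[1])


answers = {check: True for check in AUDIT["1. Collection and integrity"]}
answers["Analytics connected to CRM"] = True
for layer, score in weakest_layers(answers):
    print(f"{layer}: {score:.0%}")
```

A common pattern, and the one this hypothetical example reproduces, is a well-instrumented collection layer sitting on top of empty interpretation and decision layers.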

Web analytics platforms with expanded feature sets can surface new dimensions of user behaviour, but they only add value if the operating model around them is sound. Adding capability to a broken model makes the model more complex, not more effective.

Building the Model Without Starting From Scratch

Most teams cannot afford to pause everything and redesign their analytics infrastructure from the ground up. Nor should they. The operating model can be built incrementally, starting with the highest-value gaps.

Start with ownership. Assign named accountability for data integrity and for the connection between insight and decision. This costs nothing and changes the accountability dynamic immediately.

Then establish a single source of truth for your most commercially critical metric. Revenue, leads, or whatever matters most to the business. Get everyone aligned on one number from one place. That alone removes a significant amount of the noise that slows decisions down.

Then work outward from there. Fix the data collection issues that are corrupting the single source of truth. Build the integrations that give context to the primary metric. Establish the interpretation process that connects the data to commercial questions. Define the decision triggers that turn insight into action.

This is not a six-month project. Some of it can be done in weeks. The early wins build the credibility and the internal momentum to tackle the harder structural questions.

If you are working through the broader question of how analytics fits into your marketing function, the marketing analytics section of The Marketing Juice covers measurement frameworks, tooling decisions, and attribution models in more depth, all from the same commercially grounded perspective rather than the tool-first thinking that tends to dominate the space.

About the Author

Keith Lacy is a marketing strategist and former agency CEO with 20+ years of experience across agency leadership, performance marketing, and commercial strategy. He writes The Marketing Juice to cut through the noise and share what works.