Conversion Optimized: Stop Measuring Lifts, Start Measuring Revenue

A conversion-optimized website is one where every significant element, from headline to call-to-action, has been tested, refined, and validated against real user behaviour rather than internal assumptions. It does not mean a site with a high conversion rate. It means a site where the conversion rate reflects deliberate decisions, not accidents.

The distinction matters more than most teams realise. I have reviewed performance reports from agencies claiming conversion optimization success while the underlying revenue numbers told a different story entirely. A lift in micro-conversions is not the same as a lift in commercial output, and confusing the two is one of the most persistent problems in conversion rate optimization (CRO) practice today.

Key Takeaways

- A conversion-optimized site is defined by deliberate, validated decisions, not by a single headline conversion rate metric.

- Optimizing for the wrong conversion event is one of the most common and commercially damaging mistakes in CRO programs.

- Revenue per visitor is a more honest success metric than conversion rate alone, because it accounts for offer quality, pricing, and downstream value.

- Most CRO programs stall because they optimize the easy things rather than the high-leverage ones. Testing button colour is not a strategy.

- The teams that get conversion optimization right treat it as an ongoing commercial discipline, not a one-time project or a tool vendor’s feature set.

In This Article

- What Does “Conversion Optimized” Actually Mean in Practice?

- Why Conversion Rate Is a Misleading Primary Metric

- The Conversion Event Problem: Optimizing for the Wrong Thing

- The Elements That Actually Move Commercial Conversion

- Testing Discipline: Where Most Programs Fall Apart

- Personalization as a Conversion Lever: The Honest Version

- Building a Conversion Optimization Program That Sustains Itself

- The Organizational Conditions That Make CRO Work

What Does “Conversion Optimized” Actually Mean in Practice?

The phrase gets used loosely. An agency will describe a landing page as “conversion optimized” because it has a short form, a hero image, and a green CTA button. A tool vendor will call a template “conversion optimized” because it was built using design conventions that performed well in aggregate across thousands of other sites. Neither of these is wrong exactly, but neither is particularly meaningful either.

In practice, conversion optimized means something more specific: the page, flow, or experience has been systematically evaluated against your audience, your offer, and your commercial goal. Not someone else’s audience. Not a generalised benchmark. Yours.

When I was running iProspect, we inherited a client whose lead generation landing pages had been “optimized” by the previous agency. The pages looked clean. The form was short. The CTA was prominent. Conversion rate was sitting at around 4%, which the previous team had presented as a win against the industry average. What nobody had checked was lead quality. The sales team was closing fewer than 3% of those leads. The page was optimized for volume, not for the right kind of volume. We restructured the form’s qualification layer: conversion rate dropped to 2.8%, but the close rate tripled. Revenue went up. That is what conversion optimized actually looks like.
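The arithmetic behind that result is worth making explicit. Here is a minimal sketch, assuming an illustrative 1,000 visitors and treating “fewer than 3%” as exactly 3% (so the tripled close rate becomes 9%). These inputs are assumptions for illustration, not the client’s actual figures:

```python
def closed_deals(visitors: int, conversion_rate: float, close_rate: float) -> float:
    """Expected closed deals: visitors convert to leads, leads close to deals."""
    leads = visitors * conversion_rate
    return leads * close_rate


VISITORS = 1_000  # illustrative traffic volume, not the client's actual figure

# Before: ~4% form conversion; close rate assumed to be 3% (source says "fewer than 3%").
before = closed_deals(VISITORS, conversion_rate=0.04, close_rate=0.03)
# After: 2.8% form conversion; close rate tripled to an assumed 9%.
after = closed_deals(VISITORS, conversion_rate=0.028, close_rate=0.09)

print(f"before: {before:.2f} closed deals per {VISITORS:,} visitors")
print(f"after:  {after:.2f} closed deals per {VISITORS:,} visitors")
print(f"ratio:  {after / before:.1f}x")
```

Because the close rate tripled, the after-to-before ratio is (0.028 × 3) / 0.04 = 2.1 whatever the starting close rate actually was, so the conclusion does not hinge on the 3% assumption: a lower conversion rate produced roughly twice as many closed deals.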

If you want a broader grounding in how CRO fits into performance strategy, the conversion optimization hub on The Marketing Juice covers the full landscape, from testing methodology to commercial measurement frameworks.

Why Conversion Rate Is a Misleading Primary Metric

Conversion rate is easy to measure and easy to move. That is precisely why it attracts so much attention and why it misleads so many teams.

The number is a ratio: conversions divided by visitors. You can improve it by increasing conversions or by reducing the number of low-intent visitors reaching the page. Both approaches show up as a “higher conversion rate” in your dashboard. Only one of them is actually growing your business.

I have seen this play out with paid search campaigns more times than I can count. A team narrows audience targeting aggressively, filters out broad-match traffic, and tightens the funnel. Conversion rate climbs. The account manager presents this as a CRO win. What they have done is reduce reach and increase selection bias in the traffic pool. The page itself has not improved at all. The metric has moved because the denominator changed, not because the experience got better.
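To see how the denominator alone can move the number, here is a minimal sketch with hypothetical traffic figures (invented for illustration, not drawn from any account): targeting is tightened, half the visitors disappear along with a smaller share of the conversions, and the dashboard reports a higher rate on fewer sales.

```python
from dataclasses import dataclass


@dataclass
class Period:
    visitors: int
    conversions: int

    @property
    def conversion_rate(self) -> float:
        # Conversion rate is a ratio: conversions divided by visitors.
        return self.conversions / self.visitors


# Hypothetical figures, for illustration only.
broad = Period(visitors=10_000, conversions=200)  # before tightening targeting
narrow = Period(visitors=5_000, conversions=150)  # after filtering broad-match traffic

for label, period in (("broad", broad), ("narrow", narrow)):
    print(f"{label}: {period.conversion_rate:.1%} from {period.conversions} conversions")
```

The reported rate rises from 2.0% to 3.0% while total conversions fall by a quarter. Nothing about the page changed; only the traffic mix did.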

Revenue per visitor is a more honest metric. It combines conversion rate with average order value and accounts for the fact that a 5% conversion rate on a £20 product is not the same commercial outcome as a 2% conversion rate on a £200 product. If you are running a CRO program and you are not tracking revenue per visitor alongside conversion rate, you are measuring effort, not outcome.
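The comparison in that example is simple enough to sketch. This assumes revenue per visitor is computed as conversion rate multiplied by average order value, which is equivalent to total revenue divided by total visitors:

```python
def revenue_per_visitor(conversion_rate: float, average_order_value: float) -> float:
    """Revenue per visitor = conversion rate x average order value."""
    return conversion_rate * average_order_value


cheap = revenue_per_visitor(0.05, 20.0)     # 5% conversion on a £20 product
premium = revenue_per_visitor(0.02, 200.0)  # 2% conversion on a £200 product

print(f"£{cheap:.2f} vs £{premium:.2f} per visitor")
```

The product with the lower conversion rate generates four times the revenue per visitor, which is exactly the difference a conversion-rate-only dashboard hides.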

This is not a novel observation. The Moz Whiteboard Friday on CRO misconceptions makes a similar point about the gap between what teams measure and what actually drives commercial value. The problem is not that people do not know this. It is that dashboards default to conversion rate and nobody challenges the default.

The Conversion Event Problem: Optimizing for the Wrong Thing

Most CRO programs define their conversion event early and rarely revisit it. This is a structural problem. The conversion event you choose to optimize for shapes every test you run, every hypothesis you form, and every conclusion you draw. If the event is wrong, the entire program is wrong, regardless of how rigorous the methodology is.

Common examples of misaligned conversion events:

- Optimizing for form submissions when the business cares about qualified appointments

- Optimizing for trial sign-ups when the business cares about paid conversions from trial

- Optimizing for email captures when the email list has never been shown to convert to revenue

- Optimizing for add-to-cart rate when cart abandonment is the actual problem

- Optimizing for page engagement metrics when the goal is purchase

Each of these is a real pattern I have encountered in client work. The form submission example came from a B2B technology client where the sales team had a strict definition of a qualified lead that bore almost no resemblance to what the marketing team was optimizing the form to capture. The marketing team had been running CRO tests for eight months. They had increased form submission rate by 22%. The sales team’s pipeline had not moved.

The fix is not complicated, but it requires a conversation that most marketing teams avoid: sitting down with whoever owns the downstream commercial outcome and agreeing on what a real conversion looks like. Not a proxy. Not a leading indicator. The actual event that connects to revenue.
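One practical way to hold a program to the agreed event is to record both the proxy and the downstream outcome for every test variant and report them side by side. Here is a minimal sketch; the `VariantResult` structure, field names, and figures are hypothetical, chosen to mirror the pattern described above (submissions up 22%, qualified leads flat), and the `qualified` count assumes sales has already agreed what that word means:

```python
from typing import NamedTuple


class VariantResult(NamedTuple):
    name: str
    visitors: int
    submissions: int  # proxy event: form submissions
    qualified: int    # downstream event agreed with sales (hypothetical definition)

    def submission_rate(self) -> float:
        return self.submissions / self.visitors

    def qualified_rate(self) -> float:
        return self.qualified / self.visitors


def relative_lift(control: float, variant: float) -> float:
    """Relative change of the variant over the control."""
    return (variant - control) / control


# Hypothetical test results, for illustration only.
control = VariantResult("control", visitors=10_000, submissions=500, qualified=60)
variant = VariantResult("variant", visitors=10_000, submissions=610, qualified=60)

proxy_lift = relative_lift(control.submission_rate(), variant.submission_rate())
real_lift = relative_lift(control.qualified_rate(), variant.qualified_rate())

print(f"submission lift: {proxy_lift:+.0%}")
print(f"qualified lift:  {real_lift:+.0%}")
```

Reporting only the first line would look like a winning test. Reporting both makes it obvious that nothing commercially meaningful moved.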

For e-commerce, this is usually simpler. The purchase is the event. But even here, teams optimize for first-purchase conversion rate without accounting for return rate, customer lifetime value, or the difference in downstream value between product categories. A conversion-optimized checkout flow that drives high volumes of low-value, high-return transactions is not a commercial success, regardless of what the conversion rate dashboard says.
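Here is a minimal sketch of what accounting for returns does to the comparison. The `Checkout` structure and all figures are hypothetical, and the model deliberately ignores margin, fulfilment cost, and lifetime value; it only nets out refunded revenue:

```python
from dataclasses import dataclass


@dataclass
class Checkout:
    visitors: int
    orders: int
    average_order_value: float
    return_rate: float  # assumed share of order value refunded through returns

    def conversion_rate(self) -> float:
        return self.orders / self.visitors

    def gross_revenue_per_visitor(self) -> float:
        return self.orders * self.average_order_value / self.visitors

    def net_revenue_per_visitor(self) -> float:
        # Removes refunded value only; margin, fulfilment cost and LTV are ignored.
        return self.gross_revenue_per_visitor() * (1 - self.return_rate)


# Hypothetical flows, for illustration only.
high_volume = Checkout(visitors=10_000, orders=400, average_order_value=35.0, return_rate=0.45)
lower_volume = Checkout(visitors=10_000, orders=250, average_order_value=50.0, return_rate=0.08)

for label, flow in (("high-volume", high_volume), ("lower-volume", lower_volume)):
    print(
        f"{label}: CR {flow.conversion_rate():.1%}, "
        f"gross £{flow.gross_revenue_per_visitor():.2f}, "
        f"net £{flow.net_revenue_per_visitor():.2f} per visitor"
    )
```

Under these assumptions the high-volume flow wins on conversion rate and on gross revenue per visitor, then loses once returns are netted out.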

The Elements That Actually Move Commercial Conversion

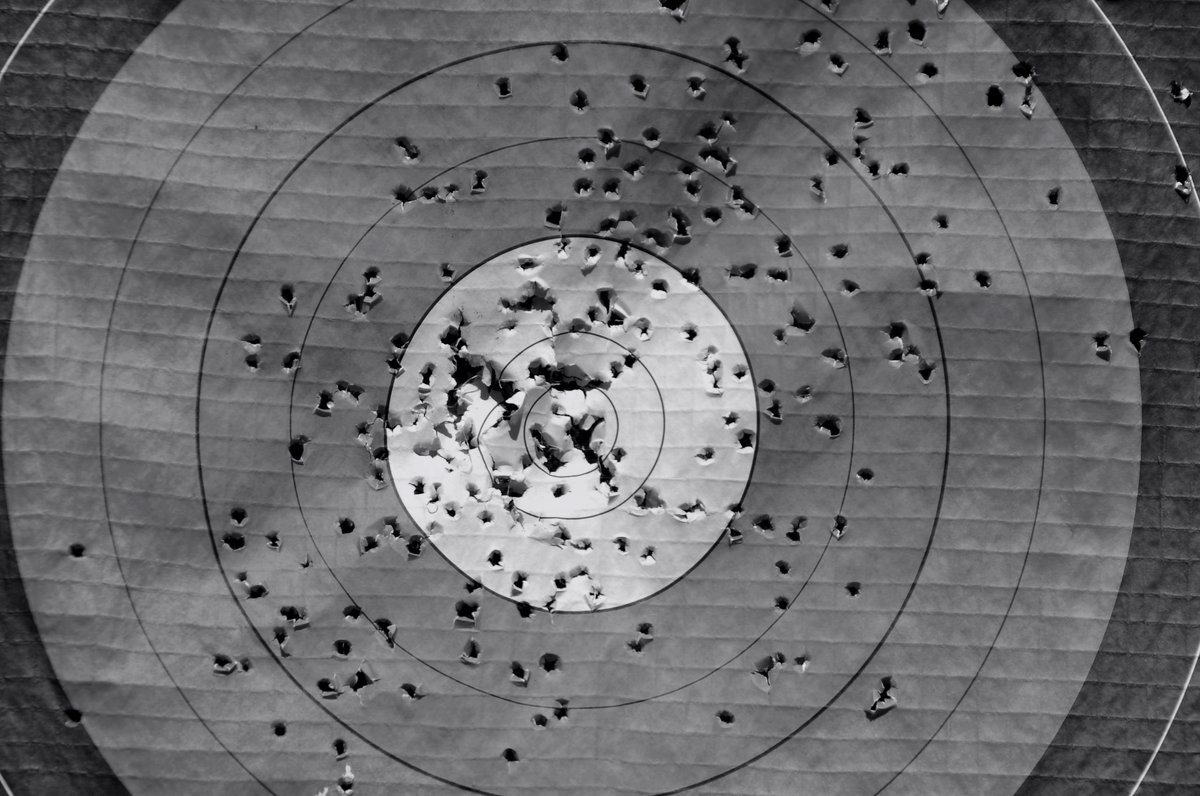

There is a hierarchy of impact in conversion optimization that most programs invert. Teams spend time on elements that are easy to test and easy to change, rather than on elements that have the most commercial leverage. The result is a lot of testing activity and very little revenue movement.

The elements with genuine commercial leverage, roughly in order of impact:

The Offer Itself

No amount of page optimization overcomes a weak offer. If the pricing is wrong, the trial terms are unattractive, or the value proposition does not resonate with the audience, the page will underperform regardless of how well it is designed. I have judged Effie Award entries where brands had invested heavily in conversion optimization while the fundamental offer had not been validated with the target audience. The optimization work was technically sound. The commercial outcome was poor. The offer was the problem.

Testing the offer, not just the page that presents it, is where the biggest conversion gains typically live. This means testing pricing structures, bundling, guarantee terms, and free trial versus freemium models. These tests are harder to run and the results are harder to interpret, which is exactly why most teams avoid them.

The Value Proposition and How It Is Communicated

The headline is the most tested element on most landing pages. It is also one of the highest-leverage elements, because it determines whether a visitor reads anything else. A headline that communicates a specific, credible benefit to a specific audience will outperform a generic headline almost every time. The Copyblogger analysis of the SEOmoz landing page contest, judged through live multivariate testing, remains one of the cleaner illustrations of how much headline framing moves conversion numbers.

The mistake teams make is treating headline testing as a copy exercise rather than a positioning exercise. The question is not “which words perform better?” It is “which version of our value proposition resonates most with this audience?” Those are different questions and they lead to different kinds of tests.

Friction in the Conversion Flow

Friction is anything that makes the conversion action harder than it needs to be: long forms, multi-step checkouts with unclear progress indicators, payment methods that do not match audience preferences, account creation requirements before purchase, and slow page loads on mobile. Each of these is a friction point with a measurable impact on conversion rate.

The CRO checklist from Crazy Egg covers the structural friction points worth auditing systematically. It is a useful starting framework, though the specific impact of each element will vary significantly by audience and context. Do not treat any checklist as a substitute for understanding your own data.

Friction reduction is often where the quick wins live. But there is a ceiling. Once you have removed the obvious friction, further gains require addressing deeper problems: trust, relevance, and offer quality.