Copy Testing in Advertising: What Most Brands Skip

Copy testing is the process of evaluating advertising creative before, during, or after a campaign to determine how well it communicates, resonates, and drives the intended response. Done properly, it reduces the risk of expensive creative decisions being made on gut feel alone, and it gives marketing teams a defensible basis for the choices they make.

Most brands either skip it entirely or run it as a box-ticking exercise. Both are expensive habits.

Key Takeaways

- Copy testing is not about finding the “safe” ad. It is about reducing the cost of being wrong at scale.

- Pre-testing and post-testing serve different purposes. Conflating them leads to poor decisions at both stages.

- Most copy tests measure recall and comprehension. Fewer measure the thing that actually matters: commercial intent.

- Qualitative and quantitative copy testing answer different questions. You need both, not one or the other.

- The most dangerous copy test result is a false positive: creative that scores well in testing but fails in market.

In This Article

- Why Copy Testing Gets Treated as Optional

- What Copy Testing Actually Measures

- Pre-Testing vs Post-Testing: Two Different Jobs

- Qualitative vs Quantitative Methods: The False Choice

- The False Positive Problem

- Where Digital Has Changed the Game

- Building a Copy Testing Process That Actually Gets Used

- Copy Testing and the Broader Creative Strategy

Why Copy Testing Gets Treated as Optional

Early in my career I watched a client spend a significant portion of their annual TV budget on a campaign that had never been tested with a single real consumer. The creative team loved it. The account team loved it. The client signed it off after one internal presentation. It ran for six weeks and moved nothing. When the post-campaign analysis came back flat, everyone found a reason why the media plan was to blame.

That pattern repeats itself constantly across the industry. Copy testing gets treated as optional because it adds time, costs money, and occasionally produces results that nobody wants to hear. There is also a cultural problem: many creative teams treat testing as a threat to their craft rather than a service to the brief. Both instincts are understandable. Neither is commercially defensible.

The deeper issue is that most marketing teams do not have a clear framework for what they are testing, why they are testing it, or what they will do with the results. Copy testing without a decision framework is just expensive research that gets filed and forgotten.

What Copy Testing Actually Measures

There is a tendency to treat copy testing as a single activity. It is not. Different testing methods measure different things, and choosing the wrong method for the question you are trying to answer will give you data that is technically accurate and commercially useless.

The core dimensions most copy tests are designed to assess fall into a few categories:

- Comprehension: Does the audience understand what the ad is saying? This sounds basic. It is not. Ads that are internally obvious to the team that made them are frequently opaque to the people they are aimed at.

- Recall: Does the audience remember the ad, and more importantly, do they remember the brand? Recall without brand linkage is one of the most common and expensive failures in advertising.

- Resonance: Does the message connect emotionally or rationally with the target audience? This is where qualitative methods tend to outperform quantitative ones.

- Persuasion: Does exposure to the ad shift purchase intent, brand preference, or consideration? This is the metric most directly connected to commercial outcomes, and it is the one most frequently underweighted in standard copy testing protocols.

- Distinctiveness: Does the creative stand out in the context where it will actually appear? An ad that tests well in isolation can disappear in a cluttered media environment.

The mistake most teams make is optimising for recall and comprehension while treating persuasion as a secondary concern. Recall is easy to measure and easy to report. Persuasion is harder to isolate and harder to defend in a presentation. But persuasion is the one that connects to revenue.

If you are building or refining your broader go-to-market approach, the principles behind effective copy testing connect directly to how you structure market entry and commercial positioning. The Go-To-Market and Growth Strategy hub covers those foundations in more depth.

Pre-Testing vs Post-Testing: Two Different Jobs

Pre-testing happens before a campaign goes live. Post-testing happens after. They are not interchangeable, and treating them as variations of the same activity is one of the more common structural errors I see in how marketing teams approach research.

Pre-testing is a risk management tool. Its job is to identify creative that is likely to fail before you spend money running it at scale. It is not designed to predict exact performance. It is designed to catch the obvious problems: the message that does not land, the brand that does not register, the tone that alienates the audience you are trying to reach. When I was running agencies, pre-testing was the conversation I had to have with clients who were confident in their creative but had not spoken to a single customer about it. The resistance was always the same. The justification for doing it anyway was always the same: it is cheaper to find out now than after the media buy.

Post-testing is a learning tool. Its job is to understand what actually happened in market, why it happened, and what you carry forward into the next campaign. Post-testing done well builds institutional knowledge. Post-testing done badly produces a report that explains why the metrics were not your fault.

The brands that get the most value from copy testing are the ones that treat pre-testing and post-testing as a connected loop, not two separate research projects. Pre-testing sets a hypothesis. Post-testing validates or challenges it. Over time, that loop produces a genuinely useful body of knowledge about what works for that brand with that audience in that category.

Qualitative vs Quantitative Methods: The False Choice

The debate about whether copy testing should be qualitative or quantitative has been running for decades. It is largely a false choice, and the teams that pick a side and stick to it tend to end up with blind spots they do not know they have.

Quantitative methods, such as monadic testing, paired comparison, and forced-exposure surveys, give you scale and statistical confidence. You can run them with large samples, segment by audience, and produce numbers that hold up in a boardroom. Their weakness is that they tell you what people said, not why they said it. A message can score highly on comprehension because it is simple to the point of being generic. A message can score poorly on persuasion because the test environment does not replicate the context in which the ad would actually be seen.

Qualitative methods, such as focus groups, depth interviews, and ethnographic approaches, give you texture and nuance. They tell you how people talk about the message, what associations it triggers, and where the friction is. Their weakness is obvious: small samples, potential for group dynamics to distort responses, and results that are harder to defend to a finance director who wants a number.

The practical answer is sequencing. Use qualitative methods early to explore how the message lands and to surface issues you had not anticipated. Use quantitative methods to validate at scale once you have addressed the obvious problems. That sequence costs more than running a single method. It also produces better decisions, which is the point.

The BCG framework on commercial transformation in go-to-market strategy makes a related point about the cost of poor decision quality in marketing. The investment in better research upstream consistently outperforms the cost of fixing poor creative decisions downstream.

The False Positive Problem

The most dangerous copy test result is not a bad score. It is a good score on the wrong metric.

I have judged the Effie Awards, which means I have spent time looking at campaigns that were designed to drive commercial outcomes and assessing whether they actually did. One thing that becomes clear when you look at enough of that evidence is that there is a meaningful gap between ads that test well and ads that perform well in market. The gap is not random. It tends to cluster around a specific failure mode: creative that is memorable and likeable but does not connect the brand to a reason to buy.

This is the false positive problem. An ad can score highly on recall, comprehension, and even emotional resonance while doing very little to shift commercial intent. If your copy testing protocol is not explicitly measuring the connection between the message and a purchase-relevant outcome, you can walk out of a research debrief feeling confident about creative that will underperform in market.

The fix is not complicated. It requires adding a persuasion measure to your testing protocol and being honest about what the results mean. “People liked it” and “people are more likely to buy because of it” are different findings. Treat them as such.
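To make the difference between those two findings concrete, here is a minimal sketch in Python. It assumes a hypothetical test design with an exposed group and a matched control group, and a simple "positive answer" count for each question. The function names and all the numbers are invented for illustration, not taken from any real study.

```python
def top_box_rate(positive: int, total: int) -> float:
    """Share of respondents who gave a positive answer to a question."""
    if total <= 0:
        raise ValueError("total must be positive")
    return positive / total


def lift_in_points(exposed_rate: float, control_rate: float) -> float:
    """Absolute lift in percentage points: exposed group minus control group."""
    return (exposed_rate - control_rate) * 100


# Hypothetical survey counts (illustrative only, not real data).
control = {"n": 400, "intends_to_buy": 120}                    # did not see the ad
exposed = {"n": 400, "liked_ad": 312, "intends_to_buy": 124}   # saw the ad

likeability = top_box_rate(exposed["liked_ad"], exposed["n"])
intent_lift = lift_in_points(
    top_box_rate(exposed["intends_to_buy"], exposed["n"]),
    top_box_rate(control["intends_to_buy"], control["n"]),
)

print(f"Likeability among exposed: {likeability:.0%}")
print(f"Purchase-intent lift vs control: {intent_lift:+.1f} points")
```

In this made-up example the ad is liked by most of the people who saw it, yet purchase intent barely moves against the control group. That is the shape a false positive tends to take when only the first number is reported.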

Where Digital Has Changed the Game

Digital advertising has made certain kinds of copy testing faster and cheaper. It has also created new ways to misread the results.

A/B testing at the ad level is now standard practice for most performance marketing teams. You run two versions of a headline or a visual, measure click-through rate or conversion rate, and scale the winner. That process is genuinely useful. It is also, on its own, an incomplete picture of what your copy is doing.
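The comparison step in that process can be sketched as a two-proportion z-test. This is one common way to check whether a gap in click-through rate is likely to be noise, not necessarily what any particular ad platform does internally. The function name and the click counts below are hypothetical.

```python
import math


def two_proportion_z_test(conv_a: int, n_a: int, conv_b: int, n_b: int):
    """Two-sided z-test for the difference between two conversion rates.

    Returns (z, p_value). A positive z means variant B converted at a
    higher rate than variant A.
    """
    p_a = conv_a / n_a
    p_b = conv_b / n_b
    # Pooled rate under the null hypothesis that both variants perform equally.
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        raise ValueError("standard error is zero; rates are all 0% or all 100%")
    z = (p_b - p_a) / se
    # Two-sided p-value from the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


# Hypothetical results: headline A vs headline B, 10,000 impressions each.
z, p_value = two_proportion_z_test(conv_a=200, n_a=10_000, conv_b=260, n_b=10_000)
print(f"z = {z:.2f}, p = {p_value:.4f}")
```

A small p-value only says the click-through gap is unlikely to be chance. It says nothing about which headline does more for the brand over time.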

The problem I spent years working through in performance marketing is the attribution gap. When I was managing large-scale paid search and social budgets, the temptation was to over-credit the last touchpoint and under-credit everything that had built the conditions for that click to convert. The ad that wins a direct response A/B test is not necessarily the ad that is building brand preference over time. Those two objectives sometimes pull in different directions, and optimising exclusively for the measurable one can quietly erode the less measurable one.

This connects to a broader point about how performance marketing is measured. Much of what gets credited to lower-funnel activity was going to happen anyway. A person who was already in the market and already considering your brand was likely to convert with or without the perfectly optimised ad copy. The harder and more commercially important question is whether your creative is building the audience that will be in market next quarter. That question is much harder to answer with a click-through rate.

Forrester’s research on go-to-market struggles across categories points to a consistent pattern: teams that optimise exclusively for in-market signals tend to underinvest in the brand-building work that creates those signals in the first place.

Building a Copy Testing Process That Actually Gets Used

The most common failure mode in copy testing is not methodological. It is organisational. Teams design a testing process that nobody follows because it adds three weeks to a timeline that was already tight, costs money that was not in the original budget, and produces findings that arrive after the creative has already been signed off.

When I was growing agencies, the testing processes that stuck were the ones that were designed around how decisions actually got made, not around how they were supposed to get made. That meant building testing into the brief stage rather than the production stage. It meant agreeing upfront on what a “pass” looks like before the results come in, so that the findings could not be reinterpreted after the fact. And it meant keeping the process lean enough that it did not become the thing everyone found a reason to skip when the schedule got tight.

A few principles that hold across different types of organisations and campaign types:

- Agree on the decision criteria before testing begins. What score on what metric would lead you to revise the creative? If you cannot answer that question before the research runs, the research will be used to confirm whatever the team already believes.

- Test in context where possible. An ad that is tested in isolation will behave differently from an ad that is seen in a feed, on a billboard, or in a commercial break. The closer your test environment is to the real environment, the more useful the results.

- Keep the sample representative, not just large. A large sample of the wrong audience is worse than a small sample of the right one. Copy testing with a panel that does not reflect your actual target market produces findings that are statistically strong and commercially irrelevant.

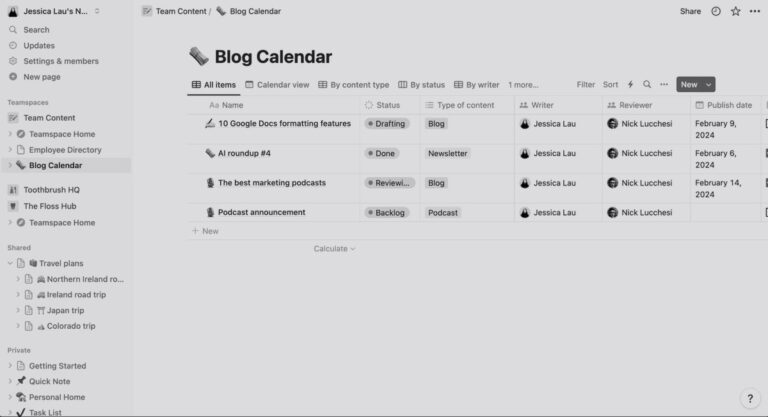

- Document what you learn. The brands that build genuine creative effectiveness over time are the ones that treat each copy test as a contribution to a growing body of knowledge, not a one-off project. That knowledge compounds. Teams that start from scratch on every campaign pay for the same lessons repeatedly.
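The first principle, agreeing the decision criteria before testing begins, can be made hard to fudge by writing the thresholds down in a form that is fixed before fieldwork starts. A minimal sketch in Python follows. The metric names, thresholds, and results are all hypothetical, and a real protocol would choose its own.

```python
from dataclasses import dataclass


@dataclass(frozen=True)  # frozen: thresholds cannot be edited after the fact
class Criterion:
    metric: str
    minimum: float  # pass mark agreed before the research runs


def evaluate(criteria, results):
    """Return the metrics that missed their pre-agreed minimum."""
    failures = []
    for criterion in criteria:
        if criterion.metric not in results:
            raise KeyError(f"no result reported for {criterion.metric!r}")
        if results[criterion.metric] < criterion.minimum:
            failures.append(criterion.metric)
    return failures


# Hypothetical pass marks, signed off at the brief stage.
CRITERIA = (
    Criterion("brand_linkage", 0.50),
    Criterion("comprehension", 0.70),
    Criterion("purchase_intent_lift_pts", 3.0),
)

# Hypothetical test results arriving later.
results = {
    "brand_linkage": 0.62,
    "comprehension": 0.81,
    "purchase_intent_lift_pts": 1.0,
}

failures = evaluate(CRITERIA, results)
print("PASS" if not failures else f"REVISE: {', '.join(failures)}")
```

The point of the sketch is the ordering, not the code: the pass marks exist before the results do, so a weak persuasion score cannot be explained away by strong recall.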

The Semrush overview of market penetration strategy is a useful reference point here. The connection between how you communicate to existing audiences versus how you reach new ones is directly relevant to how you design copy tests, because the creative that works for penetration is often different from the creative that works for retention.

Copy Testing and the Broader Creative Strategy

Copy testing is not a substitute for creative strategy. It is a check on it. The distinction matters because there is a version of copy testing that becomes a mechanism for producing the most inoffensive possible advertising: creative that nobody hates, that scores adequately on every metric, and that nobody remembers three days after seeing it.

I have seen this happen in large organisations where the testing process had effectively become a veto mechanism for anything that felt risky. Every sharp edge got sanded off in research. Every distinctive idea got moderated into something safer. The result was advertising that was technically competent and commercially invisible.

The antidote is not to abandon testing. It is to be clear about what you are testing for and to hold the commercial objective as the primary filter. Distinctive, effective advertising can score poorly on certain qualitative measures, particularly with audiences who are not the target. If you are running copy tests on the wrong audience, or weighting the wrong metrics, you will consistently optimise toward the mean.

When I think about the campaigns that have genuinely moved commercial outcomes, the ones that I have seen evidence for across the brands and agencies I have worked with, they were rarely the ones that tested most safely. They were the ones that had a clear, specific, commercially relevant message aimed at a precisely defined audience, and that were tested against the right criteria from the start.

Creator-led campaigns, which are increasingly relevant to how brands reach new audiences, present their own copy testing challenges. The Later webinar on go-to-market with creators covers some of the nuances around testing creative that is built for authenticity rather than broadcast polish. The principles are the same; the execution looks different.

Understanding how copy testing fits into a broader commercial strategy is one of the areas covered across the Go-To-Market and Growth Strategy hub, alongside demand generation, positioning, and how to structure marketing investment for growth rather than just activity.

About the Author

Keith Lacy is a marketing strategist and former agency CEO with 20+ years of experience across agency leadership, performance marketing, and commercial strategy. He writes The Marketing Juice to cut through the noise and share what works.