Customer Insight Hubs: One Source of Truth From Many Sources of Data

A customer insight hub is a centralised platform that aggregates behavioural, transactional, attitudinal, and third-party data into a single environment where marketing, product, and commercial teams can draw consistent conclusions. The goal is not to collect more data. The goal is to stop making decisions from five different dashboards that each tell a slightly different story.

Most organisations already have the raw material. They have CRM data, web analytics, survey responses, social listening outputs, support ticket themes, and sales call notes sitting in separate systems, interpreted by separate teams, producing separate and often contradictory pictures of the customer. A customer insight hub is the infrastructure that changes that.

Key Takeaways

- A customer insight hub consolidates data from multiple sources into one environment, but its value comes from interpretation and decision-making, not from the aggregation itself.

- The most common failure mode is building a technically impressive hub that nobody uses because it was designed for analysts, not for the commercial teams who need to act on it.

- Qualitative signals, including support tickets, sales call themes, and customer verbatims, are systematically underweighted in most insight architectures despite being the most diagnostically useful.

- Insight hubs only create competitive advantage when they shorten the time between a customer signal and a commercial decision. If the data sits in a platform and nobody changes anything, the hub is a cost, not an asset.

- Platform selection matters far less than governance. Who owns the insight? Who can challenge it? Who has the authority to act on it?

In This Article

- Why Most Companies Already Have an Insight Problem, Not a Data Problem

- What Sources Should Feed a Customer Insight Hub?

- How Customer Insight Hubs Actually Work in Practice

- The Qualitative Data Problem Nobody Wants to Talk About

- What the Insight Hub Should Actually Produce

- Common Failure Modes Worth Knowing Before You Build

- Choosing a Platform: What to Prioritise

- The Competitive Advantage Is in the Speed of the Feedback Loop

Why Most Companies Already Have an Insight Problem, Not a Data Problem

When I was running an agency and we onboarded a new client, one of the first things I would do was ask to see how they currently understood their customers. The answer was almost always the same. There would be a brand tracking study commissioned eighteen months ago, a Google Analytics account that nobody had properly configured, a Net Promoter Score reported quarterly by the CX team, and a sales director who had strong opinions about what customers wanted based on the accounts he personally managed.

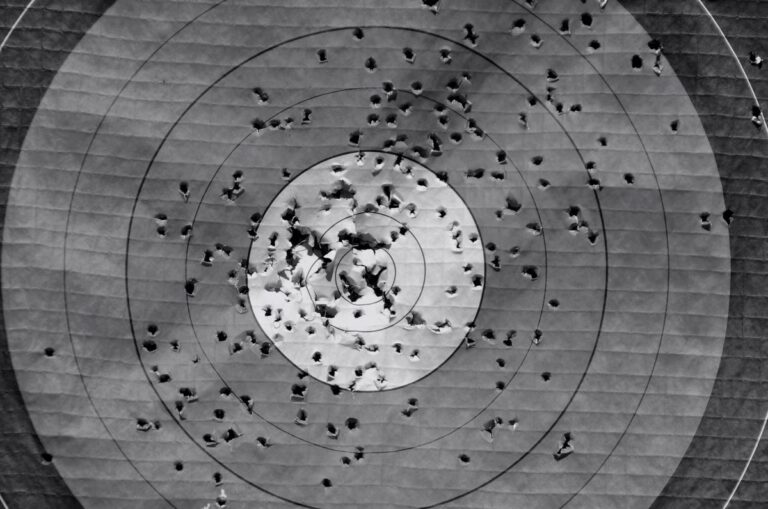

None of these were wrong. All of them were incomplete. And because they lived in separate parts of the organisation, nobody had ever reconciled them. The brand team believed one thing about customer sentiment. The performance team believed something different because the conversion data said something different. The sales team believed something else entirely. Everyone was right about their own slice and wrong about the whole.

This is the actual problem a customer insight hub is designed to solve. Not data scarcity. Data fragmentation.

If you are thinking through how insight infrastructure fits into a broader commercial growth strategy, the Go-To-Market and Growth Strategy hub covers the wider landscape of how organisations connect customer understanding to market execution.

What Sources Should Feed a Customer Insight Hub?

The answer depends on your business model, but there is a core set of source categories that most organisations should be pulling from. The mistake is treating this as a purely technical question. The more useful question is: what decisions do we need to make, and what data would make those decisions less speculative?

Behavioural data is the foundation. This includes web and app analytics, purchase history, product usage patterns, and email engagement. It tells you what customers actually do, which is frequently different from what they say they do. Behavioural data is high volume and relatively easy to collect, which is why it tends to dominate most insight environments at the expense of everything else.

Transactional data from your CRM or e-commerce platform gives you the commercial picture: what was bought, when, at what price, with what frequency, and with what lifetime trajectory. When this is connected to behavioural data, you can start to understand which behaviours predict high-value customers and which predict churn. Disconnected, it just tells you what happened, not why.
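To make the connection between behavioural and transactional data concrete, here is a minimal sketch in Python. Everything in it is an assumption for illustration: the records, the field names (`customer_id`, `used_feature_x`, `churned`), and the function `churn_rate_by_behaviour` are hypothetical and do not come from any particular CRM or analytics tool. It only shows the shape of the join, not a production pipeline.

```python
from collections import defaultdict

# Hypothetical sample records; field names are illustrative only.
behaviour = [
    {"customer_id": "c1", "used_feature_x": True},
    {"customer_id": "c2", "used_feature_x": True},
    {"customer_id": "c3", "used_feature_x": False},
    {"customer_id": "c4", "used_feature_x": False},
]
transactions = [
    {"customer_id": "c1", "churned": False},
    {"customer_id": "c2", "churned": False},
    {"customer_id": "c3", "churned": True},
    {"customer_id": "c4", "churned": False},
]


def churn_rate_by_behaviour(behaviour, transactions, flag):
    """Join both sources on customer_id and return the churn rate per flag value."""
    churned_by_id = {t["customer_id"]: t["churned"] for t in transactions}
    totals = defaultdict(int)
    churned = defaultdict(int)
    for row in behaviour:
        customer_id = row["customer_id"]
        if customer_id not in churned_by_id:
            continue  # no transactional record, so this customer cannot be joined
        totals[row[flag]] += 1
        churned[row[flag]] += int(churned_by_id[customer_id])
    return {value: churned[value] / totals[value] for value in totals}


rates = churn_rate_by_behaviour(behaviour, transactions, "used_feature_x")
print(rates)
```

With real data the difference between the groups would need a proper significance check, and a gap like this suggests where to look rather than proving that the behaviour causes retention.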

Attitudinal data comes from surveys, NPS programmes, customer interviews, focus groups, and review platforms. This is where you find out what customers think and feel, which is often the missing context that makes behavioural data interpretable. A customer who stops purchasing might show up as a churn signal in your transactional data. The attitudinal data might tell you it was a pricing issue, a product quality issue, or a service experience that could have been recovered.

Qualitative signal data is the most underused category in most organisations. This includes support ticket themes, sales call transcripts, live chat logs, and social media verbatims. These sources are messy, unstructured, and difficult to quantify, which is exactly why they tend to get deprioritised. But they are also where the most diagnostically useful information lives. When I have been in situations where we needed to understand why a product was underperforming, the answer was almost never in the dashboards. It was in what customers were actually saying to the people they spoke to.

Third-party and market data provides the external context. This includes competitive intelligence, category search trend data, and tools that track share of voice or pricing dynamics. Platforms that aggregate search and competitive signals can surface early indicators of category shifts that internal data alone would miss. The limitation is that third-party data tells you about the market, not about your specific customers, so it needs to be interpreted alongside internal sources rather than treated as a standalone signal.

How Customer Insight Hubs Actually Work in Practice

The technology architecture varies significantly depending on whether you are building something custom or using a dedicated platform. At one end of the spectrum, you have enterprise customer data platforms (CDPs) that handle identity resolution, data ingestion, and segmentation at scale. At the other end, you have organisations that have effectively built their own hub using a combination of a data warehouse, a BI tool, and a shared folder of survey outputs with a strong analyst in the middle holding it together.

Both approaches can work. Both can also fail. The technology is not the determining factor.

What determines whether a customer insight hub creates value is the governance model around it. Who is responsible for maintaining data quality? Who has the authority to challenge an insight that contradicts the prevailing commercial view? Who translates the outputs into decisions that someone actually implements?

I have seen organisations invest heavily in insight infrastructure and then watch it become a reporting function rather than a decision-making function. The hub produces outputs. The outputs get presented in quarterly reviews. The quarterly reviews produce action points. The action points get deprioritised. Nothing changes. The hub continues to produce outputs. This is not a technology failure. It is a governance failure.

The organisations that get genuine value from insight hubs tend to have a few things in common. They have a clear owner for the insight function, not just the platform. They have defined the decisions the hub is supposed to inform, rather than treating it as a general-purpose analytics environment. And they have created a culture where acting on customer data is expected, not optional.

The Qualitative Data Problem Nobody Wants to Talk About

There is a structural bias in most insight architectures toward data that is easy to quantify and easy to report. Web analytics data is clean, timestamped, and visualisable. Survey data can be scored and trended. Transactional data produces clear revenue numbers. All of this is genuinely useful. But it creates a systematic blind spot.

The things customers say in their own words, in support conversations, in sales calls, in social comments, in product reviews, are frequently the most diagnostic signals available to a business. They tell you not just what happened but why, and often what would need to change for the customer to behave differently. Quantitative data can tell you that 23% of customers who trialled a product did not convert. Qualitative data can tell you they all thought the onboarding was confusing and the pricing page was unclear.

One of the more useful things that has happened in the last few years is the development of tools that can process unstructured qualitative data at scale, using AI to surface themes, sentiment patterns, and emerging issues from large volumes of text. This does not replace the need for human interpretation, but it does make it more practical to include qualitative sources in an insight hub rather than treating them as a separate, informal input.

Feedback and behavioural analytics platforms have moved in this direction, combining session data with on-site survey responses to give a richer picture of what is happening and why. The principle is sound regardless of which tool you use: quantitative data tells you the what, qualitative data tells you the why, and you need both to make good decisions.
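A small sketch of what pairing the what with the why can look like, assuming the qualitative feedback has already been tagged with themes by a person or a text analysis tool. The records, field names, theme labels, and the function `what_and_why` are all hypothetical, and the numbers are made up for illustration.

```python
from collections import Counter

# Hypothetical trial outcomes (the quantitative "what").
trials = [
    {"customer_id": "c1", "converted": True},
    {"customer_id": "c2", "converted": False},
    {"customer_id": "c3", "converted": False},
    {"customer_id": "c4", "converted": True},
]
# Hypothetical feedback already tagged with themes (the qualitative "why").
feedback = [
    {"customer_id": "c2", "themes": ["onboarding confusing", "pricing unclear"]},
    {"customer_id": "c3", "themes": ["onboarding confusing"]},
    {"customer_id": "c4", "themes": ["pricing unclear"]},
]


def what_and_why(trials, feedback):
    """Return the non-conversion rate and the themes raised by non-converters."""
    if not trials:
        return 0.0, []
    non_converters = {t["customer_id"] for t in trials if not t["converted"]}
    rate = len(non_converters) / len(trials)
    themes = Counter(
        theme
        for item in feedback
        if item["customer_id"] in non_converters
        for theme in item["themes"]
    )
    return rate, themes.most_common()


rate, themes = what_and_why(trials, feedback)
print(f"{rate:.0%} of trial customers did not convert")
for theme, count in themes:
    print(f"  {theme}: {count}")
```

The theme counts only cover customers who left feedback, so they are a prompt for human interpretation rather than a measurement of the whole non-converting group.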

What the Insight Hub Should Actually Produce

This is where a lot of organisations lose the thread. They build the hub, connect the sources, and then measure success by the quality of the dashboards rather than the quality of the decisions. The output of an insight hub is not a report. It is a commercial decision informed by customer evidence.

In practical terms, this means the hub should be able to answer questions like: which customer segments are growing and which are contracting? What are the primary reasons customers choose us over alternatives, and are those reasons consistent with how we are positioning ourselves? Where in the customer experience are we creating friction that reduces lifetime value? Which acquisition channels are bringing in customers who actually stay?

These are not analytics questions. They are business questions that happen to be answerable with data. The distinction matters because it changes how you design the hub. If you design it to produce dashboards, you will get dashboards. If you design it to answer specific business questions, you will get decisions.

BCG’s work on aligning marketing and commercial functions makes a related point: the organisations that extract the most value from customer data are those where insight is treated as a shared commercial resource rather than a function owned by one team. When the insight hub belongs to marketing, sales ignores it. When it belongs to data science, marketing finds it inaccessible. The most effective setups create shared ownership with clear accountability.

Common Failure Modes Worth Knowing Before You Build

Having watched a number of these initiatives from the inside, I have found the failure patterns to be fairly consistent.

Building for analysts instead of decision-makers. The hub gets designed by people who understand data, for people who understand data. The commercial leaders who need to act on the outputs find it too complex or too granular to be useful in the context of their actual work. Usage drops. The hub becomes a tool for reporting rather than for decision-making.

Treating integration as the finish line. Getting all the data sources connected is a significant technical achievement. It is also not the point. I have seen organisations spend twelve months on integration and then have no plan for what to do with the integrated data. The integration is infrastructure. The insight is what you build on top of it.

Ignoring data quality at source. A hub that aggregates bad data from multiple sources does not produce better insight. It produces confidently presented bad insight, which is worse than having no hub at all because it gives decision-makers false confidence. Before connecting sources, it is worth auditing whether those sources are actually reliable. CRM data that sales teams have not maintained properly is a common culprit.

No mechanism for the insight to change anything. This is the governance failure I described earlier. The hub produces outputs. The outputs are interesting. Nothing happens. If there is no defined process for insight to reach the people who can act on it, and no expectation that those people will act on it, the hub is an expensive reporting exercise.

Forrester’s analysis of intelligent growth models identifies a similar pattern: organisations that invest in customer intelligence but fail to embed it in commercial decision-making processes consistently underperform those that do, regardless of the sophistication of the technology they are using.
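Going back to the point about auditing data quality at source, a basic audit can be sketched in a few lines. This is an illustration under stated assumptions: the CRM records, the field names, the one-year staleness threshold, and the function `audit_source` are all hypothetical, and a real audit would also look at duplicates, invalid values, and inconsistent formats.

```python
from datetime import date

# Hypothetical CRM export; field names and values are illustrative only.
crm_records = [
    {"customer_id": "c1", "email": "a@example.com", "segment": "SMB",
     "last_updated": date(2024, 5, 1)},
    {"customer_id": "c2", "email": "", "segment": "SMB",
     "last_updated": date(2022, 1, 10)},
    {"customer_id": "c3", "email": "c@example.com", "segment": None,
     "last_updated": date(2024, 4, 20)},
    {"customer_id": "c4", "email": "d@example.com", "segment": "Enterprise",
     "last_updated": date(2021, 11, 3)},
]


def audit_source(records, required_fields, as_of, max_age_days):
    """Report the share of records with missing fields and the share not updated recently."""
    if not records:
        raise ValueError("cannot audit an empty source")
    total = len(records)
    missing_share = {
        field: sum(1 for r in records if not r.get(field)) / total
        for field in required_fields
    }
    stale_share = sum(
        1 for r in records if (as_of - r["last_updated"]).days > max_age_days
    ) / total
    return {"missing_share": missing_share, "stale_share": stale_share}


report = audit_source(
    crm_records,
    required_fields=["email", "segment"],
    as_of=date(2024, 6, 1),
    max_age_days=365,  # assumed threshold; choose one that fits your sales cycle
)
print(report)
```

What counts as an acceptable share of missing or stale records is a judgement call for each source. The useful part is having the number in front of you before the source is connected, not after.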

Choosing a Platform: What to Prioritise

Platform selection is a downstream decision. The upstream decisions are: what data sources do you need to connect, what questions do you need to answer, and who needs to use the outputs? Once those are clear, the platform choice becomes more straightforward because you are evaluating against specific requirements rather than general capabilities.

For organisations with relatively mature data infrastructure and dedicated analytics resource, a composable approach using a data warehouse, a transformation layer, and a BI tool on top can give you more flexibility than a pre-packaged CDP. The trade-off is implementation complexity and ongoing maintenance.

For organisations that need faster time to value and do not have the internal technical resource to build and maintain a custom stack, a purpose-built customer insight platform makes more sense. The trade-off is usually cost and the constraints of working within a vendor’s data model.

The growth hacking and analytics tool landscape has expanded considerably, and real-world examples of how companies have used data platforms to drive growth are worth reviewing before committing to a direction. The patterns that work tend to involve tight feedback loops between customer signals and commercial decisions, regardless of the specific tools involved.

What I would caution against is selecting a platform because it is impressive in a demo. The platforms that demo best tend to be the ones that collect the most data and produce the most dashboards. Neither of those things is the same as producing better decisions. Evaluate on the basis of whether the outputs are usable by the people who need to use them, not on the basis of feature counts.

The Competitive Advantage Is in the Speed of the Feedback Loop

There is a version of customer insight infrastructure that is a hygiene factor. You need to understand your customers at a basic level to run a functional marketing operation. Most organisations have this, imperfectly.

The version that creates genuine competitive advantage is different. It is not about having more data or better dashboards. It is about shortening the time between a customer signal and a commercial response. A business that can detect a shift in customer behaviour or sentiment in days and adjust its approach accordingly has a structural advantage over a business that detects the same shift in the next quarterly review.
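If the advantage is in the speed of the loop, it helps to measure the loop. The sketch below assumes you keep a simple log of when each customer signal was detected and when a commercial decision was made in response. The log entries, field names, and the function `feedback_loop_summary` are hypothetical and the dates are invented for illustration.

```python
from datetime import date
from statistics import median

# Hypothetical signal log; "decided" is None when nobody has acted yet.
signals = [
    {"signal": "spike in pricing complaints",
     "detected": date(2024, 3, 1), "decided": date(2024, 3, 4)},
    {"signal": "drop in repeat purchase rate",
     "detected": date(2024, 3, 10), "decided": date(2024, 4, 9)},
    {"signal": "onboarding confusion in support tickets",
     "detected": date(2024, 3, 15), "decided": None},
]


def feedback_loop_summary(signals):
    """Return the median days from signal to decision and the count of unactioned signals."""
    lags = [
        (s["decided"] - s["detected"]).days
        for s in signals
        if s["decided"] is not None
    ]
    return {
        "median_days_to_decision": median(lags) if lags else None,
        "unactioned": sum(1 for s in signals if s["decided"] is None),
    }


summary = feedback_loop_summary(signals)
print(summary)
```

The unactioned count matters as much as the median. A short median built only from the signals somebody chose to act on can hide the ones that never reached a decision-maker at all.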

Vidyard’s research on pipeline and revenue potential for go-to-market teams points to a consistent theme: the organisations leaving the most revenue on the table are those with slow or fragmented feedback loops between customer engagement signals and commercial team responses. The insight hub is one part of fixing that. The other part is the culture and process that makes acting on signals the default rather than the exception.

I spent time early in my career at an agency where we were genuinely close to client customers because the work demanded it. When we got it right, it was because the insight was specific, recent, and connected to a decision someone had the authority to make. When we got it wrong, it was usually because the insight was general, dated, or disconnected from the people who could act on it. The technology has changed considerably since then. The underlying dynamic has not.

If you want to go deeper on how customer insight connects to market positioning, channel strategy, and commercial planning, the Go-To-Market and Growth Strategy hub covers those intersections in detail.

About the Author

Keith Lacy is a marketing strategist and former agency CEO with 20+ years of experience across agency leadership, performance marketing, and commercial strategy. He writes The Marketing Juice to cut through the noise and share what works.