Generative AI in Analytics: What It Can Do and Where It Falls Short

Generative AI in analytics refers to the application of large language models and other generative systems to the interpretation, summarisation, and communication of data. Instead of requiring analysts to write queries, build dashboards, or translate raw numbers into narrative, these tools can do that work on demand, in plain English, in seconds.

The practical upshot is that more people in a business can ask questions of their data without needing a data team to mediate every request. That is genuinely useful. But the gap between what these tools promise and what they reliably deliver is still wide enough to matter, and senior marketers should understand both sides before committing budget or workflow to them.

Key Takeaways

- Generative AI in analytics speeds up the translation of data into narrative, but it does not improve the quality of the underlying data or the questions being asked of it.

- The biggest commercial value is in reducing analyst bottlenecks, not in generating insight that experienced analysts could not produce themselves.

- AI-generated analysis inherits all the biases and gaps in your data model, and presents them with the same confidence as accurate findings.

- The organisations getting the most from these tools have invested in data infrastructure first, and are using AI to surface what is already well-structured.

- Generative AI changes who can access analytics, not what good analysis looks like. That distinction matters for how you deploy it.

In This Article

- What Does Generative AI Actually Do in an Analytics Context?

- Where Does It Actually Reduce Friction for Marketing Teams?

- What Are the Limitations That Vendors Will Not Lead With?

- How Should Marketing Leaders Think About Integrating These Tools?

- What Does Good Look Like in Practice?

- Is the Hype Ahead of the Reality?

There is a broader conversation happening about where AI delivers genuine commercial value in marketing versus where it adds theatre without substance. If you want the wider context, the AI Marketing hub at The Marketing Juice covers the full landscape, from tools and infrastructure through to strategy and measurement.

What Does Generative AI Actually Do in an Analytics Context?

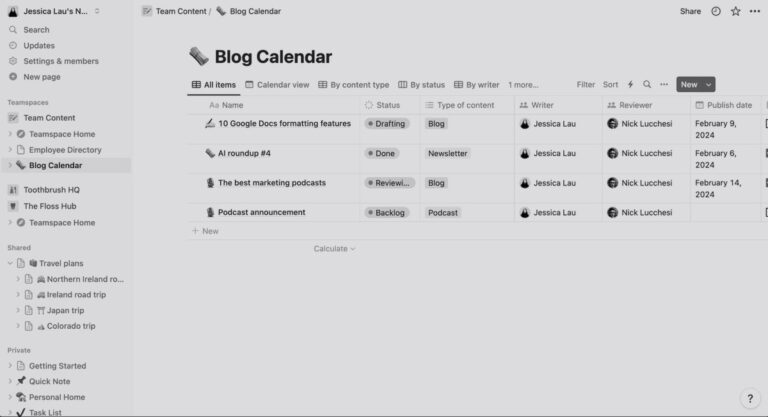

The core capability is natural language querying. You type a question, the system interprets it, pulls from a connected data source, and returns an answer in plain English, often with a chart or table attached. Tools like Microsoft Copilot in Power BI, Google’s Gemini in Looker (previously branded Duet AI), and a growing number of standalone products are all built around this interaction model.

Beyond querying, generative AI can summarise dashboards, flag anomalies, generate written commentary on performance data, and draft the kind of weekly reporting narrative that used to eat two hours of a junior analyst’s time every Monday morning. That last one is not trivial. I have run agencies where the reporting burden alone was consuming meaningful analyst capacity, capacity that could have been spent on actual interpretation rather than formatting numbers into slides.

There is also a generation layer that goes further: tools that can propose hypotheses about why a metric has changed, suggest segments to investigate, or recommend next steps based on observed patterns. This is where the marketing gets louder and the reality gets murkier, because these capabilities are highly dependent on data quality, model training, and the specificity of the business context the tool has access to.

For a grounding view of where AI optimisation tools currently sit in the market, Semrush’s overview of AI optimisation tools is worth reading alongside vendor material, because it gives you a more measured picture of what is production-ready versus what is still largely aspirational.

Where Does It Actually Reduce Friction for Marketing Teams?

The clearest win is access. In most organisations I have worked with, data access has been gated by analyst availability. A marketing director wants to know whether last week’s campaign drove incremental revenue or just captured demand that would have converted anyway. Getting that answer has historically meant raising a request, waiting for capacity, and receiving a response three days later when the decision has already been made on gut feel.

Generative AI changes that dynamic. When it works well, it puts exploratory querying in the hands of the person who needs the answer, at the moment they need it. That is a genuine operational improvement, not a marginal one.

The second area is reporting automation. Pulling together weekly or monthly performance commentary is one of the most time-consuming and least intellectually demanding tasks in a marketing analytics function. Generative AI handles it competently. It will not write the commentary that changes how the board thinks about the business, but it will produce a serviceable first draft that someone with context can refine in ten minutes rather than build from scratch in two hours.

Third, and less discussed, is onboarding. When new people join a team and need to get up to speed on historical performance, generative AI tools that are connected to your data can dramatically shorten that ramp. Instead of sitting through three weeks of dashboard walkthroughs, a new analyst can interrogate the data directly and build their own mental model of the business faster.

What Are the Limitations That Vendors Will Not Lead With?

The first limitation is data quality dependency. Generative AI does not improve your data. It surfaces what is there, and it does so fluently and confidently, which is exactly the problem when what is there is incomplete, inconsistently tagged, or structurally flawed. I have sat in rooms where a beautifully formatted AI-generated summary was presented as insight, when anyone who knew the underlying data model would have recognised immediately that the numbers were comparing unlike things.

The confidence of the output does not correlate with its accuracy. That is a significant risk in organisations where the people consuming the analysis do not have the technical background to interrogate it. A junior marketer asking a generative AI tool why conversion rate dropped last week may get a plausible-sounding answer that is directionally wrong, because the tool is pattern-matching on available signals rather than understanding causality.
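One way a fluent answer can end up directionally wrong is a mix shift: the blended conversion rate falls even though no individual channel performed worse, simply because more traffic came from a lower-converting channel. The sketch below shows the arithmetic. The channel names and figures are invented for illustration and are not drawn from any real account.

```python
# Hypothetical figures, invented purely for illustration.
# Each channel maps to (sessions, conversions) for the week.
last_week = {"paid_search": (10_000, 500), "paid_social": (10_000, 100)}
this_week = {"paid_search": (10_000, 500), "paid_social": (30_000, 300)}


def channel_rates(week):
    """Conversion rate for each channel on its own."""
    return {name: conv / sessions for name, (sessions, conv) in week.items()}


def blended_rate(week):
    """Overall conversion rate across all channels combined."""
    total_sessions = sum(sessions for sessions, _ in week.values())
    total_conversions = sum(conv for _, conv in week.values())
    return total_conversions / total_sessions


# Per-channel rates are identical in both weeks (5% and 1%).
print(channel_rates(last_week))
print(channel_rates(this_week))

# The blended rate still falls, because the traffic mix changed.
print(f"{blended_rate(last_week):.1%} -> {blended_rate(this_week):.1%}")
```

In this made-up case the headline rate moves from 3.0% to 2.0% while both channels convert exactly as before. A tool that only sees the blended number could plausibly report that "conversion performance declined", when the more accurate reading is that the traffic mix changed.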

The second limitation is context. Generative AI tools do not know your business. They do not know that your November spike is always driven by a single enterprise renewal, not organic demand. They do not know that your paid social numbers look flat because you shifted budget to a channel that is tracked differently. They do not know that the metric your CEO cares about is a proxy that your team has quietly agreed is unreliable. That institutional knowledge lives in people, and it is not something you can upload to a model.

Third, there is the question of what questions get asked. Generative AI is reactive. It answers the questions you think to ask. The most valuable analytical work I have seen in 20 years of marketing has consistently come from analysts who noticed something that nobody thought to ask about, who spotted the anomaly in a metric that was not on anyone’s dashboard, who made a connection between two data sets that had never been looked at together. That kind of proactive, curious, structurally creative thinking is not what these tools do.

HubSpot has a useful piece on generative AI risks that, while focused on security, raises broader questions about data governance and trust that apply directly to analytics use cases.

How Should Marketing Leaders Think About Integrating These Tools?

Start with the infrastructure question, not the tool question. The organisations I have seen get the most from generative AI in analytics are the ones that already had clean, well-governed data. They were not using AI to fix a data problem. They were using it to make a functioning data environment more accessible. If your data is messy, adding a generative AI layer on top will not clean it. It will make the mess harder to see.

When I was building out the analytics function at a performance agency, one of the first things we did was audit what data we actually trusted versus what we were reporting because we had always reported it. That audit was uncomfortable, but it was the foundation that made everything else useful. Generative AI tools would have made that audit harder to do, not easier, because they would have continued to surface the untrustworthy data with the same confident presentation as the reliable data.

The second consideration is role design. Generative AI in analytics changes what analysts spend their time on, but it does not eliminate the need for analytical thinking. The risk is that organisations see these tools as a headcount reduction opportunity rather than a productivity multiplier. In my experience, the teams that cut analytical capacity on the back of AI adoption tend to discover, six months later, that the quality of their decision-making has degraded in ways that are difficult to attribute directly but are very real.

What you want is analysts who are freed from the mechanical work of data extraction and report formatting, and who can spend more time on interpretation, hypothesis generation, and the kind of qualitative context that no AI tool currently provides. That is a different job description, not a smaller headcount.

Third, build in verification habits from the start. When a generative AI tool produces an insight, the default response should not be to accept it and act on it. The default response should be to ask what data it is drawing on, whether that data is reliable, and whether the interpretation makes sense given what you know about the business. That sounds obvious, but in practice, the fluency of AI-generated output creates a psychological tendency to treat it as authoritative. Building a culture where people interrogate AI outputs the same way they would interrogate any other analysis is not easy, but it is necessary.
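For teams that want to make the first two of those questions routine, they can be written down as a simple pre-review check. What follows is a minimal sketch, not a feature of any particular tool: the `InsightSource` fields, the table name, and the thresholds are all assumptions chosen for illustration, and each team would need to set its own.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class InsightSource:
    """Hypothetical description of the data behind an AI-generated answer."""
    table: str
    last_refreshed: date
    trusted: bool          # has the team audited and signed off this source?
    missing_share: float   # fraction of rows missing the fields the answer relies on


def review_flags(source, today, max_age_days=2, max_missing=0.05):
    """Return the reasons an insight should be held for human review."""
    flags = []
    if not source.trusted:
        flags.append(f"{source.table} has not been audited as a trusted source")
    age = (today - source.last_refreshed).days
    if age > max_age_days:
        flags.append(f"{source.table} was last refreshed {age} days ago")
    if source.missing_share > max_missing:
        flags.append(f"{source.missing_share:.0%} of rows are missing required fields")
    return flags


# Illustrative values only.
source = InsightSource("paid_social_daily", date(2024, 3, 1), trusted=False, missing_share=0.12)
for flag in review_flags(source, today=date(2024, 3, 8)):
    print("CHECK:", flag)
```

A check like this only covers what data is being used and whether it is reliable. Whether the interpretation makes sense given what you know about the business is still a judgment for a person with context.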

What Does Good Look Like in Practice?

The best implementations I have seen share a few characteristics. They have a clear use case, not a general ambition to “use AI in analytics.” They have data infrastructure that is already reasonably clean. They have human oversight built into the workflow, not bolted on as an afterthought. And they have set expectations internally about what the tool is for and what it is not for.

A practical example: a marketing team that uses generative AI to produce the first draft of its weekly performance commentary, which a senior analyst then reviews, contextualises, and signs off before it goes to the leadership team. That is a sensible use of the technology. The AI handles the mechanical work. The human adds the judgment. The output is faster and the analyst’s time is spent on the part that actually requires expertise.

Compare that to a team that uses generative AI to answer ad hoc strategic questions without any human review, and where the outputs feed directly into budget decisions. That is a higher-risk configuration, and the risk is not theoretical. I have seen decisions made on the back of AI-generated analysis that turned out to be based on a misinterpretation of the data model, and the cost of those decisions was not small.

The Semrush piece on future trends in AI optimisation software is worth reading for a sense of where the tooling is heading, particularly around autonomous analysis and real-time decision support. The trajectory is toward more autonomy, which makes the governance question more important, not less.

Is the Hype Ahead of the Reality?

In most organisations, yes. Not because the technology lacks capability, but because the conditions required for it to deliver on its promise are not yet in place. Clean data, clear use cases, human oversight, and a culture of analytical rigour are prerequisites, not nice-to-haves. And in most marketing functions, at least one of those prerequisites is missing.

That said, the direction of travel is clear. The tools are improving quickly, the integration with existing data platforms is getting tighter, and the cost of access is falling. The question is not whether generative AI will be a meaningful part of marketing analytics in three years. It will be. The question is whether your organisation is investing in the foundations that will let you use it well, or whether you are buying the tool before you have done the groundwork.

Early in my career, I asked for budget to build a new website and was told no. So I taught myself to code and built it. The lesson I took from that was not that resourcefulness always beats budget. It was that understanding the underlying mechanics of a tool gives you a fundamentally different relationship with it than treating it as a black box. The same principle applies here. Marketers who understand what generative AI is actually doing with their data, and where its reasoning can break down, will use it better than those who treat it as an oracle.

Ahrefs has been running a useful series of AI tools webinars that take a similarly grounded view of where AI is genuinely useful versus where it is still catching up to the marketing around it. Worth bookmarking if you are tracking this space seriously.

For more on how AI is reshaping marketing practice beyond analytics, including where the genuine commercial opportunities are and where the noise outweighs the signal, the AI Marketing section of The Marketing Juice covers the territory in depth.

About the Author

Keith Lacy is a marketing strategist and former agency CEO with 20+ years of experience across agency leadership, performance marketing, and commercial strategy. He writes The Marketing Juice to cut through the noise and share what works.