Cross-Department Alignment Is Your Cheapest CRO Lever

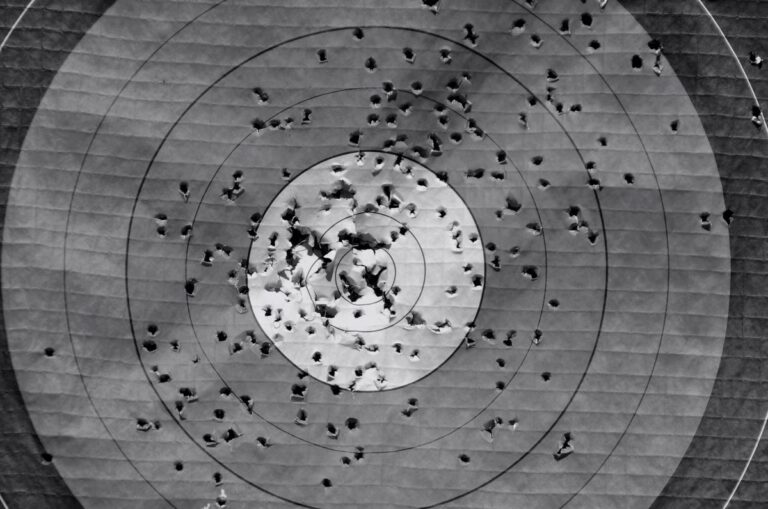

Cross-department alignment improves sales by removing the friction that sits between your marketing funnel and your revenue line. When sales, marketing, product, and customer success operate from the same data, the same definitions, and the same goals, conversion rates improve not because you changed a button colour but because the entire customer experience becomes coherent.

Most CRO programmes focus on the page. The bigger opportunity is usually in the organisation.

Key Takeaways

- Misalignment between departments is a conversion problem, not just an operational one. Disconnected teams create friction that kills deals before CRO tools can measure it.

- A shared definition of a qualified lead is worth more than most A/B tests. When sales and marketing disagree on what good looks like, no amount of landing page optimisation closes that gap.

- Page-level CRO captures the last few percentage points. Structural alignment across departments captures the larger losses that happen earlier and go untracked.

- Speed of follow-up is one of the most measurable and most neglected conversion variables. It lives in sales operations, not in your CRO tool.

- The teams closest to customer objections (sales and support) hold the insight that makes CRO testing smarter. Most organisations never formally route that intelligence back to marketing.

In This Article

- What Does Cross-Department Alignment Actually Mean in a CRO Context?

- Why Sales and Marketing Misalignment Is a Conversion Problem, Not Just a Culture Problem

- The Conversion Losses That Happen Between Departments

- How Customer-Facing Teams Hold the Insight That Makes CRO Smarter

- What Aligned Teams Actually Do Differently

- The Measurement Problem That Misalignment Creates

- Where to Start If Your Organisation Is Not Aligned

I spent years running agencies where the performance team and the creative team operated as if they were in different companies. The performance team wanted faster landing pages and tighter copy. The creative team wanted brand consistency and considered design. Neither was wrong. But the lack of a shared framework meant we were optimising against each other instead of against the market. Conversion rates were acceptable. They should have been excellent.

What Does Cross-Department Alignment Actually Mean in a CRO Context?

It means that every team touching the customer experience, from the first ad impression to the post-sale onboarding email, is working from the same understanding of what success looks like, what the customer needs at each stage, and what the data is telling them.

In practice, this is rare. Most organisations have marketing defining success as leads generated, sales defining success as deals closed, and product defining success as features shipped. These are not the same thing, and when they diverge, the customer feels it.

The core principles of conversion rate optimisation have always included understanding the customer’s intent and removing barriers to action. But the barriers that matter most are often not technical. They are organisational. A slow sales follow-up. An onboarding process that contradicts the marketing promise. A pricing page that sales has already discounted verbally before the prospect even clicks.

If you want a broader view of how conversion optimisation fits into commercial strategy, the CRO and Testing hub covers the full picture, from testing methodology to funnel structure to the measurement traps that distort decision-making.

Why Sales and Marketing Misalignment Is a Conversion Problem, Not Just a Culture Problem

The sales and marketing alignment conversation has been happening for thirty years. It usually gets framed as a cultural issue: two teams that do not communicate well, do not trust each other’s numbers, and do not attend the same meetings. That framing is accurate but incomplete.

The conversion cost of misalignment is concrete and measurable. Marketing sends leads to sales that sales considers unqualified. Sales ignores them. Marketing reports strong lead volume. Sales reports a weak pipeline. Both are telling the truth about their own metrics. Neither is telling the truth about the business.

I ran a turnaround at an agency that had exactly this problem embedded in its reporting structure. The marketing team was hitting its lead targets every month. The sales team was missing its revenue targets every quarter. When I sat both teams down and asked them to define a qualified lead, I got four different answers in the room. We had been optimising the funnel based on a definition that sales had never agreed to. Every landing page test, every form optimisation, and every bid adjustment was pointed at the wrong target. Fixing the definition was worth more than six months of A/B testing.

A shared lead definition is not a soft operational nicety. It is the foundation on which all conversion data sits. Without it, your CRO programme is optimising a metric that does not map to revenue.

The Conversion Losses That Happen Between Departments

Most CRO tools are excellent at measuring what happens on a page. They are not designed to measure what happens between teams. That gap is where a significant share of conversion loss sits.

Consider the handoff from marketing to sales. A prospect fills in a form. Marketing records a conversion. What happens next is almost entirely outside the CRO tool’s visibility. How quickly does sales follow up? What is the quality of that first contact? Does the sales script align with what the ad promised? Does the salesperson know which campaign the lead came from, and what that implies about their intent?

Speed of follow-up is one of the most consistently underestimated conversion variables in B2B. The difference between contacting a lead within five minutes and contacting them within five hours is not marginal. It is often the difference between a live prospect and a cold one. That variable lives entirely in sales operations, not in your CRO platform.
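As a rough illustration of how follow-up speed could be measured, here is a minimal Python sketch. It assumes you can export two timestamps per lead, the form submission and the first sales contact. The sample records, the `follow_up_summary` function, and the five-minute default threshold are illustrative assumptions, not data or output from any particular CRM.

```python
from datetime import datetime, timedelta
from statistics import median

# Hypothetical export: (form submitted, first sales contact) per lead.
# None means sales never contacted the lead.
handoffs = [
    (datetime(2024, 1, 8, 9, 0), datetime(2024, 1, 8, 9, 3)),
    (datetime(2024, 1, 8, 10, 0), datetime(2024, 1, 8, 15, 0)),
    (datetime(2024, 1, 8, 11, 0), None),
    (datetime(2024, 1, 8, 12, 0), datetime(2024, 1, 8, 12, 20)),
]


def follow_up_summary(records, threshold=timedelta(minutes=5)):
    """Summarise how quickly leads were contacted after submitting a form."""
    # Delay between submission and first contact, for contacted leads only.
    delays = [
        contacted - submitted
        for submitted, contacted in records
        if contacted is not None
    ]
    within = sum(1 for delay in delays if delay <= threshold)
    return {
        "leads": len(records),
        "never_contacted": len(records) - len(delays),
        "median_minutes": (
            median(d.total_seconds() / 60 for d in delays) if delays else None
        ),
        # Share of ALL leads reached inside the threshold, so leads that
        # were never contacted count against the result.
        "share_within_threshold": within / len(records) if records else 0.0,
    }


summary = follow_up_summary(handoffs)
print(summary)
```

Counting uncontacted leads in the denominator is a deliberate choice in this sketch: a team that answers half its leads instantly and ignores the rest should not look fast.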

The same logic applies to the handoff from sales to product or customer success. If a customer buys based on a promise that the product cannot yet deliver, churn follows. That churn feeds back into lifetime value, which feeds back into the economics of acquisition. The conversion problem and the retention problem are the same problem viewed from different ends of the funnel.

Understanding what bounce rate actually measures is a useful reminder that metrics only capture a partial view of behaviour. The same caveat applies to conversion rate. It measures a click or a form submission. It does not measure whether the promise was kept.

How Customer-Facing Teams Hold the Insight That Makes CRO Smarter

The people who know the most about why prospects do not convert are not in your analytics platform. They are in your sales team and your customer support function. They hear the objections. They see the hesitation. They know which questions come up on every call and which promises land flat.

Most organisations never formally route that intelligence back to marketing or to the CRO programme. Sales calls it anecdotal. Marketing calls it noise. Both are wrong. It is the richest qualitative signal available, and it costs nothing to collect if the process exists to capture it.

When I was growing an agency from around twenty people to close to a hundred, one of the structural decisions that paid off most was creating a standing monthly session where account managers, sales leads, and the performance team sat in the same room and went through what clients were actually saying. Not what the dashboards said. What people said. The insight that came out of those sessions shaped our landing page strategy, our ad copy, and our offer structure more than any test we ran in isolation.

The right approach to CRO has always involved understanding the customer before touching the page. The fastest route to that understanding is the team that talks to customers every day. Building a formal process to extract and use that knowledge is not complicated. It is just rarely prioritised.

Heatmaps and session recordings add another layer to this. Behavioural data from tools like Hotjar can show you where attention drops off on a page, but it cannot tell you why a prospect who looked engaged still did not buy. That answer is usually in a sales call transcript, not a scroll map.

What Aligned Teams Actually Do Differently

Alignment is not a meeting cadence. It is a set of shared practices that produce better commercial decisions. Here is what it looks like when it is working.

First, there is a single agreed definition of a qualified lead, reviewed and updated when market conditions change. Marketing is not optimising for volume against a definition that sales has already quietly abandoned.

Second, the sales team has visibility into which campaigns and messages drove each lead. This is not just a reporting nicety. It changes the conversation a salesperson has with a prospect. If you know someone came through a specific campaign about a specific pain point, you lead with that. If you are flying blind, you run a generic discovery process and lose the momentum the marketing already built.

Third, objections and drop-off reasons are captured systematically and fed back into content, landing page, and offer strategy. This closes the loop between what marketing assumes customers care about and what customers actually care about.

Fourth, product and customer success are included in the conversation about what is being promised in marketing. This sounds obvious. In most organisations, it does not happen. Marketing writes the promise. Sales amplifies it. Product ships something that partially delivers on it. Customer success manages the gap. The gap is a conversion problem for every future customer who talks to someone who has already experienced it.

Page-level improvements (faster load times, cleaner forms, stronger calls to action) still matter. Page speed has a direct relationship with conversion rate, and it is worth fixing. But those improvements operate at the margin when the structural alignment is broken. Fix the structure first.

The Measurement Problem That Misalignment Creates

One of the quieter consequences of departmental misalignment is that it corrupts measurement. When teams are not sharing data or definitions, each team optimises its own metrics and reports its own version of performance. The organisation ends up with a collection of accurate-but-incomplete pictures and no coherent view of what is actually working.

I have judged marketing effectiveness awards and reviewed hundreds of case studies. The ones that impress are almost never the ones with the cleverest tactic. They are the ones where the organisation has a clear line of sight from marketing activity to business outcome, and where every team involved is telling the same story from the same data. That coherence is not accidental. It is structural.

The measurement problem also affects testing. If your A/B tests are measuring form completions but your sales team is closing a different percentage of leads from each variant, you may be optimising for the wrong outcome. A variant that generates more leads but lower-quality leads is not a winner. You only know that if sales data is connected to marketing test data, which requires the two teams to be working from the same system and the same definitions.
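The point about variants can be made concrete with a small Python sketch. It assumes test results can be joined to CRM outcomes so that each variant carries a closed-deal count alongside its form completions. The `VariantResult` class, the `winner` helper, and all of the numbers are made up for illustration and do not come from a real test.

```python
from dataclasses import dataclass


@dataclass
class VariantResult:
    """One A/B variant with its marketing and sales outcomes (hypothetical)."""
    name: str
    visitors: int
    form_completions: int
    closed_deals: int

    @property
    def form_rate(self) -> float:
        # What the CRO tool typically reports.
        return self.form_completions / self.visitors

    @property
    def deals_per_visitor(self) -> float:
        # What the business actually cares about.
        return self.closed_deals / self.visitors


def winner(results, metric):
    """Return the name of the variant with the highest value for `metric`."""
    return max(results, key=lambda r: getattr(r, metric)).name


# Illustrative numbers only.
results = [
    VariantResult("A", visitors=1000, form_completions=50, closed_deals=10),
    VariantResult("B", visitors=1000, form_completions=80, closed_deals=8),
]

print("Winner on form completions:", winner(results, "form_rate"))
print("Winner on closed deals:", winner(results, "deals_per_visitor"))
```

With these invented figures, variant B wins on form completions and variant A wins on closed deals per visitor. A real comparison would also need enough closed deals per variant for the difference to be meaningful, which this sketch does not check.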

Multivariate testing and behavioural tools like heatmaps add depth to conversion analysis, but they are only as useful as the outcome metric they are connected to. If that metric stops at the form submission and does not follow the lead through to closed revenue, you are measuring activity, not performance.

Where to Start If Your Organisation Is Not Aligned

The temptation when diagnosing conversion problems is to start with the page. That is the wrong place to start if the underlying problem is organisational.

Start with the data. Pull your lead-to-close rate by source, by campaign, and by lead type. If you cannot do that because the data is not connected, that is your first finding. The inability to answer a basic commercial question about which marketing activity produces closed revenue is itself a symptom of misalignment.
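If the data is connected, the calculation itself is simple. The sketch below is one possible way to do it in plain Python, assuming each lead record already carries both its marketing attributes and its sales outcome. The field names (`source`, `campaign`, `lead_type`, `closed_won`), the sample records, and the `lead_to_close_rate` function are hypothetical.

```python
from collections import defaultdict

# Hypothetical lead records joined from marketing and CRM data.
leads = [
    {"source": "paid_search", "campaign": "pricing_pain",
     "lead_type": "demo_request", "closed_won": True},
    {"source": "paid_search", "campaign": "pricing_pain",
     "lead_type": "demo_request", "closed_won": False},
    {"source": "paid_search", "campaign": "brand",
     "lead_type": "ebook", "closed_won": False},
    {"source": "webinar", "campaign": "q1_webinar",
     "lead_type": "ebook", "closed_won": False},
    {"source": "webinar", "campaign": "q1_webinar",
     "lead_type": "demo_request", "closed_won": True},
]


def lead_to_close_rate(records, keys):
    """Return {group: closed-won share} with leads grouped by `keys`."""
    totals = defaultdict(int)
    won = defaultdict(int)
    for record in records:
        group = tuple(record[key] for key in keys)
        totals[group] += 1
        if record["closed_won"]:
            won[group] += 1
    return {group: won[group] / totals[group] for group in totals}


by_source = lead_to_close_rate(leads, ["source"])
by_campaign = lead_to_close_rate(leads, ["source", "campaign"])
by_lead_type = lead_to_close_rate(leads, ["lead_type"])

for group, rate in sorted(by_campaign.items()):
    print(group, f"{rate:.0%}")
```

The hard part is rarely this arithmetic. It is getting `closed_won` onto the same record as `campaign` in the first place, which is the alignment problem described above.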

Then talk to sales. Not a survey. A conversation. Ask them what they hear from prospects. Ask them which leads they consider worth their time and which they do not. Ask them what marketing could do differently that would make their job easier. You will hear things that no analytics tool will ever surface.

Then look at the handoff process. How does a lead move from marketing to sales? How quickly? With what information attached? What happens to leads that sales does not immediately contact? These are process questions, not technology questions. They have process answers.

Reducing bounce rate and improving engagement are worth pursuing once the structural issues are addressed. Bounce rate is a legitimate conversion lever, but reducing it is most valuable when the traffic that stays is the traffic you want, which is a targeting and messaging question that lives upstream of the page.

There is no shortcut here. The organisations that convert best are the ones where the commercial function operates as a connected system, not a collection of departments that hand off to each other and hope for the best. Building that system takes time, requires senior sponsorship, and involves some uncomfortable conversations about whose metrics have been misleading everyone. It is worth it.

For more on how conversion optimisation connects to wider commercial performance, the CRO and Testing hub covers testing strategy, funnel measurement, and the common mistakes that undermine otherwise sound programmes.

About the Author

Keith Lacy is a marketing strategist and former agency CEO with 20+ years of experience across agency leadership, performance marketing, and commercial strategy. He writes The Marketing Juice to cut through the noise and share what works.