Digital Transformation and the Customer Experience Gap

Digital transformation is reshaping customer experience by giving businesses the tools to understand, anticipate, and respond to customers faster and more precisely than was possible a decade ago. But the gap between companies that are genuinely improving experience through digital investment and those that are just digitising old problems is wider than most boardrooms want to admit.

The technology is rarely the hard part. What separates the businesses getting real returns from digital transformation is how they connect new capabilities to actual customer outcomes, not just internal efficiency metrics.

Key Takeaways

- Digital transformation only improves customer experience when it is built around customer needs, not internal process optimisation dressed up as CX investment.

- Personalisation at scale is now a baseline expectation, not a differentiator. The gap is in execution quality, not ambition.

- Omnichannel consistency matters more than any single channel being exceptional. Customers notice friction between touchpoints before they notice polish within them.

- Data is only valuable if it changes decisions. Most businesses collect far more than they act on, which creates cost without creating experience improvement.

- Speed of response across digital channels has become a primary driver of customer satisfaction, often outweighing the quality of the response itself.

In This Article

- What Does Digital Transformation Actually Mean for Customer Experience?

- Why Personalisation Has Become the Core Battleground

- How Digital Tools Are Changing the Speed of Service

- The Data Problem Nobody Wants to Talk About

- Omnichannel Consistency: Where Most Transformations Fall Short

- How to Measure Whether Digital Transformation Is Actually Improving CX

- The Businesses Getting This Right

I spent years watching clients invest heavily in digital transformation programmes that produced impressive internal dashboards and almost no visible change in how customers felt about the brand. The pattern was consistent: technology selected before the problem was properly defined, implementation measured by delivery milestones rather than customer outcomes, and CX treated as a downstream benefit rather than the primary design constraint. If you want to understand what genuinely effective customer experience looks like across the full spectrum, the Customer Experience hub covers the strategic and operational dimensions in detail.

What Does Digital Transformation Actually Mean for Customer Experience?

The phrase gets used to cover everything from replacing a legacy CRM to rebuilding an entire service model around digital-first interactions. That breadth is part of the problem. When transformation means everything, it is hard to measure whether it is working.

For customer experience specifically, digital transformation tends to operate across three layers. The first is visibility: the ability to see what customers are doing, where they are dropping off, what they are asking, and how they are feeling about interactions. The second is responsiveness: the ability to act on that information quickly, whether through automated personalisation, faster service resolution, or proactive outreach. The third is consistency: ensuring that the experience holds together across every channel a customer uses, from a first search to a post-purchase support request.

Most transformation programmes get the first layer right and struggle with the other two. Data collection is relatively straightforward. Doing something useful with it, at speed, across a coherent omnichannel experience, is where the real operational challenge sits. Understanding what omnichannel customer experience requires in practice is a useful starting point for any team trying to close that gap.

Why Personalisation Has Become the Core Battleground

When I was at lastminute.com running paid search campaigns in the early 2000s, personalisation was a competitive advantage because almost nobody was doing it well. We could generate six figures of revenue from a single well-targeted campaign because the bar was low and the signal was clear. That era is long gone. Personalisation is now table stakes, and the competitive advantage has shifted to the quality and subtlety of execution.

Customers today expect that a brand knows who they are, what they have bought before, and what they are likely to need next. They do not expect perfection, but they notice when they are treated like a stranger by a brand they have been loyal to for years. That disconnect shows up as being asked to re-enter information a company already holds, receiving irrelevant recommendations, or getting generic communications after a very specific purchase, and it is one of the fastest ways to erode trust.

Digital transformation enables personalisation at scale through better data integration, machine learning applied to behavioural signals, and the ability to serve different experiences to different segments in real time. But the technology only works if the underlying data is clean, the segments are meaningful, and the personalisation logic is actually tested against customer response rather than assumed to be working.

I have seen too many personalisation programmes that were technically impressive and commercially inert. The engine was running, but nobody had checked whether the outputs were actually improving conversion or satisfaction. Digital optimisation across the customer experience requires continuous testing, not a one-time configuration. That discipline is what separates the businesses genuinely benefiting from personalisation investment from those that have built expensive infrastructure and declared victory.
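As a minimal sketch of what "tested against customer response" can mean in practice, the snippet below compares conversion between a control group and a personalised group using a standard two-proportion z-test. The function name and the example figures are hypothetical, chosen only for illustration, and a real programme would also need to think about sample size, test duration, and running many tests at once.

```python
import math


def two_proportion_z_test(conv_control, n_control, conv_variant, n_variant):
    """Compare conversion rates between a control and a personalised variant.

    Returns (absolute lift, z statistic, two-sided p-value).
    """
    rate_control = conv_control / n_control
    rate_variant = conv_variant / n_variant

    # Pooled rate under the null hypothesis that both groups convert equally.
    pooled = (conv_control + conv_variant) / (n_control + n_variant)
    std_error = math.sqrt(pooled * (1 - pooled) * (1 / n_control + 1 / n_variant))

    z = (rate_variant - rate_control) / std_error
    # Two-sided p-value from the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return rate_variant - rate_control, z, p_value


# Hypothetical figures: 10,000 visitors per group.
lift, z, p_value = two_proportion_z_test(400, 10_000, 460, 10_000)
print(f"lift={lift:.4f}, z={z:.2f}, p={p_value:.4f}")
```

A small p-value suggests the difference is unlikely to be noise, but it says nothing about whether the lift is commercially meaningful. That judgment still has to be made against the cost of running the personalisation.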

How Digital Tools Are Changing the Speed of Service

Speed has always mattered in customer service. What has changed is the baseline expectation. Customers who can get an answer from a competitor in 30 seconds via live chat are not going to wait 48 hours for an email response from you. Digital transformation has compressed the acceptable response window dramatically, and businesses that have not invested in the infrastructure to meet that expectation are paying for it in satisfaction scores and churn.

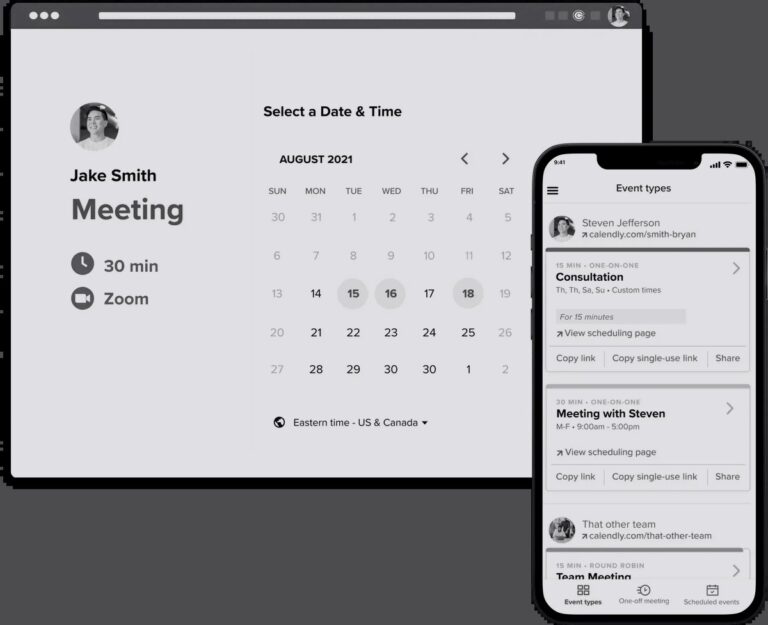

The tools that have had the most measurable impact on service speed are not the most glamorous ones: properly configured knowledge bases that surface the right answer quickly, chat systems that route queries to the right agent without customers having to repeat themselves, and video-based support that allows complex issues to be resolved in a single interaction rather than a chain of back-and-forth messages. Video support tools have shown particular promise in reducing resolution time for technical and product-related queries, where written communication creates more ambiguity than it resolves.

AI-assisted service is the current frontier, and it is producing genuinely mixed results. Where it works well is in handling high-volume, low-complexity queries at speed: order status, account information, basic troubleshooting. Where it tends to fail is in anything that requires nuance, empathy, or the kind of flexible problem-solving that a good human agent brings instinctively. The businesses getting the balance right are using AI to handle the routine so that human agents can focus on the interactions that actually require human judgment.

The Data Problem Nobody Wants to Talk About

Every digital transformation creates more data. That is not the same as creating more insight. I have worked with organisations that were drowning in customer data, running dozens of analytics tools across their stack, and still making major product and experience decisions based on gut feel because nobody trusted any single data source enough to act on it.

The data problem in CX is not usually a volume problem. It is a quality, integration, and interpretation problem. Customer data sitting in separate systems, not connected to each other, produces a fragmented picture of customer behaviour that makes it almost impossible to understand the full experience. A customer who complains on social media, calls the support line the same day, and then quietly churns three weeks later may appear in three separate datasets with no connection between them.
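To make the fragmentation concrete, here is a rough sketch of stitching records from three separate systems into one timeline per customer. It assumes every system already shares a common `customer_id`, which in practice is often the hardest part. The record layout, field names, and sample data are all hypothetical.

```python
from collections import defaultdict
from datetime import date

# Hypothetical exports from three systems that do not talk to each other.
social_mentions = [
    {"customer_id": "c-101", "date": date(2024, 3, 1), "event": "social_complaint"},
]
support_calls = [
    {"customer_id": "c-101", "date": date(2024, 3, 1), "event": "support_call"},
]
billing_events = [
    {"customer_id": "c-101", "date": date(2024, 3, 22), "event": "churned"},
]


def build_timelines(*sources):
    """Merge records from every source into one date-ordered list per customer."""
    timelines = defaultdict(list)
    for source in sources:
        for record in source:
            timelines[record["customer_id"]].append((record["date"], record["event"]))
    for events in timelines.values():
        # Stable sort: same-day events keep the order the sources were passed in.
        events.sort(key=lambda item: item[0])
    return dict(timelines)


timelines = build_timelines(social_mentions, support_calls, billing_events)
for day, event in timelines["c-101"]:
    print(day.isoformat(), event)
```

Seen as one timeline, the complaint, the call, and the churn read as a single story rather than three unrelated rows in three dashboards.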

Measuring customer experience effectively requires agreeing on what matters before you start collecting. The metrics that actually reflect CX quality tend to be a combination of transactional signals, like resolution rate and repeat contact, and relationship signals, like NPS and customer effort score. Neither category alone gives you the full picture. And both require honest interpretation rather than the kind of selective reading that makes dashboards look good without improving anything.
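For readers who want the arithmetic behind two of those signals, the sketch below computes NPS in the standard way (percentage of promoters scoring 9 or 10, minus percentage of detractors scoring 0 to 6) and a simple repeat contact rate. The repeat contact definition used here, the share of cases needing more than one contact, is an assumption for illustration. Teams define it differently, and the sample data is made up.

```python
def net_promoter_score(scores):
    """NPS from 0-10 survey answers: % promoters (9-10) minus % detractors (0-6)."""
    if not scores:
        raise ValueError("at least one survey response is required")
    promoters = sum(1 for score in scores if score >= 9)
    detractors = sum(1 for score in scores if score <= 6)
    return 100 * (promoters - detractors) / len(scores)


def repeat_contact_rate(contacts_per_case):
    """Share of cases that needed more than one contact (assumed definition)."""
    if not contacts_per_case:
        raise ValueError("at least one case is required")
    repeats = sum(1 for contacts in contacts_per_case if contacts > 1)
    return repeats / len(contacts_per_case)


# Hypothetical survey answers and support cases.
survey_scores = [10, 9, 8, 7, 6, 3, 10, 9, 5, 10]
contacts = [1, 1, 2, 1, 3]

print(net_promoter_score(survey_scores))  # 5 promoters, 3 detractors out of 10
print(repeat_contact_rate(contacts))      # 2 of 5 cases needed a follow-up
```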

Early in my agency career, I asked the managing director for budget to rebuild our website. The answer was no. So I taught myself to code and built it anyway. The lesson I took from that was not about resourcefulness, though it was partly that. It was about the difference between having data and doing something with it. I had the information I needed: the site was holding us back commercially. The gap was the willingness to act on it. That same gap exists in most CX data programmes today.

Omnichannel Consistency: Where Most Transformations Fall Short

Customers do not experience your channels in isolation. They move between them, sometimes within a single interaction. They might research on mobile, purchase on desktop, and contact support through a messaging app. If those experiences are not connected, the friction compounds at every transition point.

The challenge with omnichannel consistency is that it requires coordination across teams and systems that were often built independently, with different owners, different metrics, and different definitions of success. The marketing team owns the acquisition experience. The product team owns the in-app experience. The service team owns post-purchase support. Each can be optimised in isolation and still produce a disjointed experience for the customer moving between them.

Digital transformation creates the technical possibility of a connected experience. It does not automatically create the organisational alignment required to deliver one. That alignment (shared data, shared metrics, and shared accountability for the end-to-end experience) is the operational challenge that most transformation programmes underestimate. Forrester’s work on CX maturity has consistently pointed to this integration gap as one of the primary reasons transformation investments fail to produce the expected experience improvements.

How to Measure Whether Digital Transformation Is Actually Improving CX

This is where a lot of organisations get into trouble. They measure the inputs (the technology deployed, the features launched, the channels activated) rather than the outputs. A new self-service portal is not a CX improvement unless customers are actually using it, finding it useful, and contacting support less as a result.

The metrics that matter most are the ones that reflect actual customer behaviour and sentiment, not proxy measures of digital activity. Measuring customer satisfaction rigorously means combining quantitative signals, like CSAT scores, first contact resolution, and churn rate, with qualitative signals, like verbatim feedback and direct customer conversations. Neither alone is sufficient.

I spent time judging the Effie Awards, which evaluate marketing effectiveness rather than creative quality. The discipline required to demonstrate effectiveness in that context, showing a clear line between activity and measurable outcome, is exactly what CX measurement needs. Too many transformation programmes produce outputs without demonstrating that those outputs changed anything for customers. The standard should be: can you show, with evidence, that the experience improved? Not: can you show that the technology was deployed?

One useful framework is to track three things before and after any significant digital investment: how easy customers find it to complete the task they came to do, how quickly issues get resolved when something goes wrong, and whether overall satisfaction with the brand is trending in the right direction. Those three signals, customer effort, resolution speed, and relationship health, give you a reasonably honest read on whether transformation is producing experience improvement or just operational change.
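One way to keep that before-and-after comparison honest is to write it down as a fixed structure before the investment goes live. The sketch below is illustrative only: the field names, the choice of an effort score where lower means easier, median resolution hours, and NPS as the relationship measure are all assumptions rather than a prescribed standard.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CxSnapshot:
    effort_score: float             # assumed scale where lower means easier
    median_resolution_hours: float  # lower is better
    nps: float                      # higher is better


# For each signal, record whether a decrease counts as an improvement.
LOWER_IS_BETTER = {
    "effort_score": True,
    "median_resolution_hours": True,
    "nps": False,
}


def compare(before, after):
    """Return {signal: (change, verdict)} for the three tracked signals."""
    verdicts = {}
    for name, lower_is_better in LOWER_IS_BETTER.items():
        change = getattr(after, name) - getattr(before, name)
        if change == 0:
            verdict = "unchanged"
        elif (change < 0) == lower_is_better:
            verdict = "improved"
        else:
            verdict = "worsened"
        verdicts[name] = (change, verdict)
    return verdicts


# Hypothetical readings taken before and after a self-service portal launch.
before = CxSnapshot(effort_score=4.2, median_resolution_hours=36.0, nps=18.0)
after = CxSnapshot(effort_score=3.5, median_resolution_hours=20.0, nps=18.0)

for signal, (change, verdict) in compare(before, after).items():
    print(f"{signal}: {change:+.1f} ({verdict})")
```

In this made-up example the operational signals improve while relationship health does not move, which is exactly the kind of result that should prompt a closer look before anyone declares the programme a success.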

The Businesses Getting This Right

The pattern I see in businesses that are genuinely improving customer experience through digital transformation is consistent across sectors. They start with a specific customer problem rather than a technology solution. They measure outcomes rather than outputs. They treat CX as a cross-functional responsibility rather than something owned by a single team. And they iterate based on evidence rather than assumption.

That sounds straightforward. In practice, it requires a level of organisational discipline that most businesses find genuinely difficult to maintain, especially when there is pressure to show transformation progress quickly. The temptation to declare success based on technology deployment rather than experience improvement is real, and it is one of the main reasons transformation programmes produce impressive internal metrics and disappointing customer outcomes.

Understanding how the customer experience maps to digital touchpoints is also increasingly important. Mapping the customer experience with modern tools has become more sophisticated, giving teams a clearer picture of where digital investment will have the most impact on experience. The businesses doing this well are not just mapping the experience as it currently exists. They are identifying where the experience breaks down and designing digital solutions specifically to fix those breaks.

The broader point is that digital transformation is not a destination. It is a continuous process of improving how a business understands and responds to its customers. The companies that treat it as a project with a defined end date tend to find that whatever they built is already becoming obsolete by the time it launches. The ones building genuine competitive advantage through CX treat it as an ongoing capability, something they are always getting better at, rather than something they have completed.

There is more on the strategic and cultural dimensions of building that kind of sustained CX capability across the Customer Experience section of The Marketing Juice, covering everything from measurement frameworks to what effective CX leadership actually looks like in practice.

About the Author

Keith Lacy is a marketing strategist and former agency CEO with 20+ years of experience across agency leadership, performance marketing, and commercial strategy. He writes The Marketing Juice to cut through the noise and share what works.