Marketing Campaign ROI: How to Calculate It From User Data

Calculating the ROI of a marketing campaign from user data means comparing the revenue (or measurable value) generated by a campaign against its total cost, using behavioural and conversion data to trace the path from exposure to outcome. The formula is straightforward: revenue attributed to the campaign minus campaign cost, divided by campaign cost, expressed as a percentage. What makes it complicated is not the maths. It is deciding which data to trust, what to attribute, and where the boundaries of a campaign actually sit.

Most marketers get the formula right and the inputs wrong. That is where the real work is.

Key Takeaways

- The ROI formula is simple. The hard part is deciding which user data to include and which attribution model to apply before you run the numbers.

- Last-click attribution consistently overstates the value of lower-funnel channels and understates the contribution of awareness and mid-funnel activity.

- Incrementality testing, not attribution modelling, is the most honest way to understand whether a campaign actually drove a result or just showed up near one.

- Customer lifetime value should sit inside your ROI calculation wherever possible. A campaign that acquires low-LTV customers at a positive short-term ROI can still be a bad investment.

- The goal is honest approximation, not false precision. A directionally correct ROI number, consistently measured, is more useful than a precise number built on shaky assumptions.

In This Article

- Why Most Campaign ROI Numbers Are Wrong Before You Start

- What User Data Do You Actually Need?

- The ROI Formula and How to Apply It Properly

- Attribution Models and Why They Change Your Answer

- Incorporating Customer Lifetime Value Into Campaign ROI

- Incrementality Testing: The Most Honest Measurement You Can Do

- Building a Campaign ROI Framework That Is Actually Usable

- The Honest Limits of Campaign ROI as a Metric

Why Most Campaign ROI Numbers Are Wrong Before You Start

Early in my career I ran performance marketing for a mid-sized agency and I was very good at reporting impressive ROI numbers. The problem, which I did not fully appreciate at the time, was that a meaningful chunk of those numbers were not real. We were capturing intent that already existed. Someone was going to buy anyway, they searched, they clicked our paid ad, we took the credit. The ROI looked extraordinary. The incremental value was considerably more modest.

This is not a confession unique to me. It is structurally baked into how most digital attribution works. When you measure campaign ROI using last-click or even linear attribution, you are not measuring what the campaign caused. You are measuring what happened while the campaign was running, and then deciding how much of it to assign to each touchpoint. Those are very different things.

Before you open a spreadsheet, you need to decide what question you are actually trying to answer. Are you asking whether this campaign generated more revenue than it cost? Or are you asking whether this campaign generated revenue that would not have happened without it? The first question is easier to answer. The second question is the one that matters.

If you are serious about go-to-market effectiveness and growth strategy, the broader context for how campaigns sit inside a commercial plan matters as much as the measurement mechanics. The Go-To-Market and Growth Strategy hub covers that territory in depth.

What User Data Do You Actually Need?

The phrase “user data” covers a wide range of inputs. For ROI calculation purposes, you are primarily working with four categories.

Behavioural data tells you what users did: pages visited, time on site, content consumed, product pages viewed, add-to-cart events, form completions. This is the foundation of funnel analysis and it tells you where users dropped off or progressed.

Conversion data tells you what outcomes occurred: purchases, sign-ups, qualified leads, trial activations, subscription starts. This is where revenue gets attached to user actions. The quality of this data depends entirely on how cleanly your tracking is set up. Missed conversions and duplicate conversions are both common and both distort your ROI calculation in opposite directions.
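Duplicate conversions are the easier of the two problems to handle in code. Here is a minimal sketch, assuming each conversion event carries a unique order identifier. The field names `order_id` and `revenue` are hypothetical and not taken from any particular analytics platform.

```python
def dedupe_conversions(events):
    """Keep the first event seen for each order_id and drop later repeats."""
    seen = set()
    unique = []
    for event in events:
        if event["order_id"] in seen:
            continue  # same order fired twice, e.g. a reloaded confirmation page
        seen.add(event["order_id"])
        unique.append(event)
    return unique


# Illustrative events only.
events = [
    {"order_id": "A100", "revenue": 50.0},
    {"order_id": "A101", "revenue": 30.0},
    {"order_id": "A100", "revenue": 50.0},  # duplicate fire of the same order
]

raw_revenue = sum(e["revenue"] for e in events)
clean_revenue = sum(e["revenue"] for e in dedupe_conversions(events))
print(raw_revenue, clean_revenue)
```

Missed conversions cannot be repaired this way. They usually have to be found by reconciling tracked conversions against an independent record such as the order system.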

Attribution data tells you which touchpoints preceded a conversion. This is where most of the interpretive complexity lives. A user might have seen a display ad, clicked a retargeting ad two days later, and then converted via a branded search. Which campaign gets the credit? The answer depends on your attribution model, and different models will give you materially different ROI figures for the same campaign.

Customer value data tells you what a converted user is actually worth. This is the most underused input in campaign ROI calculations. A campaign that drives 500 first-time buyers looks different depending on whether those buyers have an average lifetime value of £80 or £800. If you are calculating ROI on a single transaction without factoring in downstream value, you are working with an incomplete picture.

The ROI Formula and How to Apply It Properly

The standard formula is: ROI = (Revenue Attributed to Campaign − Total Campaign Cost) / Total Campaign Cost × 100.

Total campaign cost should include everything: media spend, agency or platform fees, creative production, any technology costs associated with running the campaign, and a reasonable allocation of internal time if your team spent significant hours on it. I have seen campaigns report positive ROI that would have been negative if the creative retainer and the hours spent in briefing sessions had been included. Cost discipline in the inputs matters.
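To make the cost point concrete, here is a small sketch of the formula applied to the same campaign twice: once with media spend only, and once with every cost line included. The cost categories mirror the ones listed above. The figures are invented for illustration.

```python
def campaign_roi(attributed_revenue, costs):
    """Return ROI as a percentage: (revenue - total cost) / total cost * 100."""
    total_cost = sum(costs.values())
    if total_cost <= 0:
        raise ValueError("total campaign cost must be positive")
    return (attributed_revenue - total_cost) / total_cost * 100


# Illustrative figures only, not taken from a real campaign.
costs = {
    "media_spend": 40_000,
    "agency_fees": 6_000,
    "creative_production": 8_000,
    "technology": 2_000,
    "internal_time": 4_000,
}
attributed_revenue = 55_000

roi_media_only = campaign_roi(attributed_revenue, {"media_spend": costs["media_spend"]})
roi_full_cost = campaign_roi(attributed_revenue, costs)

print(f"Media-only ROI: {roi_media_only:.1f}%")
print(f"Full-cost ROI:  {roi_full_cost:.1f}%")
```

With these assumed numbers the campaign looks comfortably positive on media spend alone and turns negative once the remaining costs are counted.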

Revenue attributed to the campaign is the variable that requires the most judgment. There are several ways to approach it.

Direct attribution works where you have clean tracking: a discount code used exclusively in one campaign, a landing page that receives traffic only from that campaign, or a sign-up flow with a clear UTM parameter trail. In these cases, you can trace revenue to the campaign with reasonable confidence. This is the cleanest scenario and unfortunately not the most common one.
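Where a UTM trail exists, summing directly attributed revenue is mostly a filtering exercise. The sketch below assumes each order record stores the landing URL the visitor arrived on. The `landing_url` and `revenue` fields, the campaign names, and the example.com URLs are all hypothetical.

```python
from urllib.parse import urlparse, parse_qs


def campaign_from_url(landing_url):
    """Return the utm_campaign value from a landing URL, or None if absent."""
    query = parse_qs(urlparse(landing_url).query)
    values = query.get("utm_campaign")
    return values[0] if values else None


def directly_attributed_revenue(orders, campaign_name):
    """Sum revenue for orders whose landing URL carries the campaign's UTM tag."""
    return sum(
        order["revenue"]
        for order in orders
        if campaign_from_url(order["landing_url"]) == campaign_name
    )


# Illustrative orders only.
orders = [
    {"landing_url": "https://example.com/offer?utm_source=email&utm_campaign=spring_sale",
     "revenue": 120.0},
    {"landing_url": "https://example.com/offer?utm_campaign=summer_sale",
     "revenue": 80.0},
    {"landing_url": "https://example.com/", "revenue": 200.0},  # untagged visit
]

spring_revenue = directly_attributed_revenue(orders, "spring_sale")
print(spring_revenue)
```

The untagged order is simply left out, which is why direct attribution tends to undercount when tagging is inconsistent.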

Model-based attribution distributes conversion credit across touchpoints using a rule or algorithm. Last-click, first-click, linear, time-decay, and data-driven models all produce different numbers. If you are using a model, the most important thing is to apply it consistently across campaigns so comparisons are valid. Switching models mid-year, or comparing campaigns measured under different models, produces noise rather than insight.

Incrementality-adjusted attribution attempts to isolate the causal contribution of a campaign by comparing outcomes in exposed versus unexposed groups. This is methodologically more demanding but considerably more honest. If you ran a campaign to a test audience and held back a control group, the difference in conversion rate between the two groups represents the incremental lift the campaign actually drove. That lift, multiplied by the size of the exposed audience and the average order value, gives you a more defensible revenue figure for the numerator of your ROI calculation.
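The lift calculation described above can be sketched in a few lines. This assumes the exposed and holdout groups were split randomly and that average order value is the same in both groups. The audience sizes and conversion counts are invented.

```python
def incremental_revenue(exposed_users, exposed_conversions,
                        holdout_users, holdout_conversions,
                        average_order_value):
    """Estimate revenue caused by the campaign from a holdout comparison."""
    exposed_rate = exposed_conversions / exposed_users
    holdout_rate = holdout_conversions / holdout_users
    lift = exposed_rate - holdout_rate            # absolute lift in conversion rate
    incremental_conversions = lift * exposed_users
    return incremental_conversions * average_order_value


# Illustrative numbers only: 2.5% conversion when exposed, 2.0% in the holdout.
aov = 60.0
incremental = incremental_revenue(100_000, 2_500, 20_000, 400, aov)
naive = 2_500 * aov  # what you would report if every exposed conversion got credit

print(f"Naively attributed revenue: {naive:,.0f}")
print(f"Incremental revenue:        {incremental:,.0f}")
```

In this example most exposed-group conversions would have happened anyway, so the incremental figure is a fraction of the naive one.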

Tools like Crazy Egg’s growth analysis resources and platforms focused on behavioural analytics can help you instrument the user experience well enough to make these calculations more reliable. The instrumentation quality upstream determines the ROI calculation quality downstream.

Attribution Models and Why They Change Your Answer

I spent several years judging the Effie Awards, which are specifically designed to reward marketing effectiveness rather than creative craft. One of the consistent patterns I noticed was how differently teams understood their own results depending on how they had set up attribution. Two campaigns with almost identical commercial outcomes would report wildly different ROI figures because one team was running last-click and the other was running data-driven attribution across a longer window.

Last-click attribution assigns 100% of the conversion credit to the final touchpoint before purchase. It is simple, widely used, and systematically biased toward lower-funnel channels. Paid search and retargeting tend to sit at the bottom of the funnel, close to conversion, and they collect disproportionate credit under last-click models. Awareness campaigns, social content, and email nurture sequences that genuinely moved a prospect through the funnel get very little credit. This creates a measurement environment where upper-funnel investment looks expensive and lower-funnel investment looks efficient, regardless of what is actually driving growth.

First-click attribution has the opposite bias. It rewards the channel that first introduced the customer to the brand, which tends to inflate the apparent ROI of prospecting and awareness campaigns.

Linear attribution distributes credit equally across all touchpoints. Time-decay models give more credit to touchpoints closer to conversion. Data-driven models, available in platforms like Google Analytics 4, use machine learning to assign fractional credit based on observed patterns in your own conversion data. Data-driven attribution is generally more accurate than rule-based models, but it needs sufficient conversion volume to produce reliable outputs. As a rough rule of thumb, that means several hundred conversions per month as a minimum.

The practical implication: when you report campaign ROI, state clearly which attribution model produced the number. A figure without that context is not comparable to any other figure, and it cannot be reliably used to make budget allocation decisions.
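To see how much the model changes the answer, the sketch below splits one conversion across the display, retargeting, and branded search journey described earlier, under four rule-based models. The day offsets and the 7-day half-life for time decay are assumptions chosen for illustration. Data-driven attribution is not shown because it depends on a platform's own fitted model.

```python
def attribute(path, revenue, model, half_life_days=7.0):
    """Split revenue across touchpoints under a rule-based attribution model.

    path: list of (channel, days_before_conversion), ordered first to last touch.
    """
    if not path:
        raise ValueError("path must contain at least one touchpoint")
    if model == "last_click":
        weights = [0.0] * (len(path) - 1) + [1.0]
    elif model == "first_click":
        weights = [1.0] + [0.0] * (len(path) - 1)
    elif model == "linear":
        weights = [1.0] * len(path)
    elif model == "time_decay":
        # Credit halves for every half_life_days between touch and conversion.
        weights = [0.5 ** (days / half_life_days) for _, days in path]
    else:
        raise ValueError(f"unknown model: {model}")

    total = sum(weights)
    credit = {}
    for (channel, _), weight in zip(path, weights):
        credit[channel] = credit.get(channel, 0.0) + revenue * weight / total
    return credit


# Assumed timings: display 7 days out, retargeting 5 days out, search on the day.
path = [("display", 7), ("retargeting", 5), ("branded_search", 0)]
models = ("last_click", "first_click", "linear", "time_decay")
results = {model: attribute(path, 100.0, model) for model in models}

for model, credit in results.items():
    print(model, {channel: round(value, 1) for channel, value in credit.items()})
```

The same 100 of revenue lands on entirely different channels depending on the rule, which is why a reported ROI figure needs its model stated alongside it.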

Incorporating Customer Lifetime Value Into Campaign ROI

When I was running an agency that had grown from around 20 people to close to 100 over a few years, one of the things that changed my thinking about campaign performance was watching clients make acquisition decisions based purely on first-transaction ROI. They would optimise campaigns toward the cheapest converted customer, hit their cost-per-acquisition targets, and then wonder why revenue growth was stalling. The campaigns were acquiring customers efficiently. They were acquiring the wrong customers efficiently.

Customer lifetime value (CLV or LTV) is the total revenue you expect to generate from a customer over the full duration of their relationship with your business, minus the cost to serve them. Incorporating LTV into campaign ROI changes the calculation materially.

The adjusted formula becomes: ROI = (LTV of Customers Acquired by Campaign − Total Campaign Cost) / Total Campaign Cost × 100.

This requires you to segment your converted customers by acquisition source and track their subsequent behaviour. Not every business has the data infrastructure to do this cleanly, but even a rough LTV estimate by customer cohort is more useful than ignoring downstream value entirely. If you know that customers acquired through one channel have a 12-month retention rate of 60% and customers acquired through another have a rate of 30%, that information should influence how you evaluate the ROI of each campaign, not just the first-purchase economics.
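Here is a rough sketch of how the 60% versus 30% retention example changes the picture. It uses a deliberately crude two-period LTV: the first order, plus an assumed repeat value earned only by customers still active at 12 months. The customer counts, order values, and costs are invented, and all values are assumed to be net of cost to serve.

```python
def ltv_adjusted_roi(customers, first_order_value, retention_rate,
                     repeat_value, campaign_cost):
    """ROI using a crude two-period LTV: first order plus retained repeat value."""
    ltv_per_customer = first_order_value + retention_rate * repeat_value
    total_ltv = customers * ltv_per_customer
    return (total_ltv - campaign_cost) / campaign_cost * 100


# Two channels with identical first-purchase economics (illustrative figures).
customers, first_order, repeat_value, cost = 500, 50.0, 100.0, 20_000.0

first_purchase_roi = (customers * first_order - cost) / cost * 100
roi_channel_a = ltv_adjusted_roi(customers, first_order, 0.60, repeat_value, cost)
roi_channel_b = ltv_adjusted_roi(customers, first_order, 0.30, repeat_value, cost)

print(f"First-purchase ROI (both channels): {first_purchase_roi:.0f}%")
print(f"LTV-adjusted ROI, 60% retention:    {roi_channel_a:.0f}%")
print(f"LTV-adjusted ROI, 30% retention:    {roi_channel_b:.0f}%")
```

On first-purchase numbers the two channels are indistinguishable. Once retention is included, they are clearly not the same investment.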

BCG’s work on understanding customer financial behaviour and evolving needs touches on this same principle in a financial services context: the value of a customer relationship is not visible in a single transaction, and marketing decisions made on short-term conversion data alone tend to underserve long-term growth.

Incrementality Testing: The Most Honest Measurement You Can Do

If there is one measurement practice I would push every marketing team toward, it is incrementality testing. Not because it is easy (it is not), but because it is the only method that gets close to answering the question that actually matters: did this campaign cause something to happen that would not have happened otherwise?

The basic structure of an incrementality test involves splitting your target audience into two groups. One group is exposed to the campaign. The other group, the holdout or control group, is not. You then compare conversion rates, revenue, or whatever outcome you are measuring between the two groups over the campaign period. The difference between them, adjusted for any baseline differences in the groups, represents the incremental impact of the campaign.
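If you are running the split yourself rather than through a platform, one common way to do it is to hash each user ID into a bucket. That makes the assignment effectively random across users but stable for any one user. This is a sketch under assumptions: the 10% holdout share, the salt string, and the user ID format are all hypothetical.

```python
import hashlib


def assign_group(user_id, holdout_share=0.1, salt="spring-campaign"):
    """Deterministically assign a user to 'holdout' or 'exposed'."""
    digest = hashlib.sha256(f"{salt}:{user_id}".encode("utf-8")).hexdigest()
    bucket = int(digest[:8], 16) / 0x100000000  # map first 32 bits to [0, 1)
    return "holdout" if bucket < holdout_share else "exposed"


# Illustrative audience of 10,000 synthetic user IDs.
audience = [f"user-{n}" for n in range(10_000)]
groups = {user_id: assign_group(user_id) for user_id in audience}
holdout_fraction = sum(g == "holdout" for g in groups.values()) / len(audience)

print(f"Holdout fraction: {holdout_fraction:.3f}")
```

Changing the salt for each test reshuffles the assignment, so the same users do not end up in the holdout for every campaign.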

Major advertising platforms including Meta and Google offer built-in conversion lift studies that operate on this principle. They are not perfect, but they are considerably more informative than attribution models alone, particularly for upper-funnel campaigns where the path to conversion is long and multi-touch.

The practical challenge is that holdout testing requires you to deliberately withhold your campaign from a portion of your audience, which creates short-term opportunity cost. For most businesses running at scale, that cost is worth paying periodically to validate that your attribution model is not simply flattering your existing spend allocation. I have seen businesses where incrementality testing revealed that a significant portion of their attributed conversions were happening in the holdout group too, meaning the campaign was capturing intent that existed independently of the marketing activity. That is a commercially important finding, and you will not find it by looking at attribution data alone.

Resources like Semrush’s overview of growth tools highlight the range of analytics and testing platforms available to support this kind of measurement. The tooling has improved considerably. The willingness to use it honestly is the limiting factor in most organisations.

Building a Campaign ROI Framework That Is Actually Usable

Measurement frameworks fail in practice when they are too complex to maintain or too disconnected from how decisions actually get made. I have worked with businesses that had elaborate attribution setups they trusted completely and others that were making nine-figure media decisions on gut instinct and a few KPIs that nobody had challenged in years. Neither extreme serves the business well.

A usable ROI framework for campaign measurement has four components.

A defined measurement period. Decide in advance how long after campaign end you will measure attributed conversions. For short purchase cycles, 7 to 14 days post-exposure may be sufficient. For considered purchases or B2B sales cycles, you may need 30, 60, or 90 days. Changing the window after the fact to improve the numbers is a form of measurement theatre that produces nothing useful.

A consistent attribution model. Pick one and apply it uniformly. Document which model you are using and why. If you change models, restate historical data under the new model before making comparisons.

A cost definition that includes everything. Media spend is the floor, not the ceiling. Include production, technology, agency fees, and a reasonable internal time allocation. If you are not including all costs, you are not calculating ROI, you are calculating something more flattering that does not have a useful name.

A benchmark for comparison. An ROI figure in isolation tells you almost nothing. You need a baseline: what would have happened if you had done nothing (your organic conversion rate), what is the historical ROI of similar campaigns, and what is the cost of capital or the opportunity cost of deploying budget elsewhere? ROI only becomes actionable when it is compared to an alternative.
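One way to keep these four components honest is to write them down as a fixed record before launch. The sketch below is one possible shape for that record, not a standard. The class name, field names, and example values are all hypothetical. The record is frozen so the measurement window cannot be quietly changed after the results come in.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass(frozen=True)
class MeasurementPlan:
    campaign_start: date
    campaign_end: date
    window_days: int           # post-campaign window, agreed before launch
    attribution_model: str     # e.g. "last_click", "linear", "data_driven"
    cost_categories: tuple     # every category that counts toward total cost
    benchmark_roi_pct: float   # e.g. historical ROI of comparable campaigns

    def in_window(self, conversion_date):
        """True if a conversion falls inside the pre-agreed measurement period."""
        cutoff = self.campaign_end + timedelta(days=self.window_days)
        return self.campaign_start <= conversion_date <= cutoff

    def beats_benchmark(self, roi_pct):
        """True if the measured ROI exceeds the chosen benchmark."""
        return roi_pct > self.benchmark_roi_pct


# Illustrative plan only.
plan = MeasurementPlan(
    campaign_start=date(2024, 3, 1),
    campaign_end=date(2024, 3, 31),
    window_days=14,
    attribution_model="linear",
    cost_categories=("media", "production", "technology", "agency", "internal_time"),
    benchmark_roi_pct=35.0,
)

print(plan.in_window(date(2024, 4, 10)), plan.beats_benchmark(28.0))
```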

Vidyard’s research into pipeline and revenue potential for GTM teams points to a consistent gap between the revenue data teams think they have and the revenue data they actually have. That gap is not a technology problem. It is a measurement discipline problem, and it starts with how campaigns are instrumented and evaluated from day one.

The broader question of how campaign measurement connects to growth strategy and commercial planning is something I cover across the Go-To-Market and Growth Strategy section of The Marketing Juice. If you are building out a measurement approach for a new market entry or a product launch, the strategic context matters as much as the technical mechanics.

The Honest Limits of Campaign ROI as a Metric

There is a version of marketing that treats ROI as the only number that matters and optimises relentlessly toward it. I understand the appeal. It is clean, it is defensible in a boardroom, and it creates the impression of rigour. The problem is that campaigns optimised purely for short-term measurable ROI tend to underinvest in the things that drive long-term growth: brand building, new audience development, and the kind of sustained visibility that creates demand rather than just capturing it.

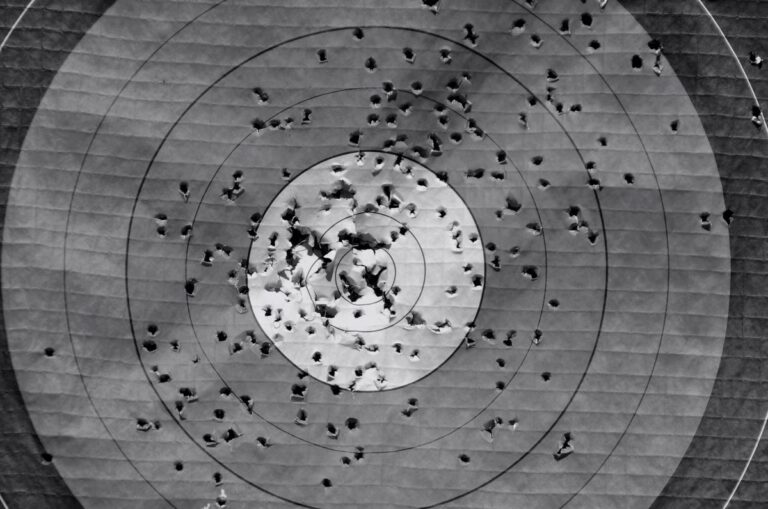

Think about the mechanics of how customers actually come to buy something. Someone encounters a brand multiple times across different contexts before they are ready to purchase. When they are ready, they search, they click, they convert. The last click gets the credit. But the work that made them ready to buy, the awareness campaign, the content they read six weeks ago, the recommendation from a peer, that work is largely invisible in a standard ROI calculation. Measuring only what is easy to measure creates a systematic bias toward channels that operate close to conversion and away from channels that build the conditions for conversion.

BCG’s research on scaling agile organisations makes a point that applies directly here: measurement systems shape behaviour, and behaviour shapes outcomes. If your ROI framework only rewards short-term, attributable results, your team will optimise for short-term, attributable results. Whether that is the right thing to optimise for is a strategic question, not a measurement question.

ROI is a useful input to decision-making. It is not a substitute for judgment about what kind of growth a business is trying to achieve, over what time horizon, and through what mix of demand capture and demand creation. The number matters. The context around the number matters more.

About the Author

Keith Lacy is a marketing strategist and former agency CEO with 20+ years of experience across agency leadership, performance marketing, and commercial strategy. He writes The Marketing Juice to cut through the noise and share what works.