AI Does the Work. Humans Do the Connecting.

Marketers balancing AI and human connection in 2025 are facing a tension that is less about technology and more about trust. AI handles volume, speed, and personalisation at scale. What it cannot do is make someone feel genuinely understood. The marketers getting this right are not choosing between the two. They are building systems where AI does the heavy lifting and humans show up where it actually matters.

That sounds straightforward. In practice, most teams are still working out where the line is.

Key Takeaways

- AI and human connection are not in competition. The strongest marketing strategies in 2025 treat them as complementary, not interchangeable.

- Personalisation at scale is only valuable when it reflects something real about the customer. Generic AI output dressed up as personal is worse than no personalisation at all.

- The moments that build brand loyalty (handling complaints, celebrating milestones, responding in a crisis) still require human judgment and human tone.

- Most teams are under-investing in the brief, not the tool. Better AI output starts with clearer human thinking about audience, context, and intent.

- The marketers who will win over the next two years are not the ones who automate the most. They are the ones who automate the right things and protect the human moments that drive retention.

In This Article

- Why This Tension Exists in the First Place

- Where AI Is Genuinely Earning Its Place

- Where Human Connection Still Cannot Be Automated

- The Personalisation Trap Most Teams Are Falling Into

- How the Best Teams Are Structuring the Division of Labour

- The Brand Voice Problem Nobody Is Talking About Enough

- What This Means for Marketing Teams in Practice

Why This Tension Exists in the First Place

I have been in agency environments long enough to remember when personalisation meant adding a first name to an email subject line and calling it a win. We thought we were being clever. Customers were not fooled then, and they are less fooled now.

The difference in 2025 is that AI can generate genuinely tailored content, at scale, faster than any team could manage manually. That is a real capability shift. But it has also created a new problem: the volume of personalised-looking content has increased dramatically, which means the bar for what feels genuinely personal has risen in parallel. Customers have developed sharper instincts for what is real and what is automated. When AI gets it wrong, the gap is more visible than it used to be.

This is the core tension. AI makes personalisation cheaper and faster. But cheaper and faster does not automatically mean more human. And in a market where brand trust is one of the few remaining differentiators, the difference between content that feels considered and content that feels generated matters more than most marketing dashboards will tell you.

If you want to understand how AI is reshaping the broader marketing landscape, the AI Marketing hub at The Marketing Juice covers the full picture, from strategy to tooling to commercial impact.

Where AI Is Genuinely Earning Its Place

There are areas where AI has moved from experimental to essential, and the evidence is in the output, not the vendor decks.

Content production is the obvious one. Teams that used to spend three days producing a content calendar are now doing it in an afternoon. That is not hyperbole. The data from Semrush on generative AI adoption among marketers shows that content creation is consistently the top use case, and the productivity gains are real. The question is whether the content is any good, which brings us back to the brief.

I spent years watching junior account managers produce mediocre work not because they lacked effort but because they had been given a mediocre brief. The same dynamic applies to AI. If you feed a tool a vague instruction with no audience context, no tone guidance, and no clear objective, you will get vague output. The tool is not the problem. The thinking upstream of the tool is the problem.

Beyond content, AI is proving its value in audience segmentation, predictive modelling, and campaign optimisation. When I was managing large paid search budgets, the manual work of bid adjustments across hundreds of ad groups was relentless. AI handles that now with a precision that no human team could sustain at scale. Ahrefs has covered how AI is changing SEO workflows in ways that free up strategists to focus on the decisions that actually require judgment rather than the tasks that just require consistency.

These are the right use cases: high-volume, pattern-based, data-dependent tasks where speed and consistency matter more than nuance. The mistake is assuming that because AI works well there, it works equally well everywhere.

Where Human Connection Still Cannot Be Automated

There is a category of marketing moments where automation is not just ineffective. It is actively damaging.

Crisis response is the clearest example. When a brand faces a public problem, whether it is a product failure, a service breakdown, or a reputational issue, the speed of the response matters less than the quality of the judgment behind it. I have seen brands deploy automated responses in moments that required a human voice and watched the situation deteriorate because the tone was wrong. An AI can produce a grammatically correct apology. It cannot read the room.

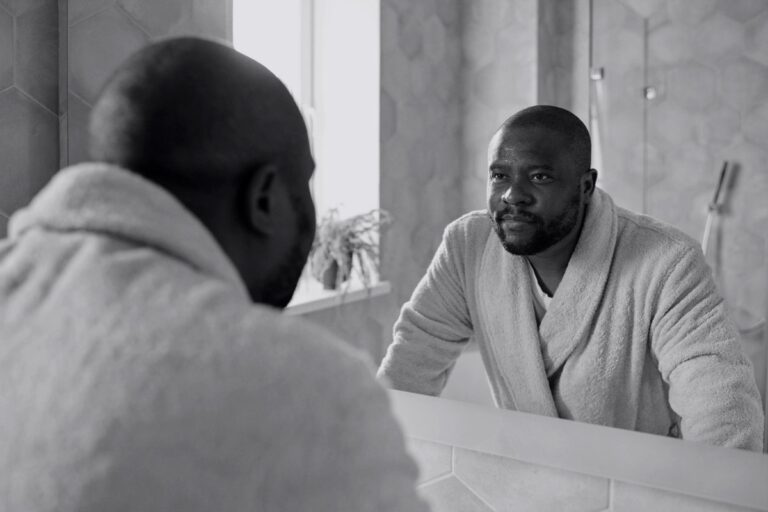

High-value customer relationships are another area where the human layer is non-negotiable. In B2B particularly, the moments that cement long-term client relationships are rarely the ones that happen in automated workflows. They happen in conversations, in the follow-up after a difficult meeting, in the account manager who remembers something specific about a client’s business and references it unprompted. That kind of attention cannot be templated.

Community management sits in a similar category. Brands that have built genuine communities, not just follower counts, have done so through consistent human presence. The responses that build loyalty are the ones that feel like a real person took a moment to engage, not a bot cycling through approval queues. Mailchimp’s guidance on humanising AI content makes the point well: the goal is not to hide that AI was involved but to ensure the output reflects genuine human intent.

There is also a category I think of as inflection moments: the points in a customer relationship where something significant has happened. A long-term customer who has just had a bad experience. A prospect who has been in consideration for six months and is close to a decision. These are not moments for automated nurture sequences. They are moments that require a human to pick up the phone or write a message that actually says something.

The Personalisation Trap Most Teams Are Falling Into

Personalisation has been a marketing priority for the better part of a decade. AI has made it cheaper to execute. But cheaper execution of a flawed strategy is still a flawed strategy.

The trap goes like this. A team adopts an AI content tool. They use it to produce personalised email sequences, dynamic website copy, and tailored social content. Output volume increases significantly. Open rates and click rates look reasonable. But conversion rates and retention metrics stay flat, or decline slightly. The team concludes the tool is underperforming and starts testing different tools. The real issue is that the personalisation was surface-level. It used behavioural signals to adjust content variables (first name, product category, last purchase), but it did not reflect anything genuinely meaningful about the customer’s situation.

Customers notice the difference between content that was tailored for them and content that was tailored for someone who looks like them on a spreadsheet. The former builds trust. The latter erodes it slowly, in ways that are hard to attribute to a single campaign or touchpoint.

The fix is not a better AI tool. It is better data strategy and better editorial judgment. What do you actually know about this customer that is worth acting on? What would make them feel seen rather than tracked? Those are human questions that need human answers before any tool enters the picture. HubSpot’s breakdown of AI copywriting tools is useful for understanding what these tools can and cannot do, but the strategic layer sits above the tooling entirely.

How the Best Teams Are Structuring the Division of Labour

The teams I have seen handle this well are not the ones with the most sophisticated AI stack. They are the ones with the clearest thinking about what each part of the system is for.

A useful framework is to think in three layers. The first is the production layer: content creation, scheduling, A/B testing, bid management, reporting. This is where AI earns its place. The volume is high, the patterns are learnable, and human time is genuinely better spent elsewhere.

The second is the strategy layer: audience insight, campaign architecture, channel mix, messaging hierarchy. AI can inform this layer with data and pattern recognition, but the decisions require human judgment. Someone needs to look at the data and ask whether it is telling the full story. I have spent enough time with analytics dashboards to know that they show you what happened, not why it happened. That distinction matters enormously when you are making resource allocation decisions.

The third is the relationship layer: key account management, community engagement, crisis response, brand voice stewardship. This layer should be almost entirely human. Not because AI cannot produce output here (it can), but because the cost of getting it wrong is high and the value of getting it right is significant.

When I was growing a team from around 20 people to close to 100, one of the things I learned was that the biggest productivity gains rarely came from adding more people to production tasks. They came from being clearer about which tasks required senior judgment and protecting the time for that. The same logic applies to AI integration. The goal is not to automate as much as possible. It is to automate the right things so that human attention goes where it creates the most value.

The Brand Voice Problem Nobody Is Talking About Enough

There is a quieter issue sitting underneath most of the AI-versus-human-connection debate, and it is brand voice.

Brand voice is not a style guide. It is a set of instincts about how a brand thinks, what it cares about, what it would and would not say, and how it responds when things are not going well. It takes years to build and can be undermined quickly by volume-produced content that is technically correct but tonally off.

AI tools trained on broad datasets will produce content that sounds like average marketing. If your brand voice is anything other than average, you have a problem. The solution is not to avoid AI but to invest seriously in the inputs: detailed voice documentation, curated examples, clear guidance on what the brand would not say. Moz’s analysis of AI content creation touches on this, noting that the quality ceiling for AI content is largely determined by the quality of the guidance it receives.

I have reviewed a lot of brand content over the years, both as an agency leader and as an Effie judge. The campaigns that stand out are not the ones with the biggest budgets or the most sophisticated targeting. They are the ones where the brand has a clear, consistent point of view that comes through in everything, from the headline to the small print. That kind of coherence is a human achievement. AI can help produce content at scale, but the coherence has to be designed and protected by people.

What This Means for Marketing Teams in Practice

The practical implication is not a technology decision. It is an organisational one.

Teams that are getting this right have done a few things consistently. They have audited their customer experience to identify the moments where human presence creates disproportionate value, and they have protected those moments from automation pressure. They have invested in prompt engineering and briefing quality, not as a technical skill but as a strategic one. They have built feedback loops between AI-produced content and customer response data, so they can identify when the tone is drifting or the personalisation is becoming hollow.

They have also been honest about what they do not know yet. The tools are evolving quickly, and the right balance in 2025 will not be the right balance in 2027. Semrush’s work on AI-assisted SEO is a good example of how the tooling is becoming more sophisticated, which means the strategic questions become more important, not less.

Early in my career, when I could not get budget for a proper website, I taught myself to code and built one. The point was not that the self-built version was better than a professionally built one. It was that solving the problem required understanding it from the inside. The same instinct applies here. Marketers who want to get the AI-human balance right need to work with these tools closely enough to understand what they are actually good at, not just what the vendor says they are good at.

There is more on how AI is reshaping marketing operations across the full stack in the AI Marketing section of The Marketing Juice, including coverage of where the real commercial opportunities are in 2025 and where the risks are being underestimated.

About the Author

Keith Lacy is a marketing strategist and former agency CEO with 20+ years of experience across agency leadership, performance marketing, and commercial strategy. He writes The Marketing Juice to cut through the noise and share what works.