A Unified Data Model Is the Foundation of Honest Marketing Analytics

A unified data model gives marketing teams a single, consistent framework for measuring performance across every channel, campaign, and platform. Instead of reconciling five different definitions of “conversion” from five different tools, everyone works from the same logic, the same metrics, and the same source of truth.

Without it, you don’t have a data problem. You have a trust problem. And no dashboard fixes that.

Key Takeaways

- A unified data model eliminates the version-of-truth problem that causes marketing teams to spend more time arguing about numbers than acting on them.

- Most analytics fragmentation isn’t a technology failure; it’s a governance failure. Tools proliferate, definitions drift, and no one owns the logic.

- Unifying your data model doesn’t require a data warehouse or a six-figure implementation. It starts with agreeing on definitions and enforcing them consistently.

- The goal isn’t perfect data. It’s directionally reliable data that everyone trusts enough to make decisions from.

- Channel-specific attribution models are a primary source of inflated performance claims. A unified model forces honest reconciliation across the whole funnel.

In This Article

- Why Marketing Teams End Up With Five Versions of the Same Number

- What a Unified Data Model Actually Means in Practice

- The Governance Problem Nobody Wants to Talk About

- How Attribution Models Break Without a Unified Foundation

- The Role of GA4 in a Unified Measurement Architecture

- Building Toward a Single Source of Truth Without a Six-Figure Project

- What Changes When You Have a Unified Model

- The Honest Limitation You Need to Accept

Why Marketing Teams End Up With Five Versions of the Same Number

Here is a scenario that plays out in almost every marketing organisation above a certain size. The paid media team reports 400 conversions from last month. The CRM shows 280 new customers. Finance has 210 closed deals. And GA4 is showing something else entirely depending on which attribution model you have selected.

Everyone is technically correct. And that is the problem.

Each tool is measuring from its own vantage point, using its own definitions, applying its own logic. Google Ads counts a conversion when someone clicks an ad and completes a tracked action within the lookback window. GA4 counts a conversion from the events it records (it now labels these "key events"), credited according to the attribution model configured in the property settings. The CRM counts a contact when someone fills in a form and the integration fires correctly. Finance counts revenue when the deal closes and the invoice is paid.

None of these is wrong. But none of them is the whole picture either. And when you present them side by side without a unifying framework, you get a room full of people defending their number rather than interrogating what actually happened.

I’ve sat in those rooms. At one agency I ran, we had a client who was convinced their email channel was underperforming because the email platform showed low click-to-conversion rates. But when we mapped email touchpoints across the full customer experience in a unified model, email was appearing in over 60% of paths to purchase, usually in the middle of the funnel. The channel wasn’t underperforming. It was being measured from the wrong angle.
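To make the "wrong angle" point concrete, here is a minimal sketch of the two ways of looking at the same channel. It assumes you already have converting paths as ordered lists of channel names; the sample paths, channel labels, and function name are all hypothetical, and this is not the model we built for that client.

```python
from typing import Iterable, Sequence


def share_of_paths_with_channel(paths: Iterable[Sequence[str]], channel: str) -> float:
    """Return the fraction of converting paths that include `channel` at any position."""
    paths = list(paths)
    if not paths:
        return 0.0
    hits = sum(1 for path in paths if channel in path)
    return hits / len(paths)


def last_touch_share(paths: Iterable[Sequence[str]], channel: str) -> float:
    """Return the fraction of converting paths where `channel` is the final touchpoint."""
    paths = [p for p in paths if p]
    if not paths:
        return 0.0
    hits = sum(1 for path in paths if path[-1] == channel)
    return hits / len(paths)


# Hypothetical converting paths, ordered from first touch to last touch.
example_paths = [
    ["paid_search", "email", "direct"],
    ["paid_social", "email", "paid_search"],
    ["organic", "direct"],
    ["email", "direct"],
    ["paid_search"],
]

email_any_position = share_of_paths_with_channel(example_paths, "email")
email_last_touch = last_touch_share(example_paths, "email")
print(f"email in path: {email_any_position:.0%}, email as last touch: {email_last_touch:.0%}")
```

In this made-up sample, email appears in three of five paths yet is never the last touch, so a last-click view would report it as contributing nothing.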

That kind of misread happens constantly when teams lack a shared data model. And it has real commercial consequences, because budget decisions follow the numbers that get reported, not the ones that stay buried in a tool nobody checks.

What a Unified Data Model Actually Means in Practice

The phrase “unified data model” sounds architectural and expensive. Sometimes it is. But at its core, it means something much simpler: everyone in the organisation agrees on what things mean, how they are measured, and where the authoritative number lives.

That covers three things.

First, definitions. What counts as a conversion? What is a session? What qualifies as an active user? These seem obvious until you realise that GA4’s definition of an active user differs from how your paid social platform counts reach, which differs from how your email platform counts engagement. Understanding how GA4 defines users is a useful starting point, but the definitions still need to be documented, agreed upon, and applied consistently across every tool your team uses.

Second, ownership. Someone has to be responsible for maintaining the model. Not just setting it up once and moving on, but auditing it regularly, catching implementation drift, and flagging when a new tool or campaign type doesn’t fit the existing framework. Without ownership, the model degrades quietly over time until the numbers stop making sense and nobody can explain why.

Third, a single reporting layer. This doesn’t have to be a full data warehouse, though at scale that is often the right answer. It can be a well-structured Looker Studio dashboard pulling from a single GA4 property with consistent UTM tagging and agreed attribution settings. The point is that there is one place where the official numbers live, and that place is not someone’s personal spreadsheet.

If you’re building or refining your analytics practice, the broader Marketing Analytics hub covers the full stack, from GA4 configuration to attribution modelling to reporting frameworks that hold up under commercial scrutiny.

The Governance Problem Nobody Wants to Talk About

Most analytics fragmentation is not a technology failure. The tools are capable of producing coherent, consistent data. The failure is almost always a governance failure.

Tools get added to the stack without a proper integration plan. Campaign naming conventions get created by one person and ignored by the next. UTM parameters are applied inconsistently because there is no enforced standard. Someone rebuilds the GA4 property mid-year and the historical data becomes incomparable. A new agency starts running paid search and uses their own conversion tracking without aligning to the existing model.

Each of these is a small failure in isolation. Together, they produce data that is technically present but practically useless for any kind of cross-channel or longitudinal analysis.

When I was growing an agency from around 20 people to over 100, one of the most important things we did was build a shared measurement framework that every new client account had to follow from day one. Not because we were obsessive about process, but because we had learned the hard way what happens when you don’t. You end up six months into a campaign unable to answer a basic question like “which channel is driving the most efficient cost per acquisition” because the data from each channel is structured differently and can’t be compared.

Governance is boring. It doesn’t get presented at industry events. But it is the difference between analytics that drive decisions and analytics that generate slide decks.

Forrester has written about this tension between the appeal of sophisticated analytics tools and the more mundane work of making sure the underlying data is trustworthy. Their warning about black-box analytics models is worth reading, particularly the point that complexity in the model doesn’t compensate for weakness in the data feeding it.

How Attribution Models Break Without a Unified Foundation

Attribution is the area where the absence of a unified data model does the most damage, because attribution models are only as honest as the data they operate on.

Every major ad platform runs its own attribution model, and every one of them is designed to make that platform look as valuable as possible. Google Ads will show you data-driven attribution that credits Google touchpoints generously. Meta will show you 7-day click, 1-day view attribution that captures a wide range of conversions. Each platform is telling you a story about its own contribution, and those stories don’t add up to 100% of your actual revenue. They can add up to well over 100%, because several platforms are claiming credit for the same conversions.
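The arithmetic is easy to check for yourself. The sketch below compares what each platform claims against one authoritative count; every figure and platform label in it is invented for illustration.

```python
# Hypothetical figures: conversions each platform claims for the same month.
platform_claimed = {
    "google_ads": 180,
    "meta": 150,
    "email_platform": 90,
}

# Hypothetical authoritative count for the same period (for example, from the CRM).
actual_conversions = 280

total_claimed = sum(platform_claimed.values())
claim_ratio = total_claimed / actual_conversions

print(f"Platforms claim {total_claimed} conversions against {actual_conversions} actual.")
print(f"Claimed credit is {claim_ratio:.0%} of what actually happened.")
```

Anything above 100% is overlap: the same conversion being counted by more than one platform.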

A unified data model doesn’t eliminate this problem, but it forces you to confront it honestly. When you pull all channel data into a single model with a consistent attribution logic, the inflated numbers from individual platforms become visible. You can see where the overlap is. You can make a more considered judgment about which touchpoints are genuinely driving incremental value rather than simply appearing in the path to purchase.
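As a rough illustration of what "a consistent attribution logic" can look like, the sketch below applies one rule to every channel. It uses linear attribution (equal credit to each touchpoint) purely because it is simple to follow; that choice is an assumption for the example, not a recommendation, and the paths and channel names are hypothetical.

```python
from collections import defaultdict
from typing import Dict, Iterable, Sequence


def linear_attribution(paths: Iterable[Sequence[str]]) -> Dict[str, float]:
    """Split one unit of credit per converting path equally across its touchpoints."""
    credit: Dict[str, float] = defaultdict(float)
    for path in paths:
        if not path:
            continue  # a conversion with no recorded touchpoints gets no channel credit
        share = 1.0 / len(path)
        for channel in path:
            credit[channel] += share
    return dict(credit)


# Hypothetical converting paths pulled into one model, one list per conversion.
unified_paths = [
    ["google_ads", "email", "meta"],
    ["meta", "google_ads"],
    ["email"],
    ["google_ads"],
]

credit_by_channel = linear_attribution(unified_paths)
total_credit = sum(credit_by_channel.values())

for channel, credit in sorted(credit_by_channel.items()):
    print(f"{channel}: {credit:.2f}")
print(f"total credit: {total_credit:.2f} across {len(unified_paths)} conversions")
```

Whatever rule you choose, the property that matters is the last line: total credit equals the number of conversions, so no channel can claim more than its share. It still says nothing about incrementality, which needs evidence about what would have happened without the activity.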

I judged the Effie Awards for several years, and one pattern I noticed consistently was that the strongest entries were not the ones with the most impressive platform-reported ROAS. They were the ones where the team had done the harder work of understanding incrementality, of asking what would have happened without this activity, and of presenting evidence that was grounded in a coherent measurement framework rather than a collection of best-case platform metrics.

That kind of rigour starts with a unified data model. You cannot answer incrementality questions honestly when your data is fragmented across tools that each have an interest in telling you they are indispensable.

The Role of GA4 in a Unified Measurement Architecture

GA4 is a capable tool, but it is not, by itself, a unified data model. It is one component of one. Understanding the distinction matters.

GA4 gives you event-based tracking across web and app, a flexible data model that can be extended with custom dimensions and metrics, and integration with Google’s broader ecosystem including BigQuery for raw data export. That is genuinely useful infrastructure. But GA4 still has the same fundamental limitations that every analytics tool has: it is a perspective on user behaviour, not a complete record of it.

Referrer data gets lost. Bot traffic inflates session counts in ways that are hard to fully filter. Cross-device journeys remain difficult to stitch together accurately without a strong identity resolution approach. Implementation quirks, particularly around consent mode and cookieless measurement, mean that the numbers you see are modelled estimates in a growing proportion of cases, not exact counts.

This is not a criticism of GA4 specifically. It is a description of the reality of web analytics in 2026. Using GA4 data to inform content strategy is genuinely valuable, but only if you are treating the data as directional signal rather than precise measurement. The moment you start making major budget decisions based on exact GA4 numbers without cross-referencing other signals, you are building on shakier ground than you probably realise.

What GA4 can do well within a unified model is serve as the central behavioural data layer, with consistent event naming, a clean taxonomy of conversion actions, and BigQuery integration that allows you to join GA4 data with CRM data, revenue data, and other sources. That is where GA4 earns its place in the stack, not as a standalone oracle, but as one well-configured layer in a broader architecture.
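In practice that join would usually be a query in your warehouse, but the logic is simple enough to sketch in a few lines. This example assumes a shared `lead_id` has been captured on both sides; that key, the field names, and the sample rows are all hypothetical and do not reflect the actual GA4 export schema or any particular CRM.

```python
from collections import defaultdict
from typing import Dict, List, Tuple

# Hypothetical, already-flattened behavioural rows (one per lead-generating event).
ga4_events = [
    {"lead_id": "L-001", "source": "google", "medium": "cpc"},
    {"lead_id": "L-002", "source": "newsletter", "medium": "email"},
    {"lead_id": "L-003", "source": "google", "medium": "cpc"},
]

# Hypothetical CRM rows; revenue here is treated as the authoritative figure.
crm_deals = [
    {"lead_id": "L-001", "status": "closed_won", "revenue": 1200.0},
    {"lead_id": "L-002", "status": "closed_won", "revenue": 800.0},
    {"lead_id": "L-004", "status": "closed_won", "revenue": 500.0},
]

UNMATCHED = ("(unmatched)", "(unmatched)")


def revenue_by_source(events: List[dict], deals: List[dict]) -> Dict[Tuple[str, str], float]:
    """Attach each won deal to the source/medium recorded for its lead, keeping unmatched revenue visible."""
    source_by_lead = {e["lead_id"]: (e["source"], e["medium"]) for e in events}
    totals: Dict[Tuple[str, str], float] = defaultdict(float)
    for deal in deals:
        if deal["status"] != "closed_won":
            continue
        key = source_by_lead.get(deal["lead_id"], UNMATCHED)
        totals[key] += deal["revenue"]
    return dict(totals)


joined = revenue_by_source(ga4_events, crm_deals)
for (source, medium), revenue in sorted(joined.items()):
    print(f"{source} / {medium}: {revenue:.2f}")
```

Keeping an explicit unmatched bucket is deliberate. Revenue that cannot be tied back to behavioural data should be visible in the report rather than silently dropped.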

Getting UTM tagging right is foundational to making any of this work. Consistent keyword and campaign tracking in GA4 is the kind of unglamorous work that separates teams with reliable attribution from teams that are guessing.

Building Toward a Single Source of Truth Without a Six-Figure Project

The phrase “single source of truth” has been so thoroughly adopted by enterprise software vendors that it now carries an implicit price tag. The assumption is that you need a data warehouse, a CDP, a team of data engineers, and a six-month implementation before you can have coherent marketing data.

That is not accurate. The tools help, but the work is primarily conceptual and organisational, not technical.

Start with a measurement plan. Document every metric that matters to your business, define exactly how each one is calculated, and specify which tool is the authoritative source for each. If revenue is the authoritative number, it comes from finance, not from GA4 or your ad platform. If leads are the authoritative number, they come from the CRM, not from form submission events in your analytics tool. This sounds obvious. Most organisations have never written it down.
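A measurement plan can live in a document or spreadsheet, but writing it as structured data makes the gaps obvious. The sketch below is one possible shape; the class, field names, metric entries, and owners are all hypothetical examples rather than a prescribed format.

```python
from dataclasses import dataclass
from typing import Dict


@dataclass(frozen=True)
class MetricDefinition:
    name: str
    definition: str            # exactly how the metric is calculated
    authoritative_source: str  # the one system whose number is official
    owner: str                 # who maintains the definition


# Hypothetical entries; replace with the metrics that matter to your business.
MEASUREMENT_PLAN: Dict[str, MetricDefinition] = {
    m.name: m
    for m in [
        MetricDefinition(
            name="revenue",
            definition="Sum of invoiced, paid deals in the period",
            authoritative_source="finance",
            owner="finance_lead",
        ),
        MetricDefinition(
            name="leads",
            definition="New CRM contacts created from a marketing form",
            authoritative_source="crm",
            owner="marketing_ops",
        ),
    ]
}


def authoritative_source(metric_name: str) -> str:
    """Look up which system owns the official number for a metric."""
    try:
        return MEASUREMENT_PLAN[metric_name].authoritative_source
    except KeyError:
        raise KeyError(f"'{metric_name}' is not in the measurement plan") from None


print(authoritative_source("revenue"))
```

The useful part is the failure case. If someone reports a metric that is not in the plan, the lookup fails loudly instead of letting an undefined number into the deck.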

Then enforce consistent UTM tagging across every channel. This is the single highest-leverage action most marketing teams can take to improve their data quality. Without it, GA4 cannot reliably attribute traffic to the right source, and your channel-level analysis will be systematically wrong. A shared UTM taxonomy, documented in a spreadsheet and enforced through a campaign launch checklist, costs nothing and fixes a disproportionate share of attribution problems.
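A checklist is easier to enforce when part of it is automated. The sketch below checks a landing-page URL against a UTM taxonomy using only the Python standard library. The required parameters, the list of allowed mediums, and the lowercase naming rule are assumptions for illustration; substitute whatever your own taxonomy specifies.

```python
import re
from typing import List
from urllib.parse import parse_qs, urlparse

# Hypothetical taxonomy: adjust to match your documented standard.
REQUIRED_PARAMS = ("utm_source", "utm_medium", "utm_campaign")
ALLOWED_MEDIUMS = {"cpc", "email", "paid_social", "organic_social", "referral"}
VALUE_PATTERN = re.compile(r"[a-z0-9_-]+")  # lowercase letters, digits, underscore, hyphen


def utm_problems(url: str) -> List[str]:
    """Return a list of taxonomy violations for `url`; an empty list means it passes."""
    params = parse_qs(urlparse(url).query)  # blank values are dropped by default
    problems: List[str] = []

    for name in REQUIRED_PARAMS:
        values = params.get(name)
        if not values:
            problems.append(f"missing {name}")
            continue
        if not VALUE_PATTERN.fullmatch(values[0]):
            problems.append(f"{name}={values[0]!r} breaks the lowercase naming rule")

    medium = params.get("utm_medium", [""])[0]
    if VALUE_PATTERN.fullmatch(medium) and medium not in ALLOWED_MEDIUMS:
        problems.append(f"utm_medium={medium!r} is not in the agreed list")

    return problems


good = "https://example.com/offer?utm_source=newsletter&utm_medium=email&utm_campaign=spring_sale"
bad = "https://example.com/offer?utm_source=Newsletter&utm_medium=Email"

print(utm_problems(good))
print(utm_problems(bad))
```

Run against every URL in a campaign brief before launch, a check like this catches the casing and missing-parameter errors that otherwise split one channel into several rows in your reports.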

From there, build a reporting layer that pulls from your agreed authoritative sources. A well-structured marketing dashboard, whether in Looker Studio, Tableau, or even a well-maintained shared Google Sheet, can serve as a functional single source of truth for most teams until scale justifies a warehouse. The principles of a good marketing dashboard apply regardless of the tool: consistent definitions, clear ownership, and a layout that connects activity to outcomes rather than just reporting activity.

The early work here is worth doing carefully. As this piece on analytics preparation argues, the failure mode in web analytics is almost always a planning failure rather than a tool failure. The tools are ready. The question is whether you have done the groundwork to use them coherently.

What Changes When You Have a Unified Model

The most immediate change is that meetings get shorter. When everyone is working from the same numbers, you stop spending the first twenty minutes of every performance review debating which figure is correct. That time goes toward actually discussing what the numbers mean and what to do about them.

The more consequential change is that budget decisions become more defensible. When you can show, from a single coherent model, that one channel is generating leads at half the cost of another with comparable downstream conversion rates, the case for reallocation is hard to argue with. When your data is fragmented, every channel owner can find a metric that makes their channel look good, and the budget conversation becomes political rather than analytical.

There is also a compounding effect over time. A unified model that is consistently maintained produces longitudinal data that is actually comparable. You can look at performance this quarter against the same quarter two years ago and trust that you are comparing like with like. That kind of historical analysis is extremely valuable for understanding seasonality, the long-term impact of brand investment, and the trajectory of channel efficiency over time.

BCG’s work on data and analytics in financial services makes a point that translates directly to marketing: organisations that invest in data infrastructure and governance consistently outperform those that treat data as an afterthought. The competitive advantage isn’t in having more data. It’s in having data you can actually use.

For content-focused teams, the unified model also changes how you evaluate content performance. Content marketing metrics are notoriously hard to connect to business outcomes when each piece of content is measured in isolation using platform-native metrics. Within a unified model, you can start to understand how content contributes to the broader funnel, which pieces are generating qualified traffic that converts, and which are generating volume that goes nowhere.

That is a different, more commercially grounded conversation than the one most content teams are having.

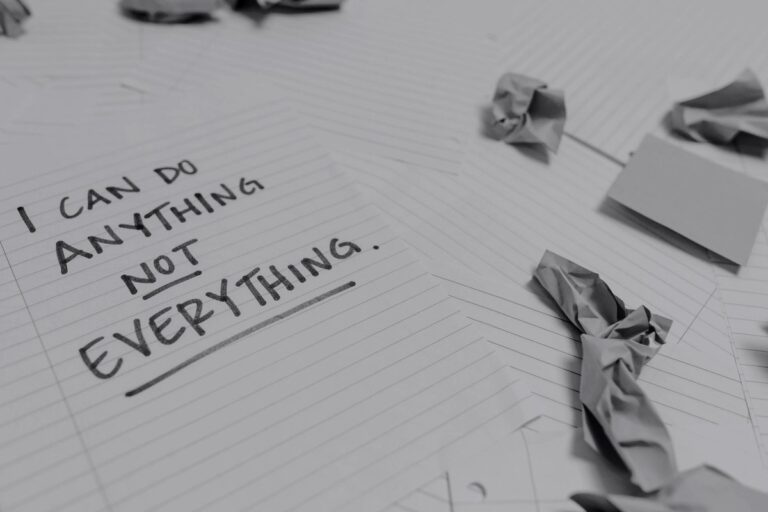

The Honest Limitation You Need to Accept

A unified data model will not give you perfect data. Nothing will. The honest reality of marketing measurement in 2026 is that a meaningful and growing proportion of user behaviour is untrackable, whether because of consent choices, browser restrictions, ad blockers, or the inherent limitations of cross-device identity resolution.

The goal is not perfect measurement. The goal is honest approximation. A unified model gives you a consistent, transparent framework within which the approximation is made. That is far more valuable than precise-looking numbers that are generated by inconsistent logic across disconnected tools.

I have spent two decades working with analytics data across hundreds of clients and 30 industries. The teams that make the best decisions are not the ones with the most sophisticated tools. They are the ones who understand what their data can and cannot tell them, who treat trends and directional movement as more reliable than exact point-in-time numbers, and who have built enough trust in their measurement framework that they can act on it without spending half their time questioning the inputs.

That trust is built through consistency, governance, and the kind of unglamorous definitional work that rarely gets celebrated. But it is the foundation that everything else sits on.

If you are working through the broader challenge of making your analytics practice more coherent and commercially grounded, the Marketing Analytics section of The Marketing Juice covers the full range of topics, from GA4 configuration to measurement frameworks to the practical realities of attribution in a privacy-first environment.

About the Author

Keith Lacy is a marketing strategist and former agency CEO with 20+ years of experience across agency leadership, performance marketing, and commercial strategy. He writes The Marketing Juice to cut through the noise and share what works.